As part of the StudySmart2 project, five students developed posters which focused on different aspects of using Artificial Intelligence in their everyday lives.

For each poster (which could be used for display), there is also an accessible Word version:

- AI for wellbeing development. [Posters covering the use, risks and tools available]

- AI for personalised learning. [Personalised learning poster]

- AI for promoting cultural awareness and inclusivity. [Cultural awareness poster]

- Tips to boost English with AI. [English tips poster]

These posters also complement the video reflections from the students.

Please note that these posters mention some tools which are not officially supported or recognised by the University of Northampton. Copilot is the main supported University of Northampton generative artificial intelligence tool. Please refer to the guidance on using unsupported or external tools if you decide to use other tools.

Blackboard’s AI design assistant, launched last December, has quickly proven to be a helpful tool for developing content within NILE courses. Our early data shows that while most users create tests independently, the AI design assistant is especially popular for test and quiz creation. Senior Lecturer in Nursing, Julie Holloway, shares her positive experience using AI to support her pharmacology students in a prescribing programme.

“We are using AI to help generate new exam questions, particularly in relation to pharmacology content,” Julie explains. Due to the parameters of professional regulation within independent prescribing, she’s unable to provide students with past exam papers for their revision. Instead, AI has allowed her to create supplemental revision questions directly linked to each pharmacology lecture, providing students with valuable practice material aligned with their coursework.

One notable feature in the AI design assistant enables users to select existing course materials for the AI to draw from when generating content. Julie has leveraged this by selecting specific pharmacology materials, allowing her to create questions that closely reflect the lectures and give students an efficient tool for self-assessment. “The process was easier than I originally thought,” she adds.

As well as improving efficiency, Julie notes, the AI can inspire new ways of phrasing questions. “Exam questions can become repetitive,” she says, and the AI’s suggestions help to vary them and enhance the student experience by supplementing students’ revision.

In thinking about the limitations of using AI to create test questions, Julie points out that the AI occasionally generates questions that aren’t entirely relevant. However, she highlights, “I think this will improve as we get more experienced with working with AI and search terms, etc.”

Would she recommend this approach to other educators? “Absolutely,” she says, encouraging colleagues to explore the tool themselves. “Just give it a try—it’s not as scary as you think!”

If you would like any support using any of the AI Design Assistant tools in NILE, then please contact your Learning Technologist. If you are unsure who this is, then please select this link: https://libguides.northampton.ac.uk/learntech/staff/nile-help/who-is-my-learning-technologist

For more information on the AI Design Assistant, please select this link: https://libguides.northampton.ac.uk/learntech/staff/nile-guides/ai-design-assistant

As part of a longitudinal study into student perspectives of Generative Artificial Intelligence (GenAI), Learning Technologists Richard Byles and Kelly Lea, along with Head of Learning Technology Rob Howe, have published the results of their second student survey, launched in February 2024.

This 2024 report reveals a significant shift in the role of GenAI in students’ academic lives and their changing motivations to engage with these technologies. Notably, the survey highlights a marked increase in student use of GenAI since the 2023 survey, with distinct differences in usage and views between UK and international students.

Key findings indicate a growing awareness among students about both the benefits and limitations of GenAI. Many students appreciate its ability to assist with summarising content, generating ideas, and editing text. However, they are increasingly questioning where data is gathered from and its reliability. Students remain ethically aware and want to ensure academic integrity when using these tools.

The full report can be viewed below.

Report Link (PDF): Exploring Student Perspectives of Generative Artificial Intelligence Tools at the University of Northampton: A Survey-Based Study

R Byles, K Lea, R Howe

In this short film, Dr Mu Mu, Programme Leader for the AI and Data Science course, and students discuss a new project that aims to improve student access to information through the development of a new AI chatbot.

Dr Mu Mu explains that students often have common questions about their schedules, deadlines, and accommodation. To address these needs, second-year students are tasked with creating an AI chatbot. Three BSc Artificial Intelligence & Data Science students share insights into the development process.

Dr Mu Mu emphasises the broader learning outcomes: “It’s not only about the technical challenges, but also thinking about ethics, legal issues, and how to make the chatbot more personalised.”

The practical experience gained from this project has led to the students gaining successful placements and internships in prestigious UK organisations. This project exemplifies how our programme not only equips students with technical expertise but also prepares them to navigate and address real-world challenges.

The following report provides an overview of the findings of the Generative AI Staff Survey, which was available to all staff at UON from 12th February to 12th April 2024.

The purpose of the survey is to explore staff understanding and use of Generative Artificial Intelligence tools, and their impact on staff roles at UON.

Author: R Howe. Researchers: K Lea, R Byles.

Catch up with the latest news, case studies, and other interesting stories from the Learning Technology Team.

Download the Learning Technology Team Newsletter – Semester 2, 2023/24 (PDF, 560 KB)

With an overload of information and such dichotomous opinions about Artificial Intelligence (AI), it is difficult to know where to begin, especially if you are yet to experience using AI at all. One starting point is to find out where you are with your own knowledge, and the Jisc discovery tool can assist with this.

The discovery tool, which was introduced to UON in 2020, is a developmental tool that students and staff can use to self-assess their digital capabilities, identify their strengths, and highlight opportunities to develop skills. The tool has been recently updated to include a question set for both staff and students on their capability and proficiency with AI and generative AI tools.

The question sets for students and staff have been developed with assistance from Jisc and align with the latest AI advice and AI guiding principles developed by Jisc and the Russell Group on the responsible and equitable use of AI to enhance learning and teaching.

How the discovery tool helps students and teachers

The new question sets provide users with a basis to self-assess their skills and knowledge of what AI is and how it could, or should, be used in the context of their studies or role.

Once users complete the question set, they can then access a personalised report with a confidence rating which will vary from ‘developing’ through ‘capable’ to ‘proficient’ depending on their experience. The report also provides recommendations and courses on how to advance knowledge around AI.

Users can repeat any of the discovery tool’s question sets at any point and therefore keep a dynamic view of their confidence levels.

Where can I access the tool?

Click here to log straight into the discovery tool and the AI question set, or copy and paste the link below into your address bar.

How can I support students?

To assist students in enhancing their digital skills and their knowledge and understanding of AI, we have put together a student guide which can be found here. It may also be helpful to add a link to this guide, or to the discovery tool itself, within NILE courses.

What if I would like to know more?

For more information about how to use the discovery tool, see: https://digitalcapability.jisc.ac.uk/resources-and-community/discovery-tool-guidance/staff/

For further information about the AI design assistant in NILE or Padlet’s new AI features, please get in touch with your Learning Technologist.

Helpful links

The new features in Blackboard’s April upgrade will be available on Friday 5th April. This month’s upgrade includes the following new/improved features to Ultra courses:

- Anonymous discussions

- AI Design Assistant: Select course items/context picker enhancements

- Duplicate test/form question option, plus changes to default test question value

- Likert form questions include options for 4 and 6, as well as 3, 5, and 7

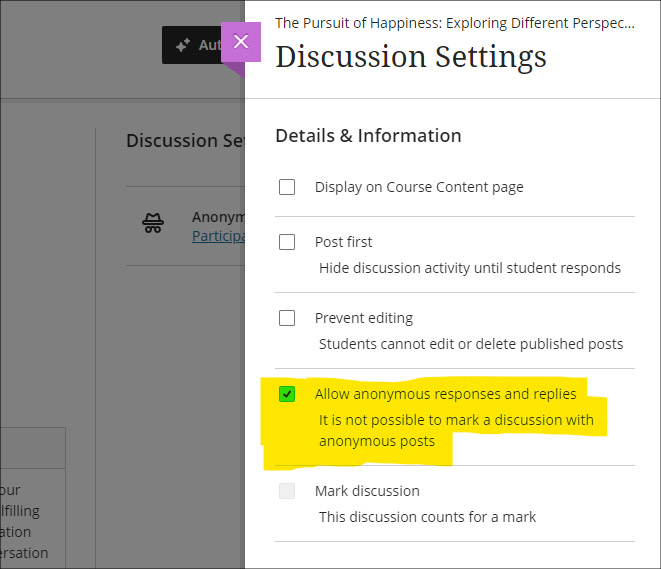

Anonymous discussions

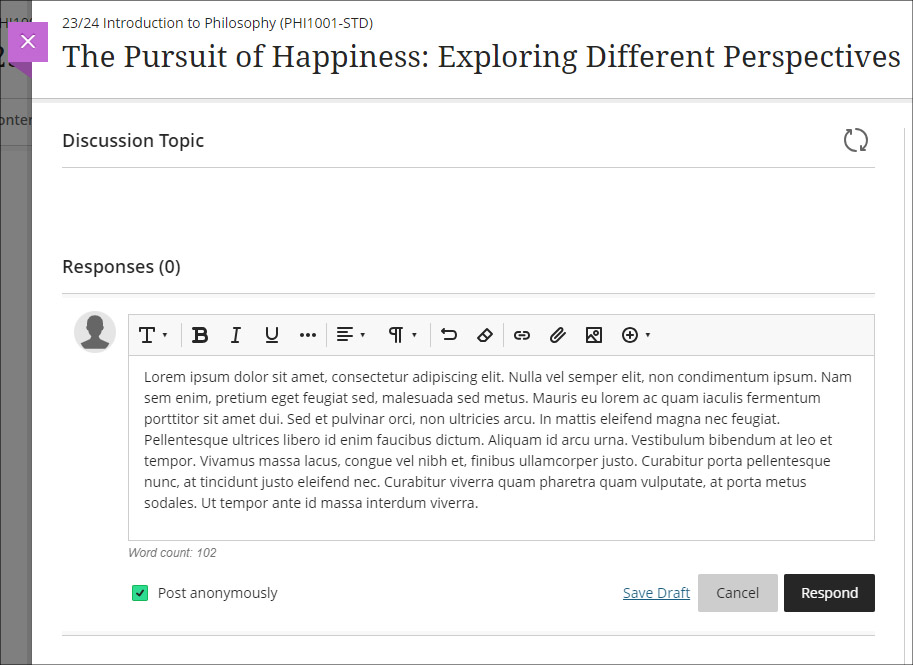

Following feedback from staff, April’s upgrade will allow staff to set up Ultra discussions so that students can post and reply to posts anonymously. After the upgrade, the option to allow anonymous responses and replies will be available in the ‘Discussion Settings’ panel.

Please note that selecting ‘Allow anonymous responses and replies’ does not mean that all replies and responses will be anonymous; rather it means that students and staff can choose to post anonymously if they want to. To post anonymously, the ‘Post anonymously’ checkbox will need to be selected. Once posted, the anonymity of a post cannot be changed – i.e., an anonymous post cannot be de-anonymised by the person who posted it, and a non-anonymous post cannot be changed to anonymous.

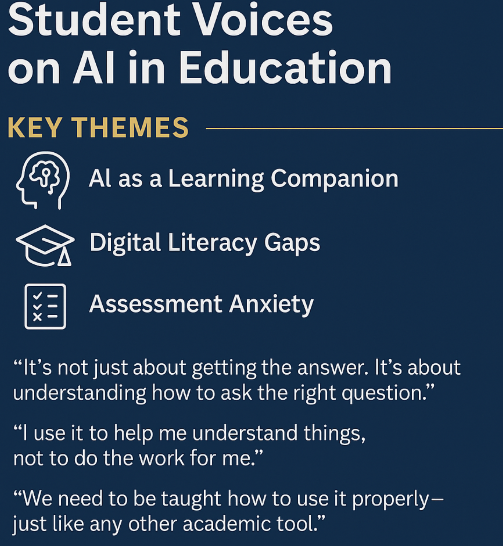

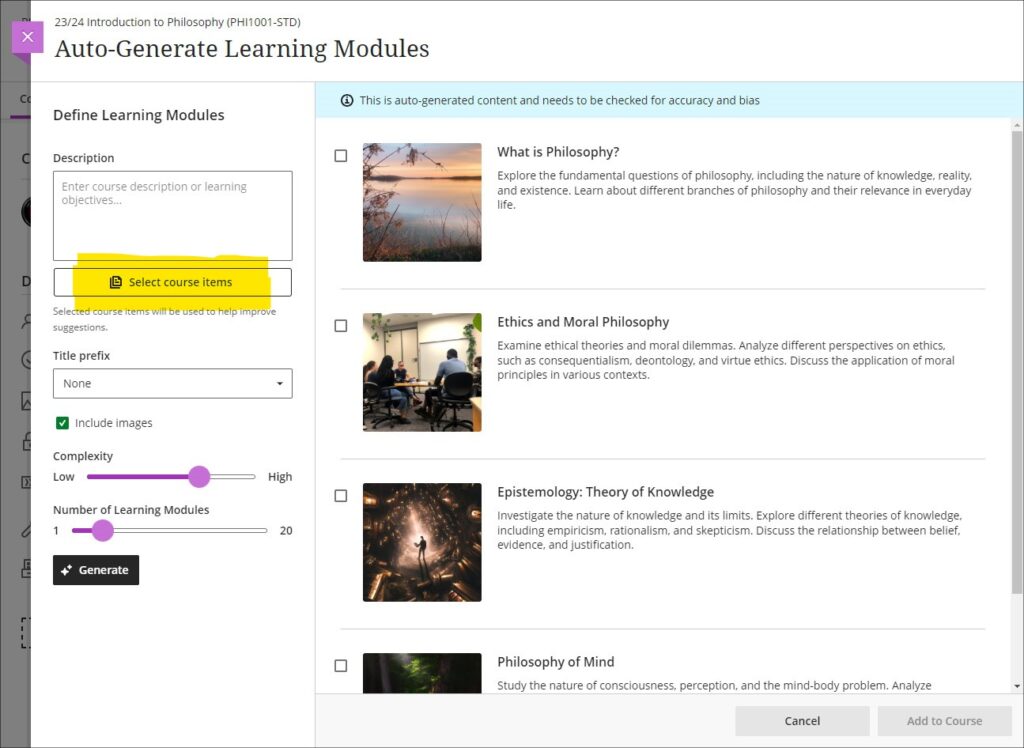
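For readers who find it easier to follow rules as code, the anonymity behaviour described above can be sketched in a few lines of Python. This is only an illustration of the rules as stated in this post, not Blackboard's implementation: the `Post` class, the `create_post` function, the "Anonymous" display label, and the error raised when the setting is off are all hypothetical names and choices made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: fields cannot be changed after the post is created
class Post:
    author: str
    text: str
    anonymous: bool

    def displayed_author(self) -> str:
        # "Anonymous" is an assumed label, used here only for illustration.
        return "Anonymous" if self.anonymous else self.author


def create_post(author: str, text: str, post_anonymously: bool, allow_anonymous: bool) -> Post:
    # The per-post choice only applies when the discussion setting is switched on.
    # Raising an error here is an assumption made to keep the sketch simple.
    if post_anonymously and not allow_anonymous:
        raise ValueError("Anonymous posting is not enabled for this discussion")
    return Post(author=author, text=text, anonymous=post_anonymously)
```

Enabling the discussion setting does not make any post anonymous by itself; each post is anonymous only if the poster chooses it, and because the sketch freezes the `Post` object, that choice cannot be reversed afterwards.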

AI Design Assistant: Select course items/context picker enhancements

Following last month’s upgrade which introduced the context picker (the ‘Select course items’ tool) for auto-generated test questions, April’s upgrade introduces the option to select course items when auto-generating learning modules, assignments, and discussion and journal prompts.

The purpose of the ‘Select course items’ tool is to allow staff to specify exactly which resources should be used when auto-generating content. If ‘Select course items’ is used, the auto-generated content will be based only upon the items selected. Where no course items are selected, auto-generated content will be based upon the course title.
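The selection rule described above can be summarised as a short Python sketch. It is a simplified illustration of the behaviour described in this post, under the assumption that the choice is a simple either/or; the function name `generation_context` and its parameters are hypothetical and do not correspond to anything in Blackboard itself.

```python
def generation_context(course_title: str, selected_items: list[str]) -> list[str]:
    """Return the material that auto-generated content would be based on."""
    if selected_items:
        # Items were chosen with 'Select course items': use only those items.
        return list(selected_items)
    # No items chosen: fall back to the course title alone.
    return [course_title]
```

For example, calling `generation_context("Pharmacology for Prescribers", [])` would fall back to the course title, whereas passing a list of lecture resources would restrict the context to those resources.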

You can find out more about the AI Design Assistant and how to use it at: Learning Technology Team: AI Design Assistant

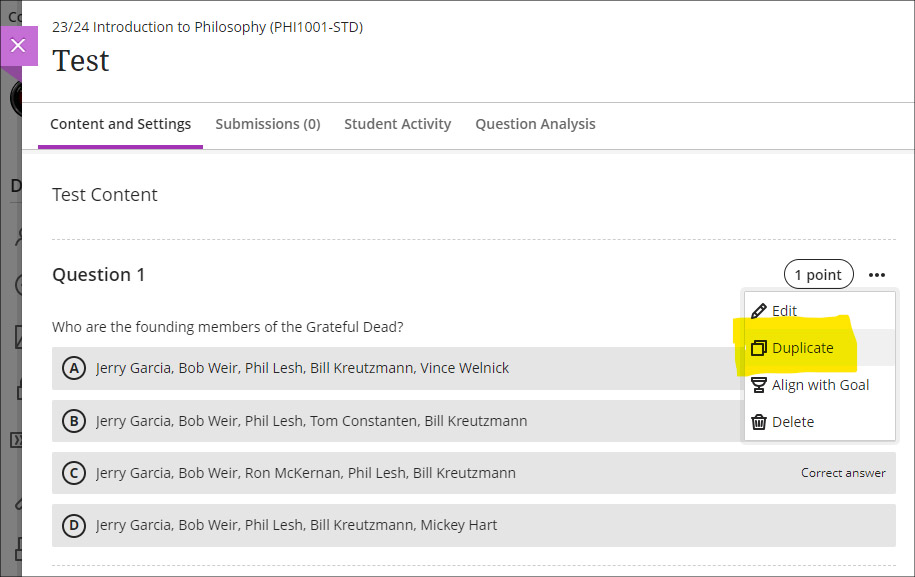

Duplicate test/form question option, plus change to default test question value

The April upgrade introduces the ability for staff to duplicate test and form questions. Additionally, following the upgrade the default point value for newly created test questions will be changed from 10 points to 1 point.

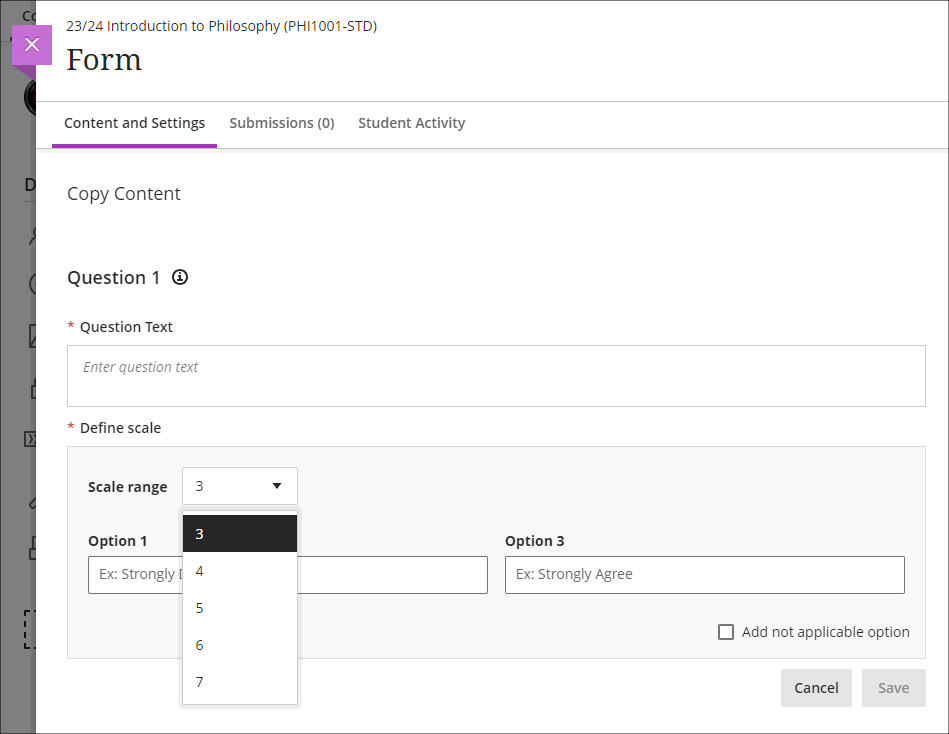

Likert form questions include options for 4 and 6, as well as 3, 5, and 7

The February 2024 upgrade introduced the ‘Forms’ tool to Ultra courses. One of the question types available in forms is a Likert question; however, the original release only included options for staff to select Likert scales with 3, 5, or 7 points. April’s upgrade will add options to choose scales with 4 or 6 points.

More information

As ever, please get in touch with your learning technologist if you would like any more information about the new features available in this month’s upgrade: Who is my learning technologist?

In this short video UON Learning Development tutor Anne-Marie Langford discusses her work employing generative AI to produce sample passages of academic writing for analysis and refinement in development workshops.

Anne-Marie notes that the use of AI-generated text can prompt students to critique academic writing, encouraging them to develop higher order thinking skills. This is particularly valuable for scrutinising the shortcomings of AI-generated text: generative AI tools can be useful for identifying and presenting knowledge but are less adept at applying, analysing and evaluating it.

While recognising the time-saving potential of chatbots such as ChatGPT and their uses in enhancing student learning, she underscores the limitations of generative AI in academic writing and referencing. Anne-Marie emphasises the importance of students adopting a critical, ethical and well-informed approach to using generative AI, urging them to cultivate their own critical voices and refine their skills.

By incorporating text from generative tools into her sessions, Anne-Marie exemplifies the advantages of modelling critical use of generative AI with students.

This short film features three BA Fashion, Textiles, Footwear & Accessories students discussing their experiences using Generative AI (GAI) in their projects. The students demonstrate diverse applications of GAI, highlighting how they tailor the technology to their individual creative needs.

The film also features Subject Head Jane Mills, who discusses the potential of AI to support students and outlines the introduction of a new AI logbook, designed to provide a framework for students to confidently explore and utilise GAI for brainstorming and research purposes.
