The new features in this month’s Blackboard upgrade will be available from Friday 5th June. This month’s upgrade includes the following new/improved features to Ultra courses:

- Provide answer-level feedback for multiple choice and multiple answer questions

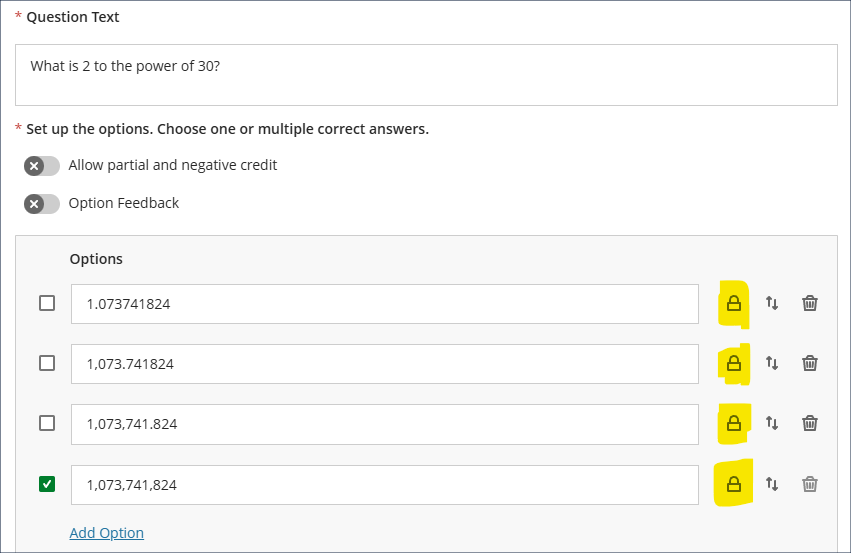

- Lock response options in a fixed position for multiple choice and multiple answer questions in Blackboard tests

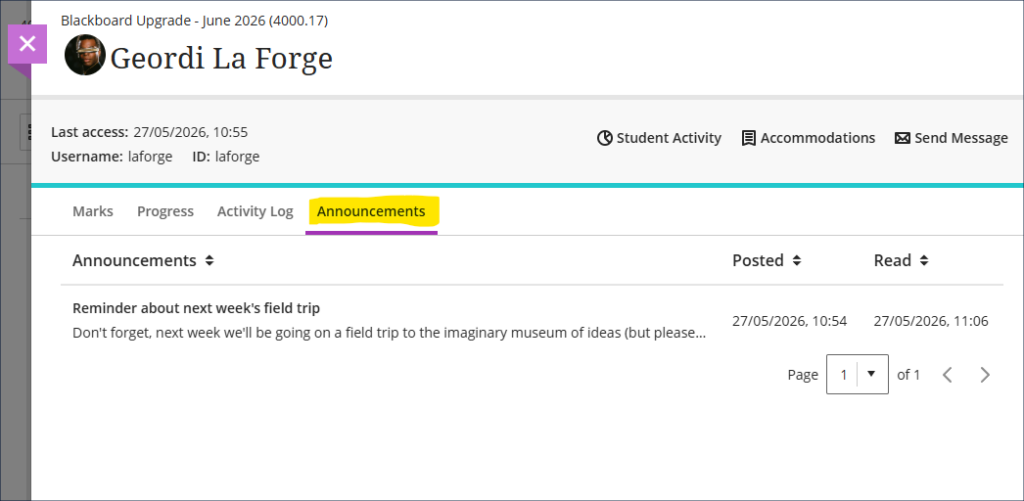

- Track announcement engagement by student

Provide answer-level feedback for multiple choice and multiple answer questions

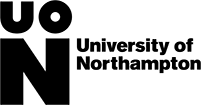

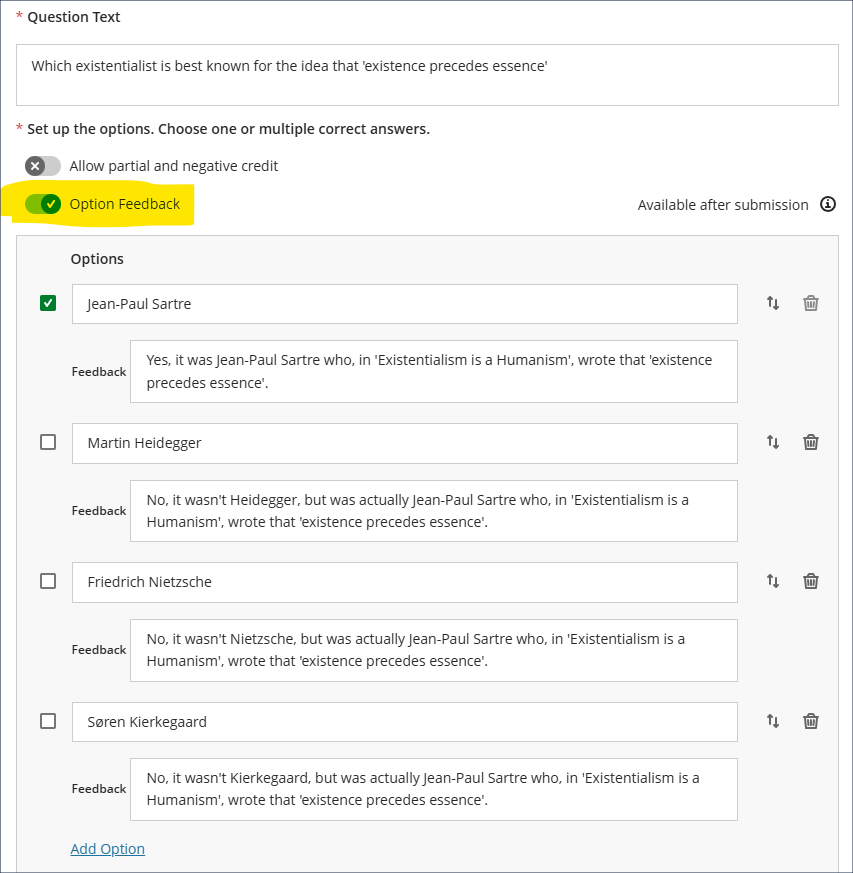

When creating or editing multiple choice or multiple answer questions, June’s upgrade will bring in an ‘Option Feedback’ tool which allows staff to add feedback to each answer response, thus providing students with more detailed feedback about why certain responses were correct or incorrect. When adding feedback, staff do not have to enter feedback for all responses, so can, for example, only add feedback for incorrect responses.

The release timing of the feedback is controlled in the assessment settings panel, so staff can choose when to provide the question feedback, e.g. immediately upon submission of the test, or only when all grades are posted.

More information about setting up and deploying tests in NILE is available at: Learning Technology Team – Ultra Workflow 3: Blackboard Test

Please note that in this initial release of the option feedback tool, students will see the feedback for all responses, not just the ones they selected (as shown in the screenshot below). However, this will be changed in a later release so that feedback is only displayed to each student for the responses they actually selected.

Lock response options in a fixed position for multiple choice and multiple answer questions in Blackboard tests

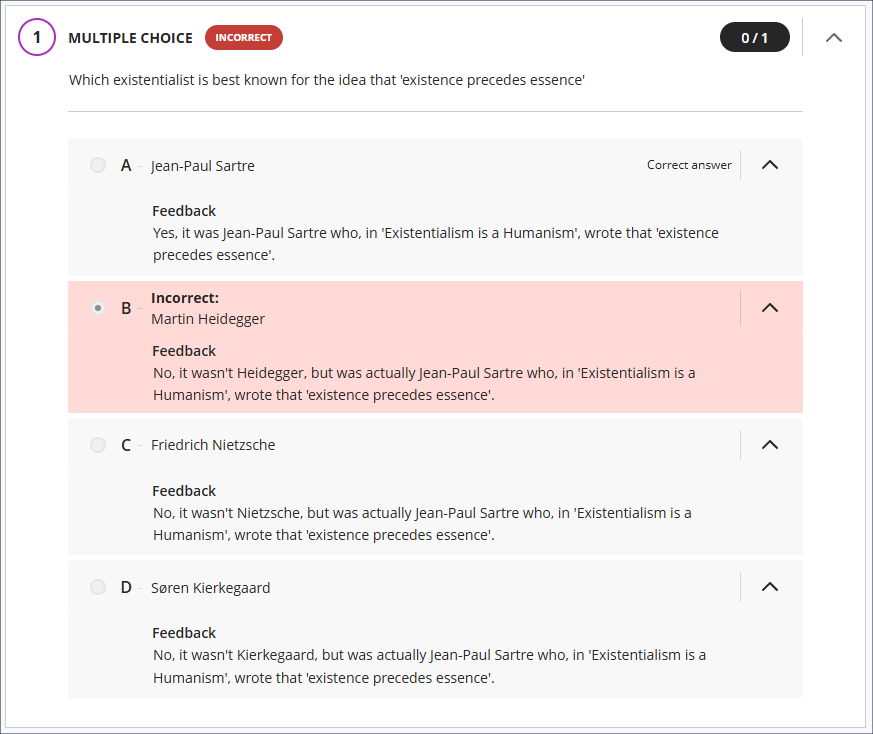

Following June’s upgrade, staff using Blackboard tests will be able to lock multiple choice/answer responses in place when using the ‘randomise answers’ setting. The main use case for this is where staff want to lock responses such as ‘all of the above’ or ‘none of the above’ in place while randomising the display of the remaining responses (as in the screenshot below).

However, the randomise answers test setting applies to all multiple choice/answer questions in a test (i.e. staff cannot specify that some questions should have randomised answers and some should not). The lock tool therefore also allows for scenarios in which staff want the responses to most questions randomised, but need all the responses to certain questions to be displayed in a particular order. For this reason, there is no limit to the number of responses that can be locked in a test question.

In the following screenshot, all the responses for the question are locked, so that although other test questions will have their responses randomised, this one will always display the responses in the order specified.

Note that the ability to lock answers in place will only display once randomise answers has been selected in the assessment settings panel.
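To illustrate the idea of randomising responses while keeping locked ones in place, here is a minimal Python sketch. It is not Blackboard’s implementation: the function name `randomise_with_locks` and its parameters are hypothetical, and it simply assumes that each locked response keeps its original position while the remaining responses are shuffled among the unlocked positions.

```python
import random


def randomise_with_locks(responses, locked_positions, rng=None):
    """Shuffle responses, keeping those at locked positions where they are.

    responses        -- list of response texts in the order staff entered them
    locked_positions -- set of zero-based positions that must not move
    rng              -- optional random.Random instance (hypothetical parameter,
                        useful for repeatable results)
    """
    rng = rng or random.Random()
    # Collect the responses that are free to move, then shuffle only those.
    unlocked = [r for i, r in enumerate(responses) if i not in locked_positions]
    rng.shuffle(unlocked)
    shuffled = iter(unlocked)
    # Rebuild the list: locked positions keep their original response,
    # all other positions take the next shuffled response.
    return [
        r if i in locked_positions else next(shuffled)
        for i, r in enumerate(responses)
    ]


options = ["Paris", "Rome", "Madrid", "All of the above"]
# Lock only the final response so it always appears last.
print(randomise_with_locks(options, {3}))
# Lock every response so the question always displays in the order entered.
print(randomise_with_locks(options, {0, 1, 2, 3}))
```

Locking every position, as in the second call, returns the responses in their original order, which corresponds to the case where one question must keep a fixed order while other questions in the test are randomised.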

More information about setting up and deploying tests in NILE is available at: Learning Technology Team – Ultra Workflow 3: Blackboard Test

Track announcement engagement by student

In addition to the Marks, Progress, and Activity Log tabs, the June upgrade will bring in an Announcements tab to the student overview panel in NILE courses, showing which announcements each student has marked as read and when. However (and it’s a big ‘however’), despite appearing to do so, the announcements tracker does not actually indicate whether a student has read an announcement or not, only whether they have marked it as read or not. Students can read an announcement without marking it as read, and can mark an announcement as read without reading it. Therefore, because there is no necessary relationship between marking an announcement as read and actually reading it, staff should treat the information in this tab (and also in the review student engagement with announcements panel) with an appropriate degree of caution.

Learning technology / NILE community group

Staff who are interested in finding out more about learning technologies and NILE are invited to join the Learning Technology / NILE Community Group on the University’s Engage platform. The purpose of the community is to share information and good practice concerning the use of learning technologies at UON. When joining the community, if you are prompted to log in please use your usual UON staff username and password. By joining the Learning Technology / NILE Community you will receive calendar invitations to our regular live community events:

Join the Learning Technology / NILE Community Group

More information

As ever, please get in touch with your learning technologist if you would like any more information about the new features available in this month’s upgrade: Who is my learning technologist?

We’re very excited to let you know that three academics from UON have each received a Blackboard Exemplary Course Program (ECP) Award for their NILE courses this month. The recipients are:

- Carey Allen for PSY1011: Positive Psychology

- Dr Muhammad Hijazy for HRMM094: Academic and Digital Skills for Professionals

- David Meechan for EYS3145: Research and Inquiry

The ECP is an international award which recognises staff who have demonstrated outstanding commitment to creating engaging, innovative, and student-centred learning experiences in their Blackboard (i.e. NILE) courses. To reach ECP standard is a major achievement, and effectively means that these NILE courses are amongst the best anywhere in the world!

“We are proud to recognise educators like Carey, Muhammad, and David, whose work reflects the creativity, care and intention behind impactful course design,” said Dr Lisa Clark, Associate Vice President of Academic Transformation at Blackboard.

As well as being a very well-deserved recognition for Carey, Muhammad, and David, for their hard work and expertise in developing excellent NILE courses, this is a big achievement for UON’s Learning Technology Team too, as only seven ECP awards went to staff at UK universities, and UON staff won three of them!

A complete list of May’s ECP winners is available at: Blackboard Community – Congratulations to Our Exemplary Course Program Winners

If you’d like to know more about how you can transform your NILE course into an award-winner, or if your NILE course is already brilliant and you’d like to find out how to apply for ECP status or for one of UON’s own NILE Ultra Course Awards, please get in touch with your learning technologist.

Finally, many, many congratulations once again to Carey, Muhammad, and David. ECP status is no small achievement, and we’re very proud to have your award-winning courses on NILE.

Update – May 8, 2026: Please note that the May upgrade is currently on hold as a problem was discovered with it. NILE is not affected by the problem as it was discovered prior to our upgrade, therefore we will remain on version 4000.12 (April 2026) and won’t be upgraded to version 4000.15 (May 2026) until the problem has been fixed. More information is available at: Blackboard Issues with 4000.15.0 Release

Update – May 11, 2026: The 4000.15 (May 2026) upgrade is available as of today, Monday 11 May 2026.

The new features in this month’s Blackboard upgrade will be available from Friday 8th May. This month’s upgrade includes the following new/improved features to Ultra courses:

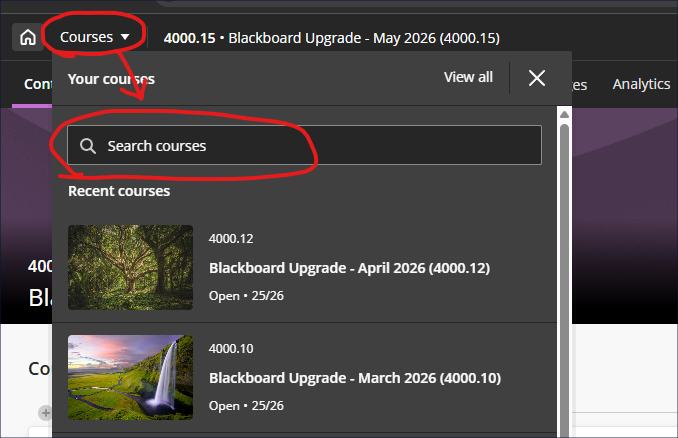

- Improved search and navigation in course switcher

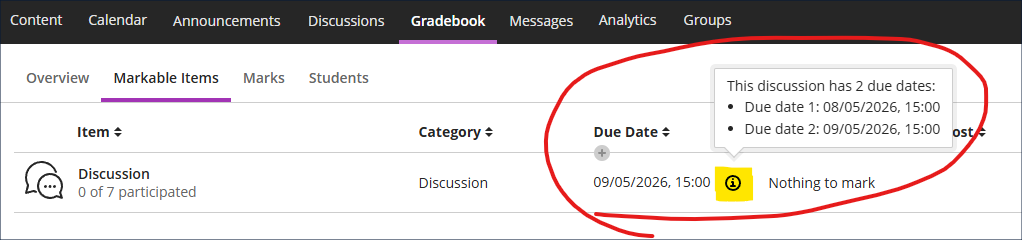

- Second due date for discussions visible in gradebook

Improved search and navigation in course switcher

Following the introduction of the new navigation in the January 2026 upgrade, the May upgrade will bring in an improvement to the course switcher, allowing staff and students to search for courses. At present, the course switcher allows staff and students to move from their current course to one of their four most recent courses (displayed in the ‘Recent courses’ section of the course switcher window). Following the upgrade staff and students will see a search box, making it possible to switch between any two NILE courses without closing the current NILE course and returning to the main NILE courses menu.

Second due date for discussions visible in gradebook

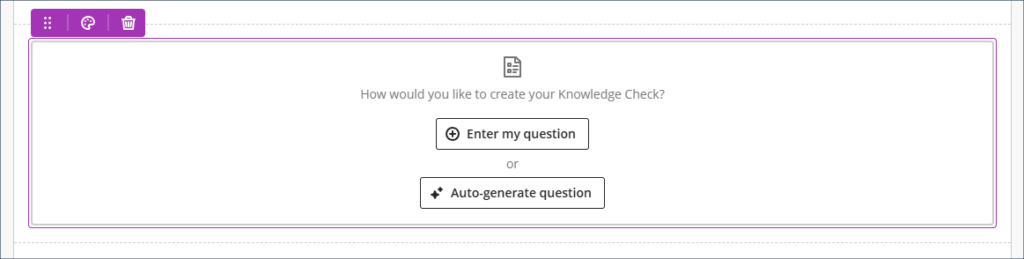

Following the December 2025 and March 2026 upgrades, which, respectively, brought in the options to specify participation requirements and add a second due date when using marked discussions, May’s upgrade will, where a second due date has been set, allow staff to view both discussion due dates in the gradebook.


On 27 March 2026 six online presentations took place and are described below.

1. Creating an Introduction to AI for Students using H5P

Presented by Rob Howe, Head of Learning Technology.

This project outlines the development of a self-paced Introduction to AI resource designed to build students’ confidence, critical AI literacy, and ethical understanding of AI and generative AI in academic contexts. Developed collaboratively using H5P, the course was designed to be flexible, reusable, and accessible both as a standalone web resource and as an embedded activity within NILE modules.

The team focused on creating a centrally maintained resource aligned with University guidance, while allowing academic staff to contextualise its use within their own teaching. Two delivery approaches were supported: a simple web link for easy access, and an embedded version offering student progress tracking and reporting.

The process highlighted both benefits and challenges—particularly around collaborative editing in H5P, quality assurance, and the significant time investment required alongside existing roles. Early feedback from staff and students has been largely positive, identifying the resource as a useful and accessible introduction, while also highlighting the need for more depth, visual content, and clearer guidance on appropriate academic use of AI.

Key lessons from the project include the importance of clear objectives, early planning using curriculum and UDL principles, piloting content extensively, providing strong implementation guidance, and committing to ongoing review. The next phase will focus on more targeted, assignment-specific guidance, supported by staff-led contextual discussions with students.

2. AI use at UON resource for staff (H5P)

Presented by Kelly Lea, Learning Technologist in the Learning Technology team.

The Artificial Intelligence use at UON H5P resource was developed as part of Study Smart Project Part 1. The aim was to provide staff with more information about AI.

Kelly produced a Toolkit. She started the project with a Padlet, which she found good for gathering ideas and for creating a mind map. Kelly then looked at the H5P software and produced two resources for staff:

- Toolkit for staff

- Overview of AI

Using the H5P Course Presentation content type, Kelly started with a blank slide, which became the main menu slide where buttons link out to resources. Conscious of not having information in lots of different places, Kelly could edit this one slide to provide menu buttons that link to each area using hyperlinks. A nice detail is the Information bubble icon, which users can select to read more information.

3. REGAIN – Regulating Ethical Generative AI use in Northampton

Presented by Prof Simon Sneddon (FBL) and Sheryl Mansfield (LLSS)

Problem

Since the rapid expansion of GenAI technology beginning in 2023, HEIs globally have encountered significant opportunities and challenges. GenAI offers vast potential for enhancing student learning and engagement, but also introduces ethical risks such as academic misconduct, over-reliance, and privacy concerns. Project REGAIN was co-created to educate students about responsible and ethical AI use.

The solution is a resource developed using Xerte Online Toolkits:

- Open-source suite of tools developed by the University of Nottingham.

- Browser based tools that are quick and easy to develop.

- Interactive e-tivities with built in accessibility within the software to support content recall and mini assessment.

Further information

REGAIN is an LT-funded bid project which ran up until 2025. The project involved a big team, including assistance from ASSIST and a student who built the resource using Xerte software.

The aim was to produce a package to allow students to explore the use of AI (Artificial Intelligence). Prior to creating REGAIN, a feedback form was distributed to students asking what they wanted to know about AI, and REGAIN is based on that feedback.

Statistics show that REGAIN has had 1300 viewed activities and the overarching Xerte Bootstrap template website UNPAC (University of Northampton Plagiarism Awareness Course) has had 103,723 views.

What next?

Review use of REGAIN at end of 2025/6 and tweak for 2027.

4. The Knee by Pierre Bonnaud, Lecturer in Physiotherapy

The H5P Interactive Book is an engaging online learning resource in which students can participate in a range of activities. Pierre has created seven Interactive Books for the first year Physiotherapy students studying at Level 4 and Level 7 (Level 7 students have added activities for the critical thinking required, e.g. article reviews, extra links for reading etc.). The H5P Interactive Books are part of a broader range of module content, which includes face-to-face teaching, reading lists, online tutorials and practical sessions. This follows the scaffolding of learning, with the Interactive Book being the first step of the learning.

Why H5P Interactive Book?

The Interactive book provides a package of a variety of content rather than separate isolated pieces of content in NILE (Northampton Integrated Learning Environment). The H5P Interactive books created for each week are consistent in style and delivery with a standardised display providing the NEXT and BACK arrow located on the top and bottom right of the screen and a left-hand index. The students work through the Interactive books in a linear way. The students can repeat the learning activities to consolidate their learning further. By using the retry button on the H5P packages they can re-do the activities. Students have verbally mentioned that the Interactive Books are excellent and no negative feedback has been received by Pierre from the students.

This is, Pierre feels, for several reasons:

- A page at the beginning where the list of anatomy is provided so that students have that knowledge at the start (if they do not have that knowledge already).

- Offers a variety of tools and interactions (links to websites and videos, hotspots, drag and drop, interactive video, text entry essay page etc).

- Interactivity.

- Immediate feedback with the ‘Show Solution’ option, without students having to wait.

- From a lecturer’s standpoint, it can be shared with colleagues, and that supports interprofessional work, sharing resources and sharing best practice.

- Students can copy and paste content to create their own learning resources.

Students with dyslexia and ADHD enjoy working with the H5P Interactive Book because of the range of delivery, e.g. images, video, graphics etc. Pierre states that being able to embed a link to a site with information before asking a question is very useful, because the student can refer to that recent information when answering the question which follows.

How long does it take for a student to use?

That can vary depending on whether the student takes part in every activity or whether they prefer to read the ‘Solution’ for feedback and information text. Students have fed back verbally that although the H5P package can be fairly quick to explore, the content within the H5P workbook provides a focused and clear direction for the student to prepare for the week ahead by using the links to reading material, video, websites etc., and this can take up to 4 to 5 hours, in line with the guidelines for self-directed study for the module.

5. Interactive Video

Presented by Jim Atkinson, Organisational and People Development Consultant.

Jim created an interactive video using Adobe Rush and Kaltura MediaSpace, supported by Richard Byles in the Learning Technology team.

Background

Jim completed a Digital Learning Design (DLD) Level 5 apprenticeship in 2025. Jim’s final piece of work for the Project was a piece of e-learning called Environmental and Sustainability Induction for UON staff. Jim worked with Hollie Darby in the Environment and Sustainability team to produce the relevant content for the e-learning. Included in the e-learning is an interactive video.

Pre-production

Jim wanted to include a video with quiz questions in the category ‘Waste Management and Recycling’ and approached Tony Routhorn, the UON Waste Operative, to present in the film. The planning, creating the questions, organising diaries etc. took about an hour.

Production

Jim used a Samsung S10 Lite, an Android phone that came out in 2019. He used the phone’s built-in microphone, which he states was okay. Richard Byles, a Learning Technologist, was Director of Photography. The filming took about two hours.

Post-production

Jim used Adobe Rush to edit the clips together and included graphics. It was Jim’s first use of Adobe Rush but with a 15-minute lesson from Richard Byles and guidance from LinkedIn Learning, Jim upskilled himself to edit the video. The editing took about 2-3 hours.

Adding the quiz questions

The completed film was uploaded to Kaltura MediaSpace, the University of Northampton media platform, where the quiz questions were added to the video.

Outcome

Jim received a distinction for the project and passed his apprenticeship.

Further comments

Jim states: “It was windy on the day, so we tried to find somewhere sheltered (which was alongside the Engine Shed). Some footage of interview questions in another location I just couldn’t use due to wind/audio issues”.

Reflection

Jim states that he really enjoyed creating the video and particularly the creative aspect of it all and found it a lot easier to do than he thought.

6. Student Supervisor Interaction Log (H5P)

Presented by Lee Machado, Professor of Molecular Medicine.

Background

Lee has taken over a module, SLSMO13, a 60-credit Dissertation Module on the taught MSc in Molecular Medicine. This involves lab-based research from February to July 2026. The thesis submission is in September 2026. The Royal Society of Biology re-accreditation was received by Lee on 27 March 2026. This wasn’t the rationale for making the log, but it is pertinent, as RSB accreditors in their pre-accreditation report are asking how we track supervisor-student interaction. The catalyst for the resource was that Lee wanted to make sure that students were being supported by supervisors, and that progress and actions were being effectively captured during the course of the research project.

How did Lee know to use H5P?

Lee asked AI with a prompt “What is the best approach to develop an interaction log in Blackboard?”. AI provided an answer suggesting use of the H5P Interactive Book. Lee did try other tools/software but decided to use H5P Interactive Book.

How it works

The Student Supervisor interaction log consists of the H5P Essay content type within the Interactive Book. The Essay content type allows for text entry. It is engaging and clear. The students must submit (green submit icon on the last page) to log the text.

Benefits of the resource for the student are:

- Structured sequence.

- Make expectations clear.

- Engages.

- Guided reflective record.

Benefits for the staff creating the resource are:

- Add Collaborators.

- Student – Supervisor log prompts.

- Reports are available to the staff to see what the students have written.

- The Reports can be downloaded as a CSV file.

Summary of logs for each student

Once a week submit a log. As this is the first year we have not been too prescriptive about frequency but yes weekly logs are helpful.

Conclusions

Are students engaging with supervisors?

Reply: Variable.

What is the quality of the supervision?

Reply: Harder to determine.

Are students clear on actions assigned to both student and supervisor?

Reply: Need better entries.

Are students at different levels and support?

Reply: Yes and Yes.

Summary of the Worthy Online Work event

Summarising the event, Rob Howe, Head of Learning Technology, states: The Worthy Online Work event showcased a range of short, practice-focused demonstrations highlighting innovative uses of interactive technologies to support learning and student engagement.

The sessions showed the value put on support, learning and teaching by academics (every Faculty was represented) and professional services staff. Sessions covered introductions to AI for students, subject-specific learning activities, interactive video, and tools to enhance supervision and feedback. A key highlight showcased in working with students was the ‘REGAIN’ project, which emphasised meaningful student involvement in the design and delivery of digital learning resources.

Collectively, the Worthy Online Work sessions illustrated how tools such as H5P, Xerte and video tools were being used to create engaging, inclusive, and student-centred learning experiences throughout the University of Northampton. If you would like further information about the projects presented, you can contact the staff directly via email.

Anne Misselbrook, who put the event together, concludes that it is satisfying to see her idea come to fruition. Anne adds that training is available on H5P (HTML5 package), Xerte Online Toolkits, Record and Edit Video and Content Development.

The new features in this month’s Blackboard upgrade will be available from Friday 3rd April. This month’s upgrade includes the following new/improved features to Ultra courses:

- Specify assignment submission type with Blackboard assignments

- Add conversation constraints to AI conversations

- Upgrades to Collaborate (available from 16th April)

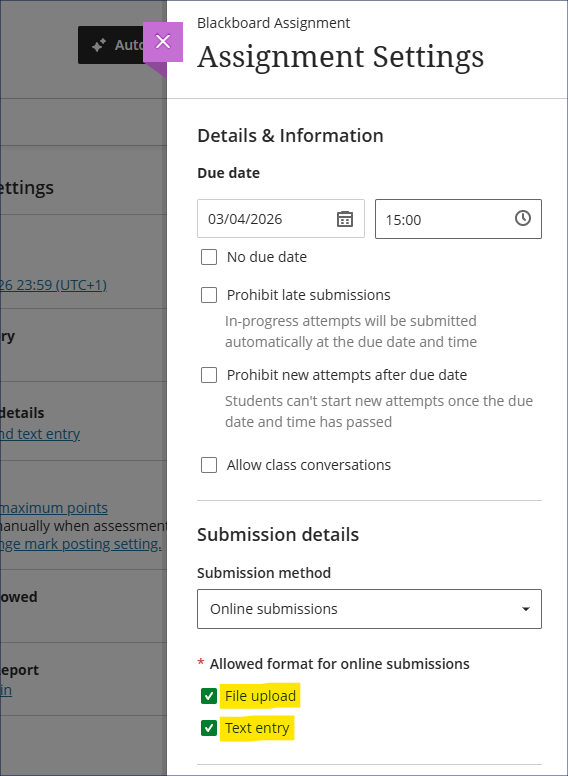

Specify assignment submission type with Blackboard assignments

Following Friday’s upgrade, staff setting up Blackboard assignments will be able to specify whether students can upload a file, submit a text entry, or both. The default setting is with both options enabled, and we recommend leaving both enabled as this means that students will not be prevented from submitting anything they can currently submit. The new file upload block makes it considerably easier for students to submit files to Blackboard assignments, and they can submit one or many files in the same submission.

Important note for Kaltura submissions.

Please be aware that for students to be able to submit Kaltura videos for assessment, the ‘Text entry’ box must be ticked, because it is this box that provides the ‘+’ (plus) button, which allows students to access the Content Market within which Kaltura is situated. Where staff want students to submit both a Kaltura video and supporting documents, both boxes must be ticked.

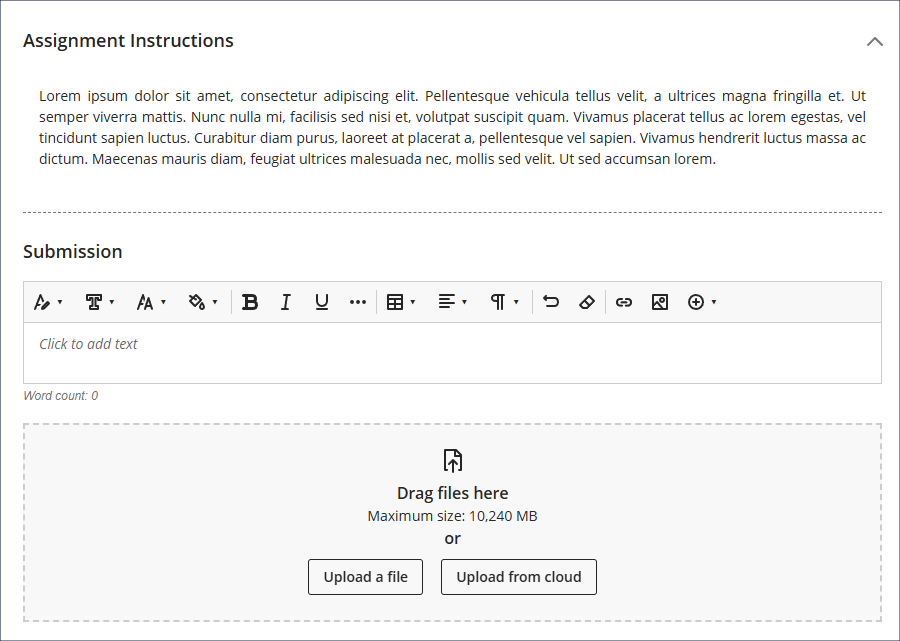

The student view of the updated assignment submission boxes is shown below, with the ‘Text entry’ box immediately below the assignment instructions, and the ‘File upload’ box at the bottom. While the ‘Upload from cloud’ button will be visible to students, it is not functional, and students need to use the ‘Upload a file’ button to attach files to their submissions. As is currently the case, files must be less than 1GB in size, and media files must be submitted using Kaltura. See our FAQ ‘Why can’t I upload my file to NILE?’ for more information.

More information about setting up and using Blackboard assignments is available from: Learning Technology Team – Ultra Workflow 2: Blackboard Assignment

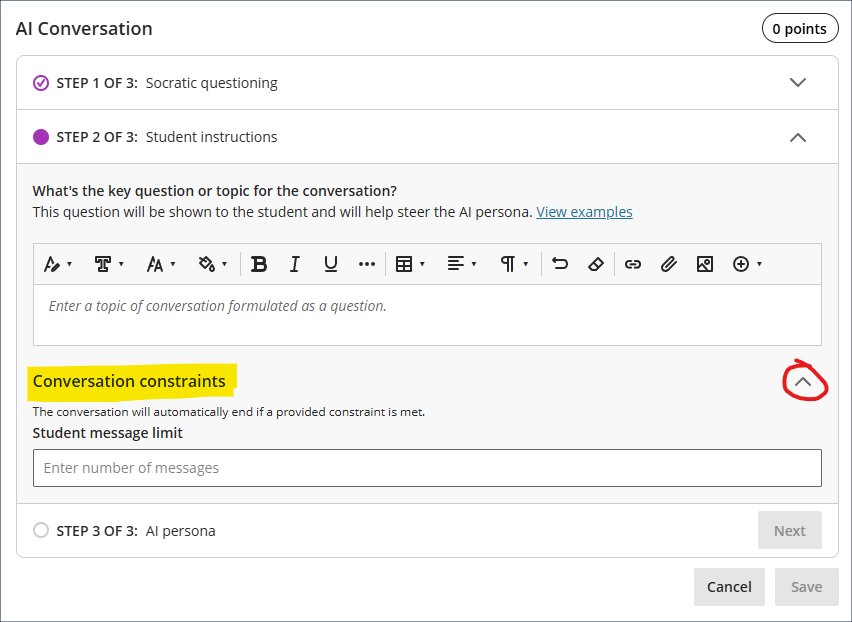

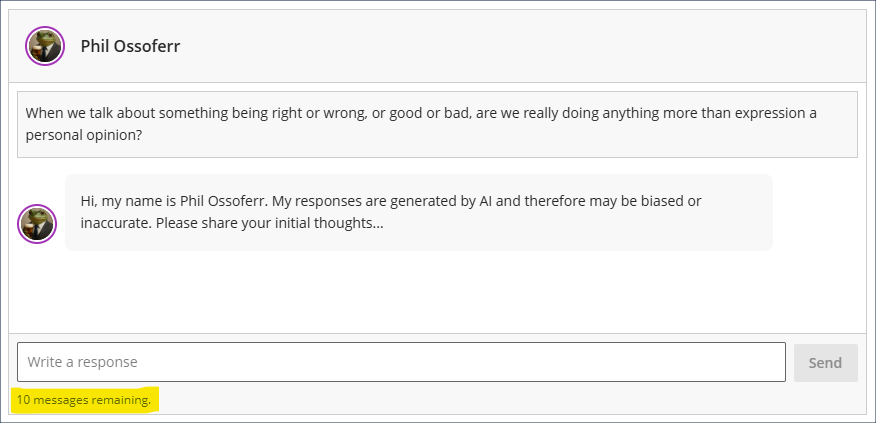

Add conversation constraints to AI conversations

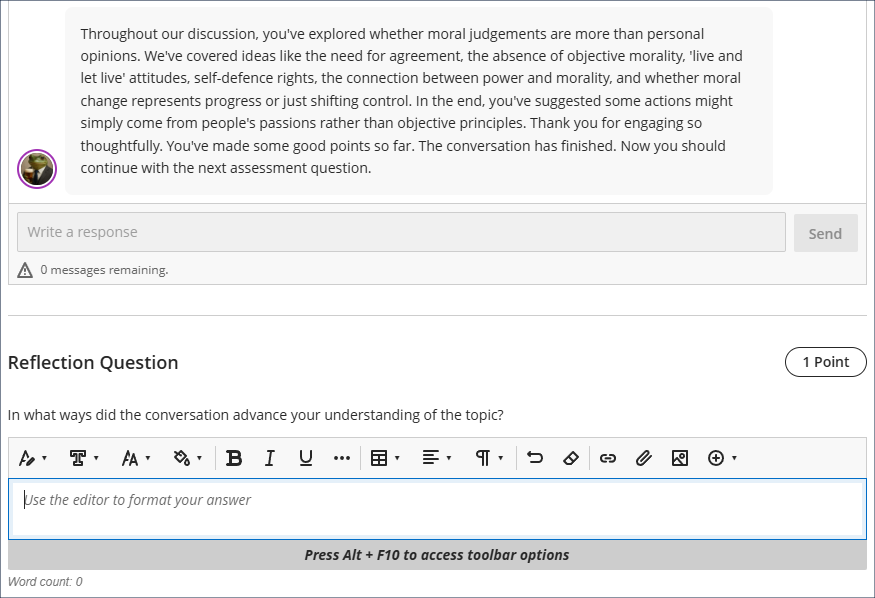

April’s upgrade will allow staff to add limits to AI conversations. In response to feedback about the open-endedness of AI conversations, staff can now optionally specify how many responses a student can make. Students will not be able to go beyond this message limit, but can still submit before the message limit is reached. If message limits are specified students will see this, and will be kept updated as to the number of messages remaining. Upon submission of their last message, the AI persona will summarise the discussion, thank the student for their responses, and will prompt them to complete the reflection question.

More information about using AI conversations is available from: Blackboard Help – AI Conversation

Upgrades to Collaborate (available from 16th April)

From the 16th of April the following new features will be available to all Collaborate users:

Additionally, moderators of Collaborate sessions will be able to make use of the following new features:

*Please note that auto-generated subtitles are never 100% accurate therefore will not be suitable for a student with an Academic Inclusion Report (AIR) who requires provision of completely accurate captions and/or transcripts. More information about captioning requirements for Collaborate sessions (and other audio and video content) is available from: Learning Technology Team – Accessibility in NILE, Captioning Collaborate lectures, Kaltura recordings, and other video and audio content


On Saturday 14th March 2026 the Learning Technology Blog will be 15 years old! With 646 posts published during that time (including this one), we’ve averaged 43 posts a year. Fittingly enough, our first post was written by Head of Learning Technology, Rob Howe, and Rob H still leads both the team and the blog scoreboard with a total of 258 posts on the LearnTech Blog. Yours truly makes it into second place with 114 posts, and our wonderful ex-colleague, Julie, is in third place with 76 posts.

The origin story of the Learning Technology Team and NILE goes back to 1st August 1997 when Rob H was appointed as the University’s first Learning Technology Adviser. Jump forward to 18th May 1999, and you have the beginnings of NILE, when, as he was by then, the Head of Academic IT Services, Rob H, wrote the discussion paper ‘Proposed Roadmap for NILE (Northampton Integrated Learning Environment)’. The Learning Technology Team as it is now formally began life on 1st September 2007 and since that time the team and its individual members have won various awards, and have benefitted from and enjoyed the brilliance and expertise of many fine people, twenty-six of whom have gone on to pastures new, but none have been forgotten. So, take a bow, Adel, Andy, Belinda, Cleo, Craig, David, Dom, Doreen, Gemma, Gez, Iain, Izzy, Jim & Jim, Julie, Kalina, Kerry, Kieran, Nicola, Omar, Rachel, Rachel, & Rachel, Rob, Simon, & Vicky. The team now comprises ten outstanding and highly-skilled colleagues who have made the University’s Learning Technology Team their home. So, take a bow too, Al, Anne, Kelly, Liane, Richard, Rob & Rob, Sean, Sharon, & Tim.

Over the years we’ve seen many tech fads, booms, threats and disruptors come and go. Some have been supposed panaceas (they weren’t), others have been on the verge of destroying the very fabric of education (they didn’t) – although often the same things were both. Whether the tech on offer was digital gold or, to quote the title of Tara Brabazon’s book, digital hemlock, it mostly depended on your perspective. Back in 1999 when Rob H wrote his discussion paper inaugurating NILE, it was the internet that was going to destroy learning, as Brabazon explained in her 2002 book, Digital Hemlock: Internet Education and the Poisoning of Teaching. In 2001 the perennially unhelpful term ‘Digital Natives’ was coined, and you can read more about that term on the educational no-go zone that also started life in 2001, Wikipedia. Around 2007 there was a lot of interest in Second Life, and much money was spent (although not by us) on exploring whether it would be, as the Guardian headline put it, the ‘Campus of the future’. Also in 2007 Martin Weller famously (well, it was famous in learning technology circles) announced the death of the VLE. (Although if you’re wondering what we’re still doing with a VLE you can check out his 2024 post, Things I was wrong about pt2: The Death of the VLE.) Starting in 2008, but really getting going by around 2012/13, MOOCs (Massive Open Online Courses) were causing ‘the ivory towers of academia [to be] shaken to their foundations’. (By the way, anyone remember cMOOCs and xMOOCs?) Then in 2014 a paper by Freeman, et al., got lots of people thinking about active learning, and in 2015 we were all quite excited about the flipped classroom (well, I was). Folks who remember teaching at UON’s Park and Avenue campuses (cue nostalgic sighs) may remember using classroom response systems (a.k.a. clickers, and yes, they were a big faff to set up and use), and SmartBoards (yes, they were far too small, weren’t they).
Also, around this time there were staff doing interesting things with iPods and iPads, as shown in our video Mobile Learning in Art & Design, and in the book Teaching with Tablets by UON innovative educator, Helen Caldwell, and James Bird. Others were doing interesting educational things on Twitter (back in the day, before it went bad), and for far too long everything had an ‘e’ in front of it, which made it seem cool and relevant, but now just makes it feel very dated and a bit sad. For more edtech nostalgia, check out VLE obituarist Martin Weller’s 25 Years of EdTech.

So what tech tools are coming along to disrupt, enchant, or destroy us these days? Well, we’ve all been enjoying the marvel that is Blackboard Ultra and revelling in its superiority to Blackboard Original (come on, you know you have). And the different realities, AR/VR/MR/XR (augmented reality, virtual reality, mixed reality, extended reality), have been well utilised in some specialist areas, but are yet to take off in a big way across HE, although English Lit grads will need no explanation as to why that is; after all, ‘human kind / Cannot bear very much reality’ (cue groans from T.S. Eliot fans, and nonplussed expressions from everyone else). Educational neuroscience appears to be a very exciting field with a great deal to offer, but today’s major topics of interest undoubtedly centre around artificial intelligence, and learning (or learner) analytics – and perhaps both together at some point, as it figures that there will be an AI-powered learning analytics system on offer soon. On the subject of AI in education, UON innovative educators David Meechan and Jane Mills have been making some fascinating inroads. David has kindly written a book called Generative AI for Students, which will help students to use AI sensibly in their studies without destroying themselves or humanity in the process. And Jane is leading the way on AI in art and design, and particularly in fashion, as you can see in her excellent talk Exploring the Fusion of Fashion and Artificial Intelligence. But to correct for bias it should be pointed out that not everyone is in favour of AI in education, quelle surprise, so cue Your Brain on ChatGPT and cut to Private Frazer saying ‘we’re doomed’. Fortunately, as well as the Learning Technology Team, the University now has its own Centre for Active Digital Education to help steer a course through the edtech mud? maze? swamp? wasteland? – take your pick – and help us figure out the useful tech from the useless fads.

So, to wrap up what was meant to be a short blog post, but, master of verbosity that I am, has already become far too long, Happy Birthday to the Learning Technology Blog, and a big thanks to all our readers out there (which I know sounds very grandiose, but during the 24/25 academic year alone we had a total of over 27,000 post views, so we must have one or two readers). And a special big thanks to anyone who actually got all the way to the end of this particular post: it was a bit TL;DR wasn’t it.

The new features in this month’s Blackboard upgrade will be available from Friday 6th March. This month’s upgrade includes the following new/improved features to Ultra courses:

- Generate knowledge checks in Ultra documents with AI Design Assistant

- Add a second due date and participation requirements in discussions

- Partial credit limits removed for multiple option test questions

- Blackboard test question title field relocated

Generate knowledge checks in Ultra documents with AI Design Assistant

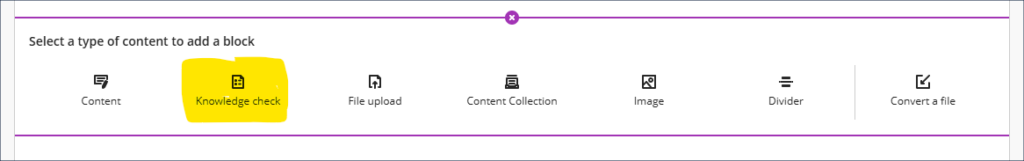

Staff using the knowledge check tool to add interactive quiz elements into their Ultra documents will find, following the March upgrade, that knowledge check questions can be auto-generated. The current option to create questions manually still exists via the ‘Enter my question’ option, but a new ‘Auto-generate question’ option will be in place from Friday. This new option will auto-generate four questions based on the content in the Ultra document (although staff can use the ‘Select course items’ option to broaden the range of material on which the AI Design Assistant bases the questions), and from these four options staff can choose one to add, editing it beforehand should they wish to.

More information about creating Ultra documents can be found at: Blackboard Help – Documents

More information about the AI Design Assistant can be found at: Blackboard Help – AI Design Assistant

Add a second due date and participation requirements in discussions

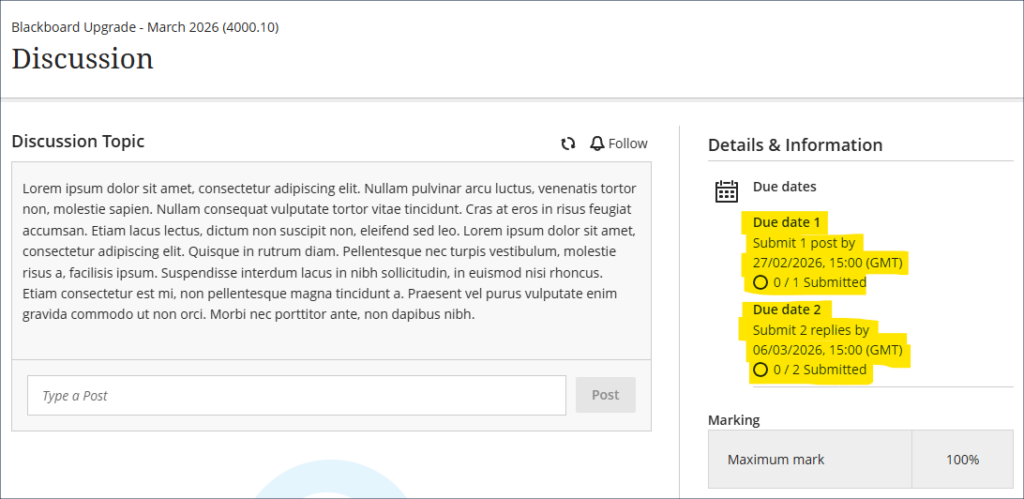

Following the December 2025 upgrade which introduced the option to specify participation requirements in discussions, the March upgrade will bring in the option to specify a second due date when using discussions, along with a second set of participation requirements. An example of how this might be used is shown in the screenshot below, where students are required to make a single post, and then, a week later, to have made two replies.

The participation requirements will be displayed to students when they view the discussion, and the progress tracker will show them when they have met the minimum requirements.

Setting participation requirements does not affect any gradebook settings. For example, if a student has not met the minimum participation requirements this will not affect whether or not the discussion can be marked.

More information about setting up and using discussions is available from: Blackboard Help – Discussions

Partial credit limits removed for multiple option test questions

The March upgrade will see some restrictions removed from the percentages that can be awarded for partial and negative credit-enabled multiple option and multiple choice test questions.

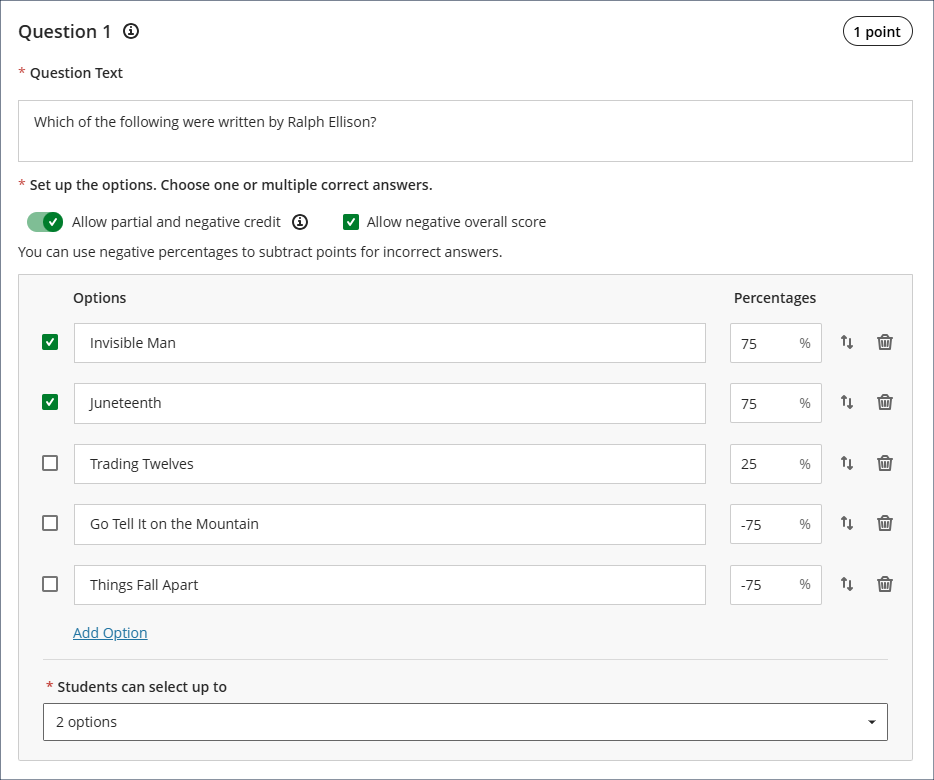

1. Multiple option test questions

A multiple option question, also called a multiple answer question, is a question where students are presented with a number of possible answers and can select more than one answer. Multiple option questions can have more than one correct answer, but it is not a requirement for a multiple option question to have more than one correct answer.

Currently, the following are prohibited when setting multiple option questions:

- Awarding a total percentage for all the correct answers that is not equal to 100%.

- Awarding a positive percentage for an incorrect answer.

Following the March upgrade, staff setting multiple option questions will be able to:

- Where there is more than one correct answer, award a total percentage for the multiple correct answers that is equal to or above 100%. (Where there is only one correct answer to a multiple option question, the correct answer can only have a value of 100%.)

- Award a positive percentage for an incorrect answer.

Unchanged are the following:

- The combined score for the correct answers cannot be less than 100%.

- A correct answer cannot receive a negative percentage.

- No single answer can score more than 100% or less than -100%.

An example of how this could work is shown in the screenshot below. In the question the maximum and minimum scores are equal in magnitude, so students can score between -150% and 150% on this test question. A small positive percentage is allowed for one of the incorrect answers because, while it is not a fully correct answer, it is not as incorrect as the other two incorrect answers. Note that the correct answers are the ones marked with a tick in the box to the left of the answers.
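For anyone who likes to see rules written down precisely, the post-upgrade rules for multiple option questions listed above can be sketched as a small check. This is only an illustration, not how Blackboard itself works: the `Answer` class, the `check_multiple_option` function, and the example percentages are all made up for this post.

```python
from dataclasses import dataclass


@dataclass
class Answer:
    label: str       # hypothetical answer label, e.g. "A"
    percent: float   # percentage entered for this answer
    correct: bool    # True if the answer is ticked as correct


def check_multiple_option(answers):
    """Return a list of rule violations (empty if the setup is allowed)."""
    problems = []
    for a in answers:
        # No single answer can score more than 100% or less than -100%.
        if not -100 <= a.percent <= 100:
            problems.append(f"{a.label}: must be between -100% and 100%")
        # A correct answer cannot receive a negative percentage.
        if a.correct and a.percent < 0:
            problems.append(f"{a.label}: a correct answer cannot be negative")

    correct = [a for a in answers if a.correct]
    total = sum(a.percent for a in correct)
    if len(correct) == 1 and total != 100:
        # A single correct answer can only have a value of 100%.
        problems.append("a single correct answer must be worth exactly 100%")
    elif len(correct) > 1 and total < 100:
        # Multiple correct answers must total 100% or more.
        problems.append("correct answers must total at least 100%")
    return problems


# Illustrative values only: two correct answers totalling 150%,
# and a small positive percentage for a 'nearly right' incorrect answer.
example = [
    Answer("A", 75, True),
    Answer("B", 75, True),
    Answer("C", 10, False),
    Answer("D", -75, False),
    Answer("E", -75, False),
]
print(check_multiple_option(example))
```

Note that a positive percentage on an incorrect answer (answer C here) is not reported as a problem, because following the March upgrade it is allowed.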

2. Multiple choice questions

A multiple choice question is one where students are presented with a number of possible answers and can select only one answer.

When setting up a multiple choice question the correct answer must still equal 100%. However, following the March upgrade the incorrect answers in a multiple choice test can score anywhere between 100% and -100%, whereas prior to the upgrade incorrect answers could only score between 0% and -100%.

Advice when setting up multiple option/choice questions

Please note that while these changes allow for more flexibility, particularly when setting multiple option/choice questions, it does now require more care to be taken when using partial and negative credit scoring, not least because staff will no longer be required to enter a negative score for an incorrect response, so there is now nothing preventing a question from awarding as many, or even more, points for getting the answer wrong as for getting it right. To award a negative score for an answer, staff must add a minus in front of the percentage, as, following the upgrade, this will no longer be done automatically or enforced, and there are no warnings given when positive percentages are entered for incorrect answers. Please do not assume that the percentages awarded for incorrect responses are the percentages being deducted. They are only being deducted if there is a minus in front of the percentage.
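The point about minus signs can be illustrated with a short sketch. It is not part of Blackboard; the function name `describe_incorrect` and the percentages are invented for this example, and it simply spells out what each percentage entered for an incorrect answer would do to a student's score.

```python
def describe_incorrect(percentages):
    """Describe the effect of each percentage entered for an incorrect answer.

    `percentages` maps a hypothetical answer label to the value typed in.
    """
    notes = {}
    for label, percent in percentages.items():
        if percent < 0:
            # Only values with a minus sign are deducted.
            notes[label] = f"deducts {abs(percent)}%"
        elif percent > 0:
            # No minus sign: the student GAINS marks for a wrong answer.
            notes[label] = f"AWARDS {percent}% (no minus sign entered)"
        else:
            notes[label] = "no effect"
    return notes


# Answer C was meant to be a deduction but the minus sign was forgotten.
print(describe_incorrect({"C": 25, "D": -25}))
```

Running a quick check like this by eye against each question, before the test goes live, is one way of catching a missing minus sign.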

While there is now more flexibility (and complexity) in this area, staff do not have to use partial and negative credit when setting up multiple option/choice questions. However, staff who would like to make use of partial and negative credit scoring but who are not confident to do so are welcome to contact their learning technologist to arrange a training session about how these features work.

More information about setting up Blackboard tests is available from: Learning Technology Team – Ultra Workflow 3: Blackboard Test

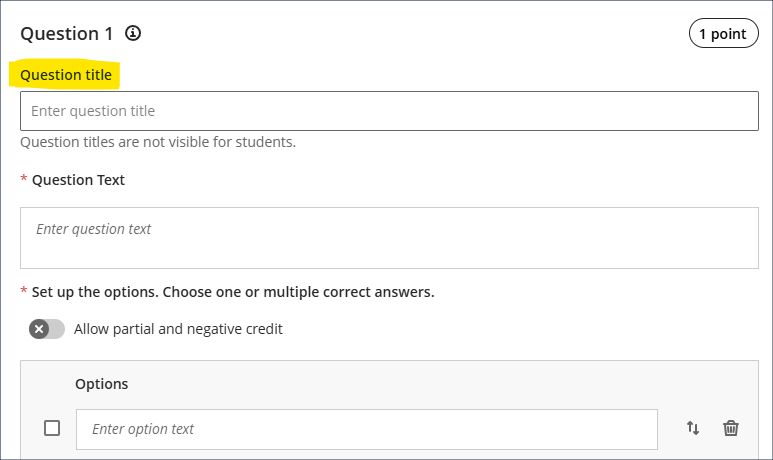

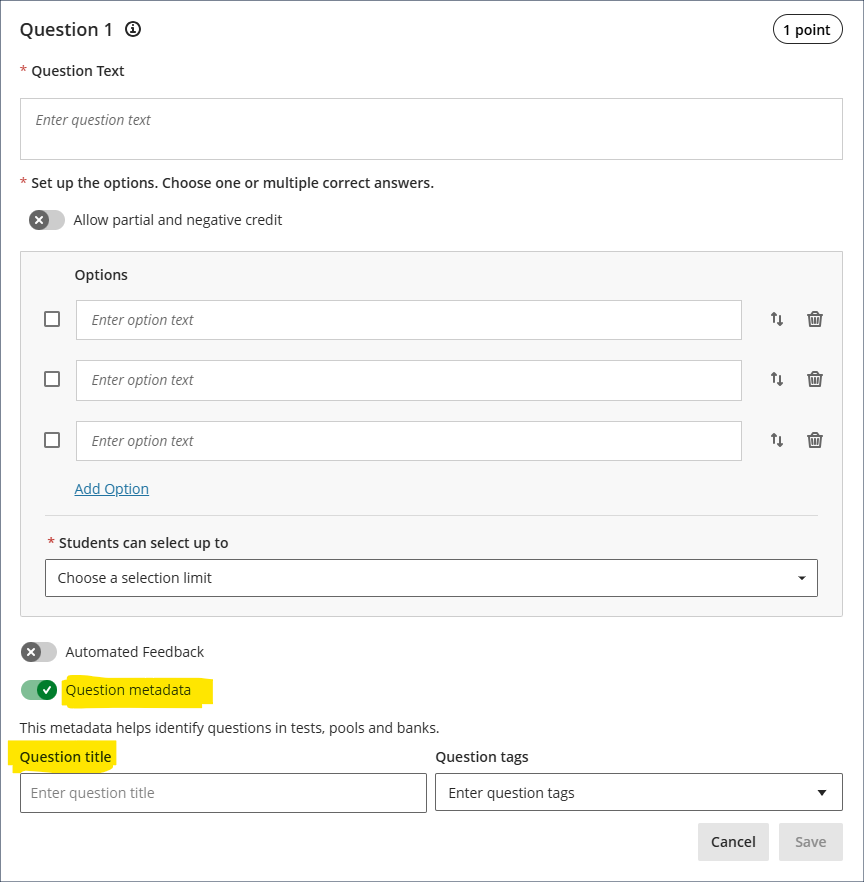

Blackboard test question title field relocated

In the September 2025 upgrade Blackboard introduced the option to add titles to test questions. These question titles are optional, not visible to students, and are designed to help staff locate previously created questions for reuse in other tests. Following requests from staff, many of whom found the prominent placement of the question title field to be unhelpful, the March upgrade will see the question title field moved to the question metadata section of the question setup options, which more accurately reflects its purpose.

Learning technology / NILE community group

Staff who are interested in finding out more about learning technologies and NILE are invited to join the Learning Technology / NILE Community Group on the University’s Engage platform. The purpose of the community is to share information and good practice concerning the use of learning technologies at UON. When joining the community, if you are prompted to log in please use your usual UON staff username and password. By joining the Learning Technology / NILE Community you will receive calendar invitations to our regular live community events:

Join the Learning Technology / NILE Community Group

More information

As ever, please get in touch with your learning technologist if you would like any more information about the new features available in this month’s upgrade: Who is my learning technologist?

The new features in this month’s Blackboard upgrade will be available from Friday 6th February. This month’s upgrade includes the following new/improved features to Ultra courses:

- Automations

- Ultra document enhancement

- Convert a Word/PowerPoint/PDF file to an Ultra document

- Ultra Course Awards 2026

- H5P, Xerte, Video Showcase – Call for presenters

Automations

Automations allow staff to configure triggers that prompt NILE to email students automatically when certain criteria are met. Following the upgrade staff will be able to set up automatic congratulatory emails for students who have achieved above a specified threshold on assessments, and to send supportive emails for those who have scored below a specified threshold. Staff can also send reminder emails to students who have unread feedback, although please note that this applies only to Blackboard assignments, not Turnitin assignments.

Note that congratulatory/supportive automations are only triggered by posted marks in the gradebook. Unposted marks will not activate the trigger. For example, if a student takes a formative test and receives their mark immediately upon completion of the test, an automation linked to completion of that formative test would be triggered immediately. However, if a student submits a manually marked assignment and receives a grade for it, an automation linked to that assignment will not be triggered when the mark is entered, only when the mark entered is actually posted.
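The trigger logic described above can be summarised in a few lines. This is a sketch of the behaviour as described in this post, not Blackboard's own code: the function name `automation_email` and the threshold values of 70 and 40 are assumptions chosen purely for illustration.

```python
from typing import Optional


def automation_email(mark: float, posted: bool,
                     high: float = 70.0, low: float = 40.0) -> Optional[str]:
    """Return which automated email (if any) a mark would trigger.

    `high` and `low` stand in for the thresholds staff specify;
    the default values are hypothetical.
    """
    if not posted:
        # Unposted marks never activate the trigger.
        return None
    if mark > high:
        return "congratulatory"
    if mark < low:
        return "supportive"
    return None


# A formative test mark released immediately counts as posted.
print(automation_email(85, posted=True))
# A manually marked assignment: the mark is entered but not yet posted.
print(automation_email(85, posted=False))
```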

For more information about automations see: Blackboard Help – Automations

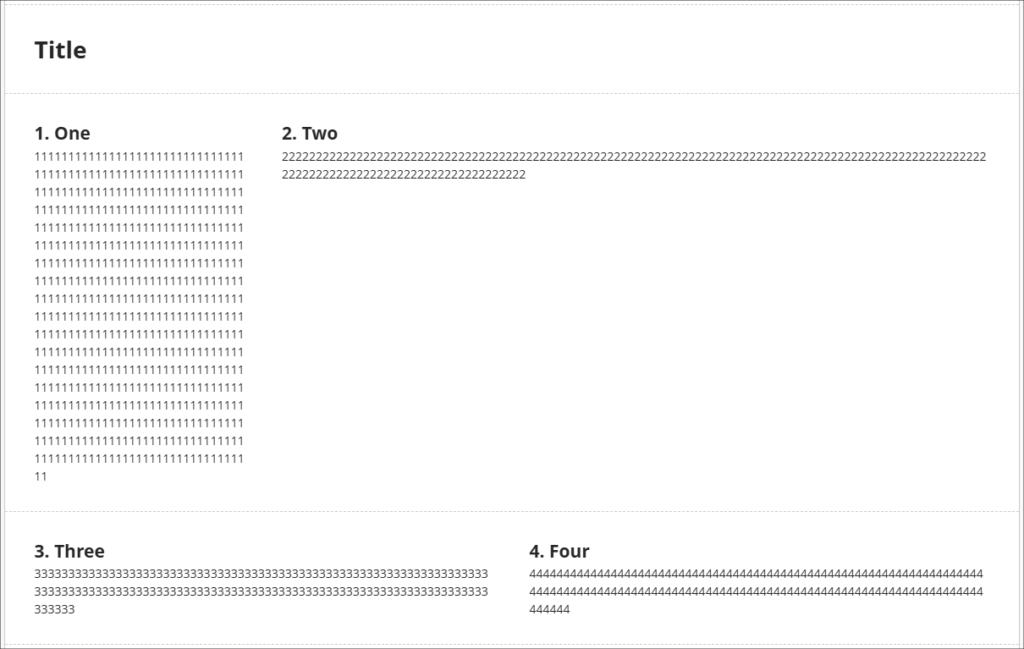

Ultra document enhancement

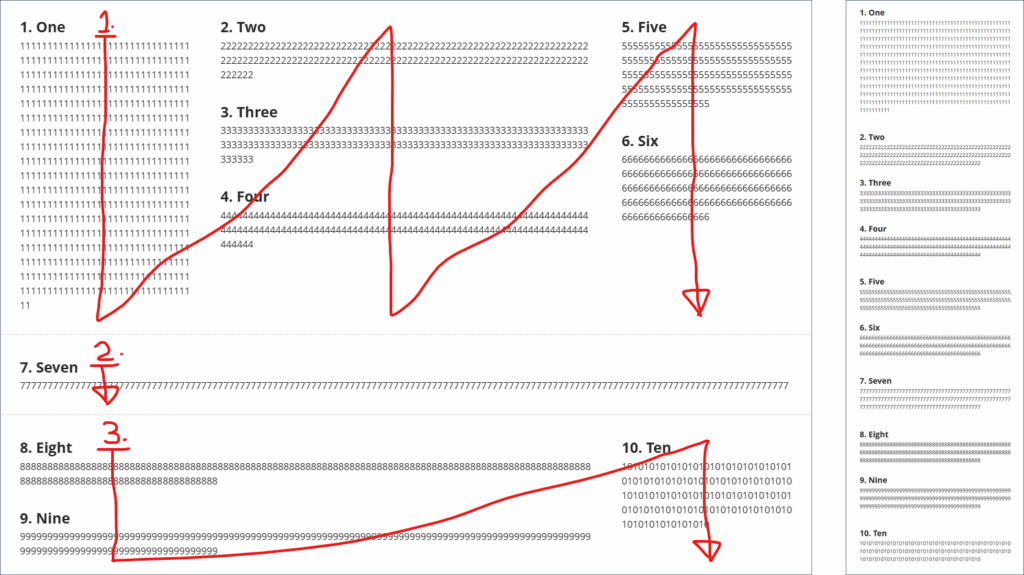

February’s upgrade includes an enhancement to Ultra documents entitled ‘Stack blocks vertically in documents’. How this improves Ultra documents is as follows. Currently, if you have made use of multiple columns in an Ultra document you may have found that each column can only contain one block. In the following example you can see that the first row of my Ultra document (which contains the blocks titled ‘1. One’ and ‘2. Two’) has been divided into two columns which take up, respectively, 1/4 and 3/4 of the horizontal screen space. This has left me with a lot of space underneath block ‘2. Two’, but if I want to move any blocks underneath ‘2. Two’, I cannot do this as a column can only contain one block. I can, of course, take the text from other blocks and copy and paste it into the ‘2. Two’ block to fill up the space, but this is time-consuming, and also frustrating if I just want to try out some different layout options.

The ‘Stack blocks vertically in documents’ upgrade means that a column can contain multiple blocks, so after the upgrade I can now fill the space under ‘2. Two’ with additional blocks by dragging and dropping them in place.

However, do bear in mind that once in a column together the blocks will share the same screen width. So, if I adjust the width of block ‘2. Two’ I will also adjust the width of all of the blocks in that column.

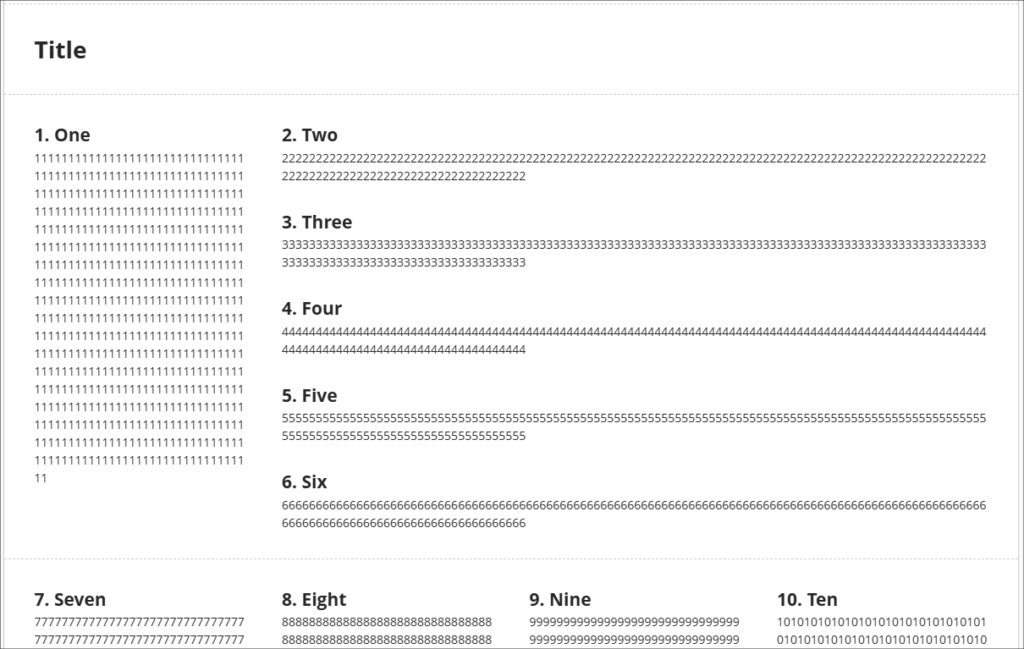

It is also important to bear in mind that while your layout choices will be respected when students view Ultra documents on their laptops/desktops, on mobile devices the blocks will resize and flow into a single column, so instructions such as ‘immediately above/below’, or ‘to the left/right of’ will not necessarily make sense to all users. So, for example, rather than referring to an image as being on the right, it would be better to title the image (e.g., Fig. 1) and refer to the image by its title rather than by its relative position.

In the following screenshot you can see how students view the same page on a laptop (shown on the left) and on a mobile device (on the right).

In case it’s helpful to know, when using multiple columns, the blocks always reflow in a simple, predictable manner, from the first row, first column, first block, i.e., the block in the top left position, down the column if there are multiple blocks in the column, but not crossing into the next row, then to the next column(s) on the right in that row. Once there are no more blocks in the row, the next row and the columns therein are selected, and so on. The following (rather roughly annotated) image shows an example of how an Ultra document with a complex layout on a laptop (the image on the left) will reflow when viewed on a mobile device (the image on the right).
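For those who find it easier to read the reflow order as code, here is a minimal sketch of the rule described above. It assumes a document is represented as a list of rows, each row as a list of columns, and each column as a list of blocks; that representation, the function name `mobile_order`, and the block names are all invented for this example.

```python
def mobile_order(rows):
    """Flatten rows -> columns -> blocks into the single-column mobile order."""
    order = []
    for row in rows:                # rows are taken from top to bottom
        for column in row:          # columns from left to right within the row
            for block in column:    # blocks from top to bottom within the column
                order.append(block)
    return order


# Hypothetical layout: row 1 has two columns, the first holding two
# stacked blocks; row 2 has a single full-width block.
document = [
    [["A", "B"], ["C"]],
    [["D"]],
]
print(mobile_order(document))  # ['A', 'B', 'C', 'D']
```

So block B, stacked under A in the first column, appears before C on a mobile device, even though C sits alongside A on a laptop screen.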

If you’d like to see how this works, you can view a video demonstration here: Blackboard Upgrade – February 2026 – Stack Blocks Vertically in Columns in Ultra Documents (video, 4m 22s)

More information about using Ultra documents is available from: Blackboard Help – Documents

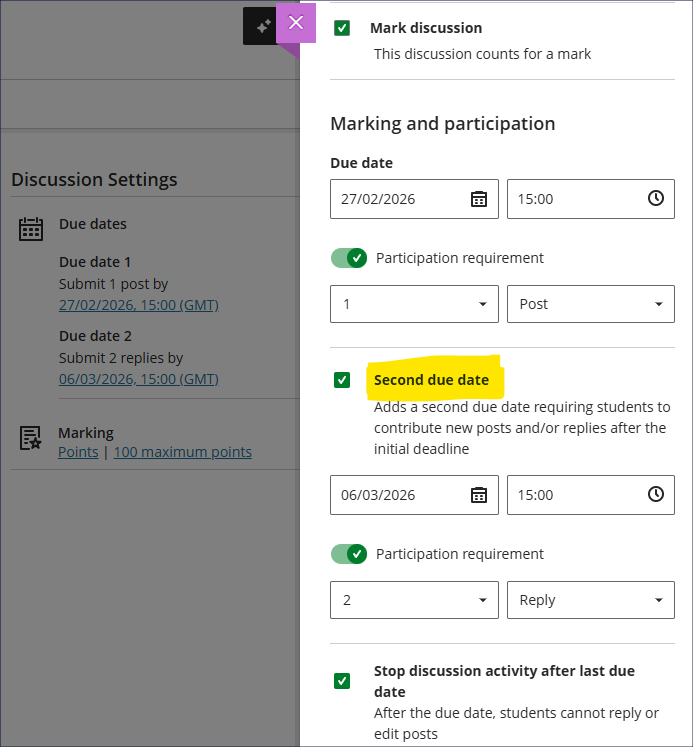

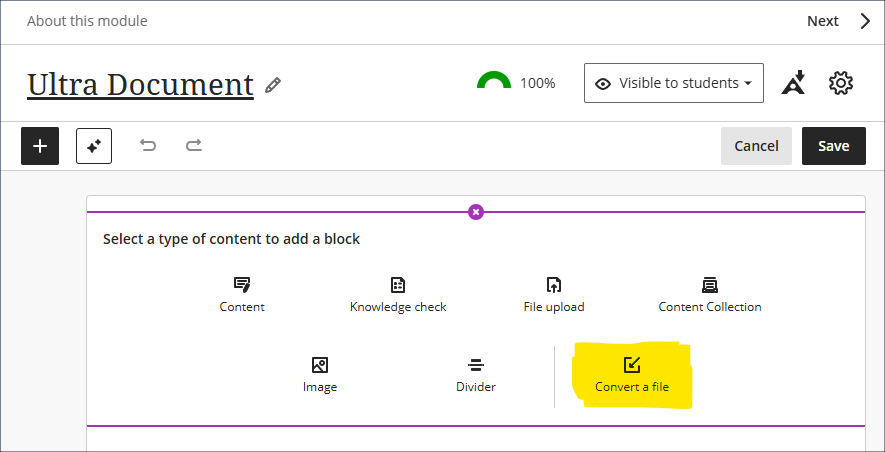

Convert a Word/PowerPoint/PDF file to an Ultra document

Okay, so this one isn’t actually new, but it’s pretty good and I get the impression that not too many people know about it, so here it is again. Long story short – you have various Word, PowerPoint, and PDF files in your Ultra course, and you’d really like them to be Ultra documents* but you don’t have the time or the patience to laboriously copy and paste everything into Ultra documents, so wouldn’t it be great if there was the option to auto-convert Word/PowerPoint/PDF files to Ultra documents …

*The key reason that you’d really like them to be Ultra documents, of course, is that this not only makes them look better, but also makes them much more accessible and mobile friendly, and really easy for students to access in alternative formats … and, yes, students can still download and print Ultra documents.

Ultra Course Awards 2026

Have you put together a great NILE Ultra course for 2025/26? Or do you know someone who did?

We’re really keen to highlight and celebrate examples of good practice with Ultra, so if you or someone you know has designed a good Ultra course we’d really like to hear from you.

You can nominate yourself, or someone else, or multiple members of staff if the Ultra course has been created by more than one person. In your nomination we just need to know who it is that you’re nominating, which module the nomination is for, and what it is that you think has been done well. And you don’t have to tell us who is making the nomination if you don’t want to.

Nominated courses will be reviewed and Ultra Course Awards will be given according to the following criteria:

- The course follows the NILE Design Standards for Ultra Courses;

- The course is clearly laid out and well-organised at the top level via the use of content containers (i.e., learning modules and/or folders);

- Content items within top-level content containers are clearly named and easily identifiable for students, and, where necessary, sub-folders are used to organise content within the top-level content containers;

- The course contains online activities for students to take part in;

- The course has not previously won an Ultra Course Award.

Winners of 2026 Ultra Course Awards will be announced in the summer, and you can find out more about last year’s winners here.

Please note that nominations close at 23:59 on the 31st of March, 2026.

Ultra Course Awards 2026 – Nomination Form

H5P, Xerte, Video Showcase – Call for presenters

If you have created a great online learning resource for a course using H5P, Xerte, or Video, we’d like to hear from you. We are keen to highlight and celebrate examples of content which you are proud of at a virtual online showcase on Friday 27th March, 2026. Six slots of fifteen minutes are available for you to present with Q&A.

Please complete the online entry form by the deadline of Friday 6th March, 2026.

For more information see: H5P, Xerte, Video Showcase – Call for Presenters (PDF)

H5P, Xerte, Video Showcase – Call for presenters entry form


The new features in this month’s Blackboard upgrade will be available from Friday 9th January. This month’s upgrade includes the following new/improved features to Ultra courses:

- NILE course navigation improvements

- Improved options for true/false questions in Ultra tests

- Improved options for multiple option questions in Ultra tests

- Ultra document block layout improvement

NILE course navigation improvements

Following Friday’s upgrade, NILE courses will look slightly different. This change is designed to improve top-level navigation around NILE and to make better use of screen space. As part of the upgrade, all NILE courses will now have a course banner, although this can easily be changed by staff. Please note that this is a required update by Blackboard and not something that individual institutions have any control over.

More information is available at:

- Understanding how a NILE course works (student guide)

- Ultra courses system navigation update – January 2026 (video: 1m 43s)

- How to change your NILE course banner (video: 0m 58s)

- Update on system navigation changes in Blackboard (PDF document)

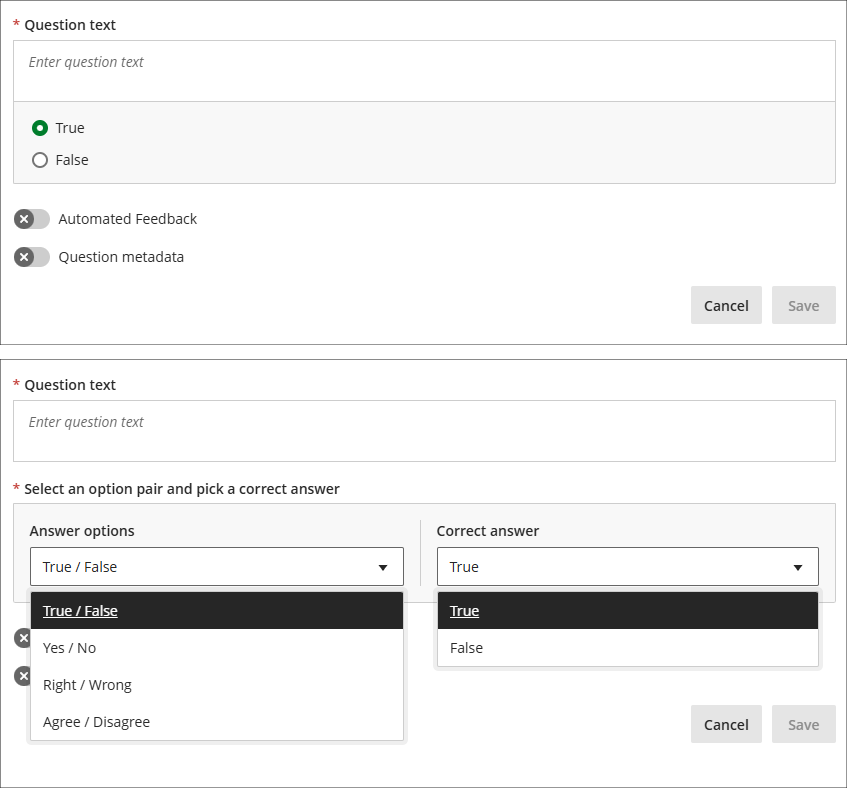

Improved options for true/false questions in Ultra tests

As part of the January upgrade the true/false question type in Ultra tests, which currently only allows users to select the response options ‘true’ or ‘false’, will be expanded to include a wider range of dichotomous response types:

- True / False

- Yes / No

- Right / Wrong

- Agree / Disagree

More information about Ultra tests is available from: Learning Technology Team – Ultra Workflow 3: Blackboard Test

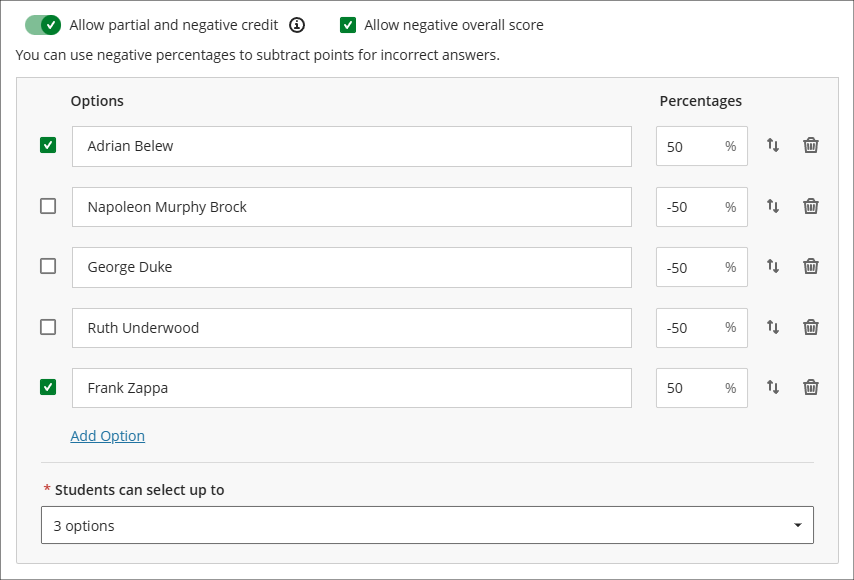

Improved options for multiple option questions in Ultra tests

The January upgrade will introduce a new option for staff setting up multiple option questions, allowing staff to define the maximum number of options that students can select (currently, students can select up to as many options as there are). When setting up multiple option questions, staff will need to specify: 1) the options which can be selected; 2) which of these options are correct; and 3) the maximum number of options that can be selected (this must be between one and four). Students will see a display showing how many options can be selected, but not how many options are correct, so for a more straightforward test the number of correct and selectable options would be the same, but for a more challenging test the maximum number of selectable options could exceed the number of correct options. And, depending on the level of challenge required, staff may or may not choose to disclose to students that the number of selectable options is greater than the number of correct options. Combined with the option to use negative marking, the multiple option question is an extremely versatile question type.

The following screenshot shows a complex multiple option question setup, with two correct answers but the option to choose up to three options. Additionally, partial and negative credit is enabled and a negative overall score is allowed, meaning that students can score between 100% and -150% of the question score.
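To see where a range like 100% to -150% can come from, here is a small worked sketch. The percentages are assumptions made for illustration (two correct answers at 50% each and three incorrect answers at -50% each), as is the function name `score_range`; it also assumes that students may select fewer options than the maximum and that a negative overall score is allowed.

```python
def score_range(percentages, max_selectable):
    """Return (best, worst) possible scores for a multiple option question.

    `percentages` lists the value of every answer option; a student may
    select anything from zero up to `max_selectable` options.
    """
    # Best case: pick only the highest positive values, up to the limit.
    positives = sorted((p for p in percentages if p > 0), reverse=True)
    # Worst case: pick only the most negative values, up to the limit.
    negatives = sorted(p for p in percentages if p < 0)
    best = sum(positives[:max_selectable])
    worst = sum(negatives[:max_selectable])
    return best, worst


# Hypothetical setup: two correct answers, three incorrect, select up to three.
print(score_range([50, 50, -50, -50, -50], max_selectable=3))  # (100, -150)
```

With these illustrative values, selecting both correct answers and nothing else gives 100%, while selecting three incorrect answers gives -150%.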

Where a multiple choice rather than a multiple option question is preferred, this can be set by specifying only one correct option and only allowing students to select one option. Where these settings are selected the question will display to students with radio buttons rather than checkboxes.

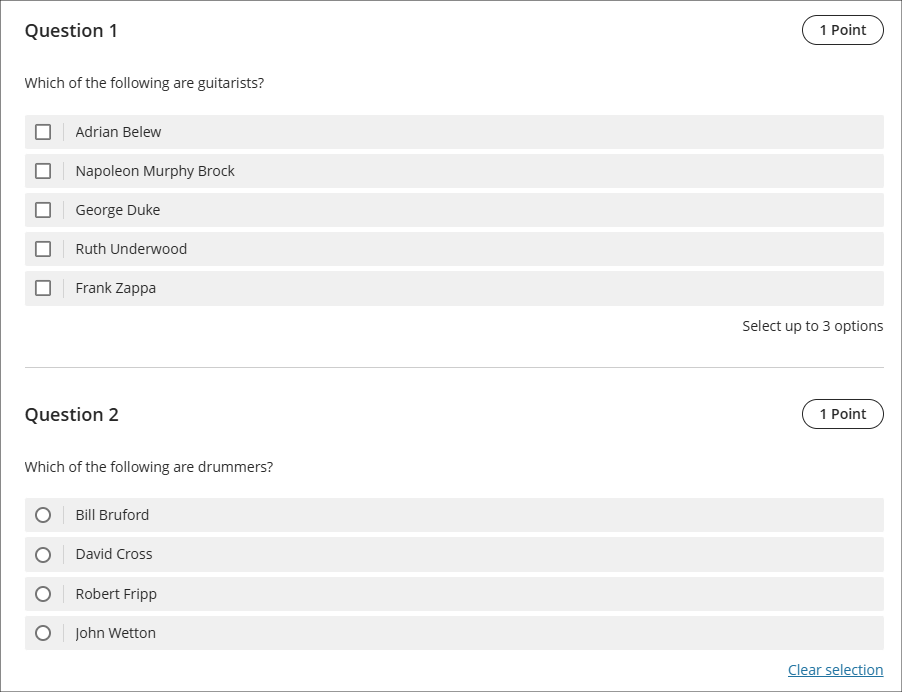

The following screenshot shows how the multiple option/choice questions display to students. Question 1 is the student view of the question already shown in the screenshot above. How many options can be selected is always shown to students, but how many correct answers there are is not displayed to students, so if you wanted to have questions which allowed more options to be selected than there are correct options, you might want to consider letting students know about this in the test instructions. Question 2 shows the display of a multiple option question with one correct option and only one option able to be selected.

More information about Ultra tests is available from: Learning Technology Team – Ultra Workflow 3: Blackboard Test

Ultra document block layout improvement

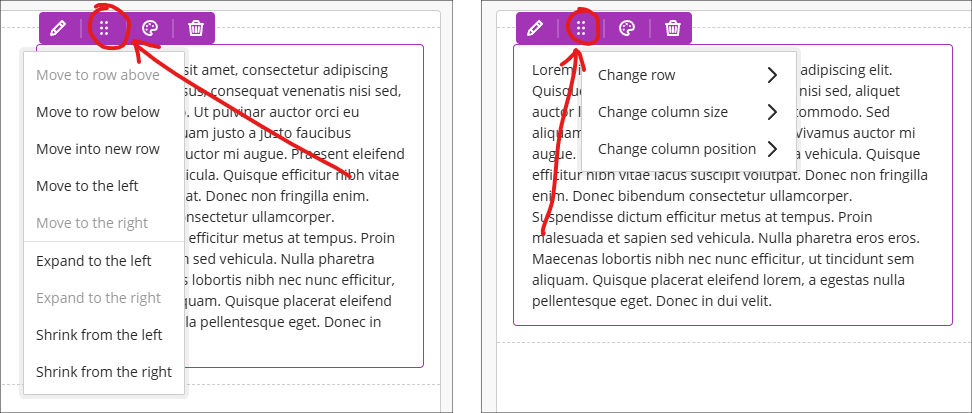

To improve usability and accessibility, the January upgrade will include a restructured menu for the Ultra document block layout. Currently, all options for changing the row, size, or position of a block are in a single dropdown list. Following the upgrade these options will be organised by row, size, and position. The following screenshot shows the pre-upgrade menu (shown on the left) and the post-upgrade menu (shown on the right).

More information about using Ultra documents is available from: Blackboard Help – Documents


Senior Lecturer in Business Management, Deborah Gardner, has introduced an innovative approach to student engagement in her Principles of Management module by creating an AI persona called Business Bot. This Level 4 AI character is designed to spark meaningful dialogue and deepen understanding of core management concepts.

What is Business Bot?

Business Bot is powered by the AI Conversation tool within the AI Design Assistant in Blackboard. It initiates conversations with students by posing thought-provoking questions such as:

“What are some of the main responsibilities of managers?”

From there, the discussion evolves dynamically, encouraging learners to explore ideas beyond surface-level answers. Unlike a quiz or a test, this is a digital conversation that challenges students to think critically and articulate their reasoning.

Why is this approach effective?

The AI conversation tool allows questions to develop naturally, testing students’ ability to engage in intelligent discourse. It promotes active participation and reflection, helping learners apply management theories to real-world scenarios.

Deborah explains:

“It’s not about right or wrong answers – it’s about encouraging students to think deeply and engage with concepts in a way that feels interactive and relevant.”

Student Feedback

The response from students has been overwhelmingly positive. Here are some highlights:

- “The conversation really made me think more deeply about the balance managers face when making decisions that not all staff agree with.”

- “It questioned my answer and made me think deeper as to why I thought what I did.”

- “Listening to different viewpoints helped me analyse management theories from multiple perspectives and understand their practical relevance.”

- “It enabled me to have a free conversation about navigating conflicts within the workplace and improved my critical thinking.”

Students also noted that the tool encouraged them to consider emotional intelligence, motivational strategies, and the complexities of managerial decision-making.

Expanding the Idea Across the Course

Deborah has now extended the use of Business Bot into her Level 6 module, Business Futures, where students explore Social Capital. For this advanced level, she has increased the complexity of the bot’s responses to encourage deeper critical thinking. The aim is to create continuity across the course so that Business Bot becomes a “course companion”—a familiar presence that supports learning in multiple modules.

Examples from Level 6 Student Work

Here are some examples of how Level 6 students engaged with Business Bot in the Business Futures module. These illustrate how the tool supports advanced critical thinking and application of concepts like Social Capital:

- Students explored the role of Social Capital in shaping organisational resilience and innovation, providing detailed case-based arguments.

- Feedback highlighted that the AI prompts encouraged deeper questioning and synthesis of multiple theoretical perspectives.

The screenshot below is of a Business Bot conversation where the AI guides a student through what Social Capital means, using probing follow‑up questions about trust, shared values, and the risks of fragile alliances. The exchange highlights how the tool pushes learners toward deeper, more analytical thinking.

Customising the AI Persona

One of the most powerful features of the AI Conversation tool is the ability to set the personality of the bot. Deborah shares her approach:

- For Level 4 students, she used the “Testing” personality to check understanding and prompt reflection.

- For Level 6 students, she switched to “Critical” to encourage analysis and evaluation.

This flexibility allows educators to tailor the AI experience to the learning outcomes of each module.

Want to Try This in Your Module?

Here’s how to get started:

- Access your module site.

- Navigate to Create in the Content area.

- Select AI Conversation and create a persona or topic-based conversation.

- Add your initial question and configure follow-up prompts.

- Publish the activity and encourage students to engage.

Tip: Start with open-ended questions to promote deeper thinking.

If you’d like support in exploring how this tool can enhance your students’ learning, contact your Learning Technologist to schedule a meeting.
