Assignment submission time is always stressful for students. The well-known challenges include decoding assignment briefs, managing multiple assignments, doing all the work that goes into completing them, getting the quotes and references right, and then the anxious wait for marks and feedback.

However, one potentially stressful stage that sometimes gets overlooked is the process of actually submitting the assignment. While this might seem like a minor stage in the process, it is a very important one, and is something that some students do struggle with, especially if it’s their first assignment, or uses a new/unfamiliar submission process, e.g., a video assessment. Additionally, and contrary to the popular myth, young people are not ‘digital natives.’ Many students come to university with low levels of digital ability and confidence, and for a lot of our students NILE will be the first VLE they’ve ever encountered, and the process of electronic assignment submission will be entirely new to them.

An excellent way to pre-emptively de-stress the assignment submission process is to adopt the view that it’s best to teach your students how to do all the things that you want them to do, including how to submit an assignment, and that’s exactly what the ITT (Initial Teacher Training) team do. In this guest post, Helen Tiplady, Senior Lecturer in Education (ITT Science), shares her approach to supporting students with the assignment submission process.

Here’s Helen:

Supporting students to submit their digital assessments correctly.

It may be due to our Primary school training backgrounds, but tutors in the Initial Teacher Training (ITT) team often share ‘What A Good One Looks Like’ with our students – otherwise fondly known as a ‘WAGOLL’.

One example I’d like to share with you was from a Level 4 science module (ITT1042) where students needed to complete a digital assessment piece. The premise was that they were planning a talk to a group of governors or sharing ideas at a staff INSET training day. The students needed to create a PowerPoint presentation along with their ‘speech’ written in the notes section. They then converted this to a PDF and uploaded this to the Turnitin submission point.

Although we have detailed, ‘step-by-step’ notes accompanied by screenshots for the students to follow as part of our assignment guidance, we have found that the most effective way for our students to upload their digital assessments correctly is through practice.

We offer a bespoke time during one of our learning events when students can observe the tutors demonstrate the steps to a successful submission (see Figure 1 below). We then ask the students individually to do a draft submission while the tutors are available to support and help with any issues. Finally, we ask the students to ‘teach each other’ how to upload their assessment correctly to Turnitin.

This final step is crucial as this will allow the students to recall the steps more successfully at a later date. After all, Confucius is famous for saying “I hear, I forget. I see, I remember. I do and I understand.”

| Step 1 – Model: Show the students the stages to submit their digital assessment correctly. |

| Step 2 – Practice: Let the students submit a draft submission. |

| Step 3 – Tell: Ask the students to tell someone the stages they have learnt. |

Figure 1: How to support students to upload digital assessments successfully

So, in summary, try and find some ring-fenced time in one of your classes for the students to do a trial run of submitting their digital assessments. Find a time when the stakes are low and there is no pressure of a looming deadline. And remember, the more the students feel prepared, the easier they will find it to submit their digital assessments correctly the first time.

We are delighted to announce the new V7 video player across all Kaltura content within NILE and on the MyMedia platform. This update introduces a variety of new features designed to enhance both teaching and learning experiences.

What’s New with the V7 Player on NILE?

The V7 player offers several improvements over the previous version, making it easier and more effective to use video content within your courses on NILE:

Interactive Searchable Transcript: One of the most significant new features is the interactive searchable transcript. This allows students to quickly search for specific keywords within the transcript and jump directly to that point in the video. This functionality makes it much easier for students to locate and review specific content, thereby enhancing their learning experience.

Downloadable Transcripts: In addition to being searchable, the transcripts are also downloadable. This feature supports our commitment to being an accessible university, as it enables students to keep a copy of the transcript for offline review or study. This is particularly beneficial for students who may need to access content in different ways, supporting diverse learning needs.

Improved User Experience

The new V7 video player introduces a range of enhancements, including the exciting Pop-Out Player feature. This allows users to detach the video into a resizable, floating window, perfect for multitasking. Whether you’re taking notes or browsing other NILE content, the pop-out player ensures you remain engaged with the video without interruption.

Streamlined Interface

The player’s sleek, modern design makes navigation intuitive. Key functions like playback speed, volume control, and full-screen mode are easily accessible, enhancing the overall user experience.

Faster Load Times and Improved Playback: The V7 player is optimised for faster load times and smoother playback, ensuring that your video content plays seamlessly across all devices, whether students are accessing it from a desktop, tablet, or smartphone.

Enhanced Accessibility: With built-in support for closed captions, subtitles, and transcripts, the V7 player is designed to be fully accessible. It complies with web accessibility standards, making your video content more inclusive for all students, including those with visual or hearing impairments.

What This Means for You

Simply continue to use NILE as you normally would, and you’ll see the new V7 player in action. We’ll update our guides, but we’d encourage you to jump in and explore the new capabilities, particularly the interactive and downloadable transcripts, which can significantly improve the way students interact with your video content.

We believe these enhancements will be a valuable addition to your teaching toolkit, making video content more accessible, engaging, and effective for your students. If you need any support, don’t hesitate to contact your Learning Technologist and we’ll be happy to help.

With the exception of the enhanced Ultra document design option, which will be added on the 12th of August, the new features in Blackboard’s August upgrade will be available from Friday 9th August. This month’s upgrade includes the following new/improved features to Ultra courses:

- Enhanced Ultra document design and Word/PDF/PowerPoint to Ultra document conversion option

- Improvements to AI Design Assistant image generation

- Advanced options for release conditions

- Improvements to Blackboard assignments

- Anonymous responses in Blackboard forms

- New option to follow discussions

Enhanced Ultra document design and Word/PDF/PowerPoint to Ultra document conversion option

The August upgrade includes two significant improvements to Ultra documents. The first improvement is the ability to create advanced multi-column layouts. By using multiple content blocks and making use of columns, staff can create documents with different layouts. When students view pages with multi-column layouts on a mobile device, the pages will respond by re-flowing the content into a single column so that the content remains viewable.

The second improvement to Ultra documents is a new option to convert Word, PDF, and PowerPoint files to Ultra documents. Documents can still be uploaded and kept in their original formats, and Word, PDF, and PowerPoint documents can still be displayed inline in the browser, but, where possible, converting them to Ultra documents is preferable as it allows them to be more easily viewed on mobile devices.

More information about creating Ultra documents and converting Word, PDF, and PowerPoint files to Ultra documents is available from: Blackboard Help – Create Documents

Improvements to AI Design Assistant image generation

After the August upgrade, the AI image generation process will use DALL-E 3 rather than DALL-E 2. This change will allow staff to generate higher quality, higher resolution images in the following places:

- Learning Module images

- Document images

- Announcement images

- Assessment question images

- Journal prompts images

More information about using the AI Design Assistant’s image generator is available from: Learning Technology Team – AI image generator & Unsplash image library

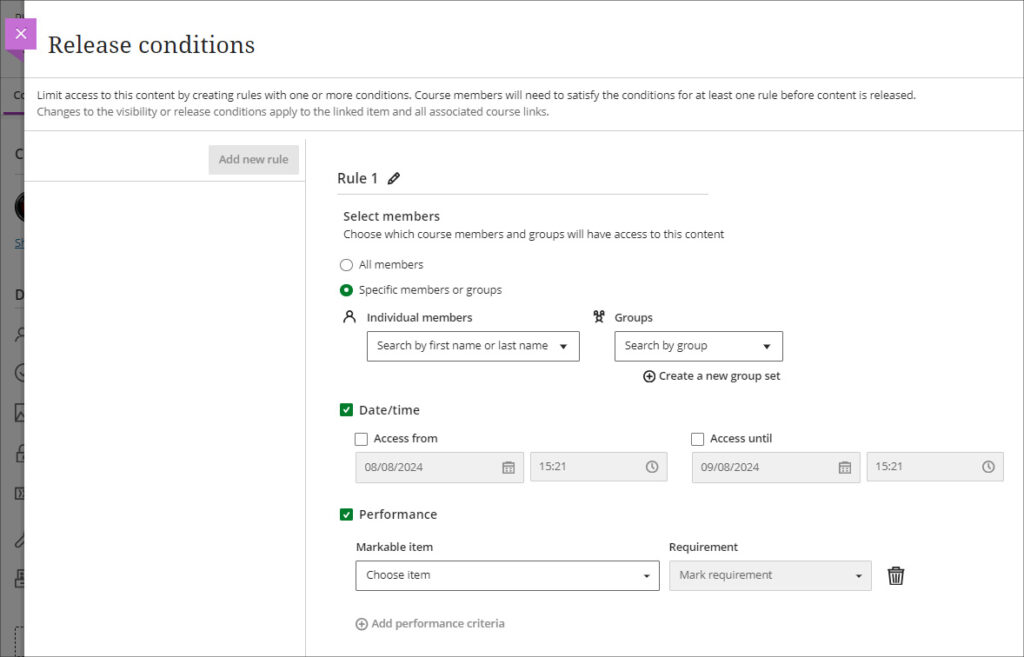

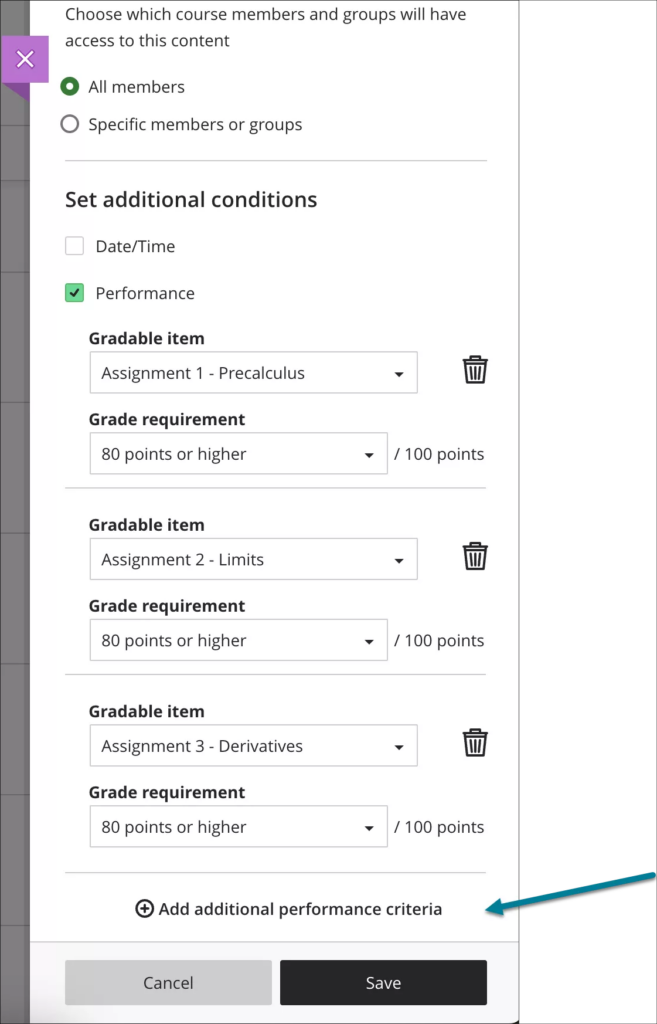

Advanced options for release conditions

August’s upgrade will allow staff to create more complex release conditions, based on date, time, and grade range performance criteria. Additionally, the new release conditions options will allow staff to use multiple rules, and to create different sets of rules for specific individual learners, groups, or for all members.

More information about using release conditions is available from: Blackboard Help – Content Release Conditions

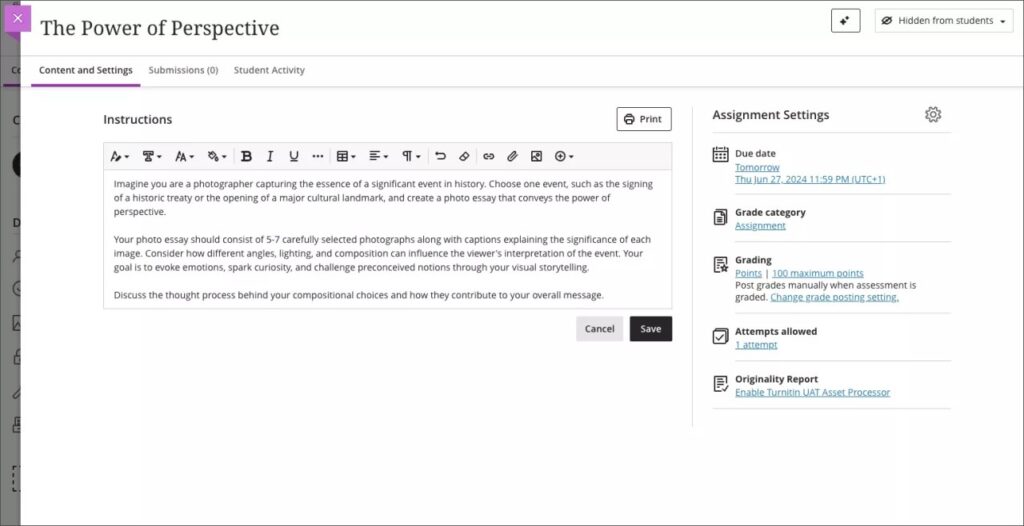

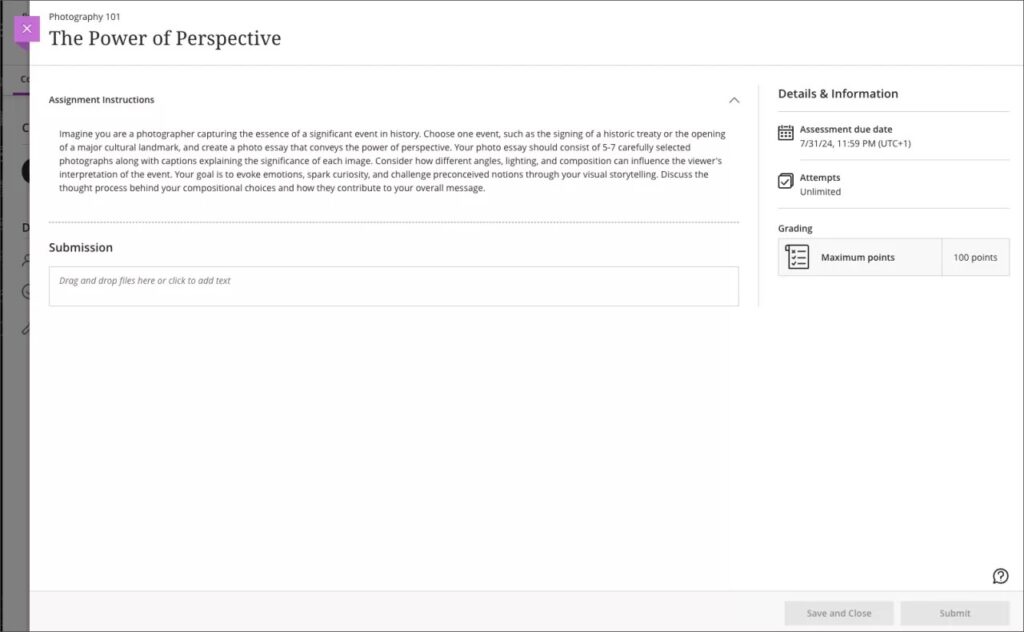

Improvements to Blackboard assignments

Prior to the August upgrade, when setting up a Blackboard assignment there were a number of options available that were relevant for Blackboard tests, but not for assignments. After the August upgrade, when setting up a new Blackboard assignment, staff will notice the following improvements:

- A new instructions box where staff can use the content editor to write assignment instructions.

- There are no longer options to add questions to an assignment, as these are only relevant for Blackboard tests.

- The assignment settings panel now includes only options relevant to assignments.

- Blank attempts are no longer created when students view assignment instructions. The system only creates an attempt when students add content to the file drop zone / content editor. However, group and timed assessments will continue to create attempts when students view the instructions.

Additionally, the student experience of using Blackboard assignments has been improved, and it is now much more straightforward for students to upload their files by dragging and dropping a file into the file drop zone.

More information about setting up a Blackboard assignment is available from: Learning Technology Team – Ultra Workflow 2: Blackboard assignment

Anonymous responses in Blackboard forms

Following the August upgrade, staff will be able to set Blackboard forms to receive anonymous responses. Blackboard forms function almost identically to Blackboard tests, the main difference being that forms can be ungraded/unmarked, whereas tests cannot be. Forms therefore only have question types that are appropriate for ungraded responses, but they do include a Likert question type which is not available in Blackboard tests. With the introduction of anonymous responses in forms, this tool can now function effectively as an anonymous survey tool within each NILE course. Staff who used the survey tools in Original courses will find that forms now replicate in Ultra all the functionality of surveys in Original.

When anonymous submissions is selected, these settings are enabled by default:

- Due date

- Prohibit late submissions

- Prohibit new attempts after due date

- Complete/incomplete is selected as the grading schema for non-graded forms

- If the anonymous form is graded, the submission earns all the points assigned; you can’t edit or override the points earned.

Additional important details to note:

- Anonymous forms cannot be administered to groups.

- Class conversations are not supported when anonymous submissions is selected.

- To ensure anonymity, student activity, exceptions, exemptions and accommodations are not supported.

- To ensure anonymity, student progress/statistics are not captured.

- Modifications to form questions and settings are not permitted if the form has submissions and the due date has passed.

More information about Blackboard forms is available from: Blackboard Help – Forms

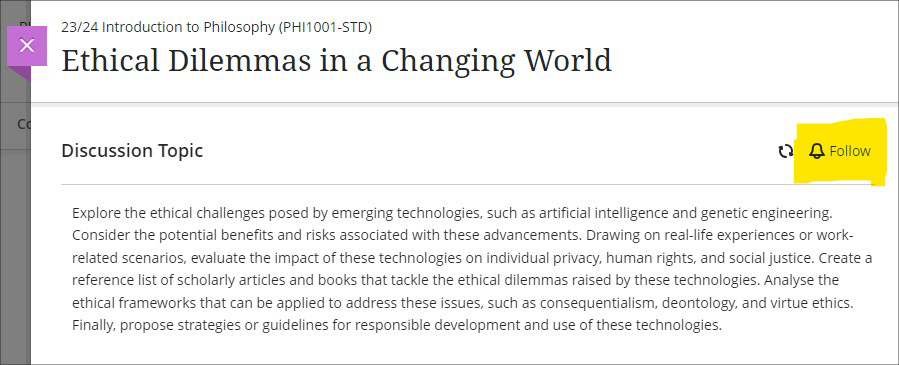

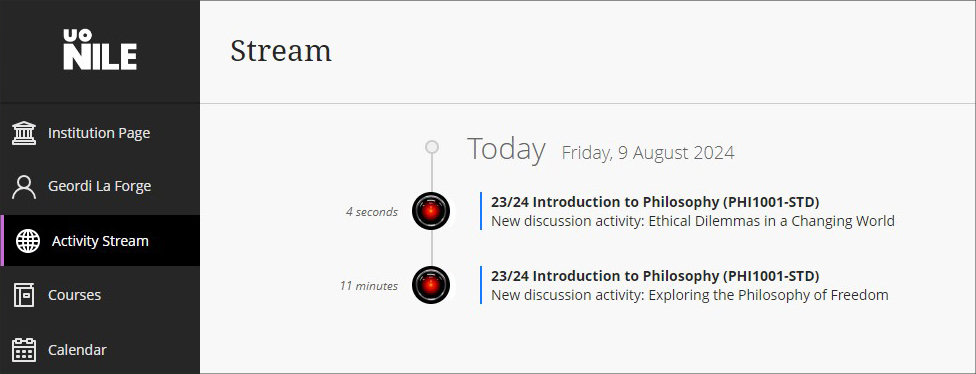

New option to follow discussions

August’s upgrade will introduce the option for staff and students to follow discussions, which has been a much-requested feature. However, please note that this initial release is somewhat limited: notifications for followed discussions will appear in the activity stream, but will not be sent as emails or as push notifications to mobile devices for Blackboard app users.

Staff and students can follow or unfollow particular discussions via the selector in the discussion forum, and new responses and replies to followed discussions will be shown in the activity stream.

More information about setting up and using discussions is available from: Blackboard Help – Discussions

More information

As ever, please get in touch with your learning technologist if you would like any more information about the new features available in this month’s upgrade: Who is my learning technologist?

The new features in Blackboard’s July upgrade are available now. This month’s upgrade includes the following new/improved features to Ultra courses:

- New NILE courses for the 24/25 academic year now available

- New option to generate a Turnitin similarity report when using Blackboard assignments

- Improvements to the grading interface for Blackboard assignments and tests

- Improvements to printing tests and assessments

- Improvements to announcements

- Enrolling students onto NILE courses

- Uploading video and audio files to NILE

- Increased adverts on YouTube videos (from 1st August)

- End of life for guest access to NILE, including welcome courses and organisations, and removal of old Original courses and organisations (from 31st December)

New NILE courses for the 24/25 academic year now available

Creation of new NILE courses for the 24/25 academic year has been completed, and staff are now able to enrol on and begin setting up their new NILE courses.

If content needs to be copied into a new NILE course from an old one, please ensure that the correct process is followed as this will reduce the likelihood of problems occurring later on in the new course, especially around non-functioning assignment submission points. More information about the course copy process is available from: Learning Technology Team – How do I copy content into a NILE Ultra course?

Full guidance about enrolling on and setting up new NILE courses is available from: Learning Technology Team – Getting your NILE course set up and ready for teaching

Following the move to the SITS student records system, NILE course IDs are now in four parts, not three, e.g., PHI1001-SUN-2425-S1. The first part of the course ID is the module code; in this case, PHI1001. The second part of the course ID is the session code; in this case, SUN. SUN (Standard University of Northampton) is a common session code and denotes that the course is delivered on campus here at the University of Northampton. The third part of the course ID is the academic year; in this case, 2425, denoting the academic year 2024/2025. The final part of the course ID refers to the semester in which the course is taught; in this case, S1, semester one. More information about the new NILE course IDs is available from: Learning Technology Team – How do I decode my NILE course ID?
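For anyone who finds it easier to see the four parts laid out in code, the decoding described above can be sketched in a few lines of Python. This is only an illustration, not part of NILE or SITS: the `CourseId` class and `parse_course_id` function are hypothetical names, and the sketch assumes that every course ID has exactly four hyphen-separated parts and that the academic year is always four digits falling in the 2000s.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CourseId:
    """The four parts of a NILE course ID (hypothetical helper class)."""
    module_code: str    # e.g. PHI1001
    session_code: str   # e.g. SUN
    academic_year: str  # e.g. 2024/2025
    semester: str       # e.g. S1


def parse_course_id(course_id: str) -> CourseId:
    """Split a course ID such as PHI1001-SUN-2425-S1 into its four parts."""
    parts = course_id.strip().split("-")
    if len(parts) != 4:
        raise ValueError(f"Expected four parts, got {len(parts)}: {course_id!r}")
    module_code, session_code, year, semester = parts
    if len(year) != 4 or not year.isdigit():
        raise ValueError(f"Academic year should be four digits: {year!r}")
    # Assumption: both halves of the year code are years in the 2000s.
    academic_year = f"20{year[:2]}/20{year[2:]}"
    return CourseId(module_code, session_code, academic_year, semester)


print(parse_course_id("PHI1001-SUN-2425-S1"))
```

Running this on the example ID gives the module code PHI1001, the session code SUN, the academic year 2024/2025, and the semester S1.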

Please note that, following new guidance issued to UK HEIs by the Office for Students, the NILE Design Standards have been updated this year, with the addition of the following items to section A: ‘Availability of course content in NILE courses’ and ‘Availability of student assessment, grades and feedback in NILE’.

New option to generate a Turnitin similarity report when using Blackboard assignments

A popular request from staff has been the ability to use Blackboard’s assignment submission tool, but to also generate Turnitin similarity reports for work submitted. Prior to the July upgrade this was not possible, and staff had to choose between generating a Turnitin similarity report, or using Blackboard’s more flexible and feature-rich assignment tool. However, following the July upgrade, and along with the switch to the new and improved flexible grading interface for Blackboard assignments and tests (see below), a new Turnitin integration has also been enabled in NILE allowing Turnitin similarity reports to be generated when using a Blackboard assignment.

More information about using Turnitin with Blackboard assignments is available from: Blackboard Help – Turnitin

Improvements to the grading interface for Blackboard assignments and tests

The gradebook in Ultra courses has been upgraded to Blackboard’s new flexible grading interface. This will not affect staff assessing Turnitin assignments, but staff marking Blackboard assignments and tests will notice a marked improvement in the grading interface, which is more intuitive and which allows for quicker and easier access to all Blackboard’s grading tools.

More information about grading assignments using the new interface is available from: Blackboard Help – Grade Assignments With Flexible Grading

More information about grading tests using the new interface is available from: Blackboard Help – Grade Tests With Flexible Grading

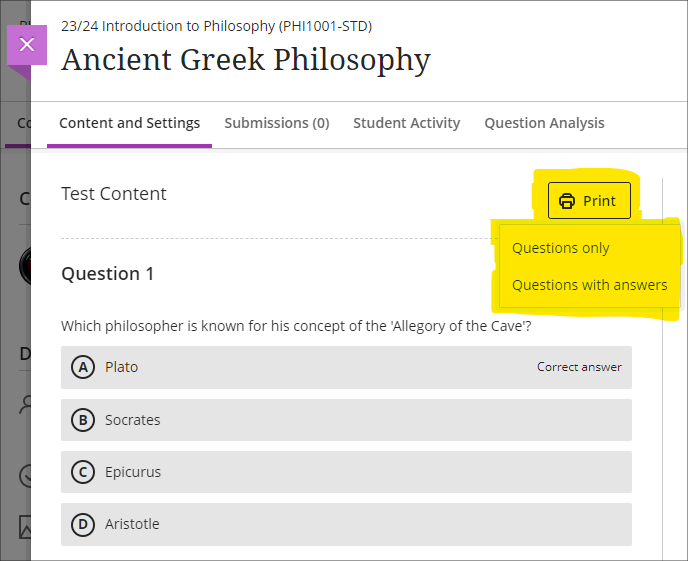

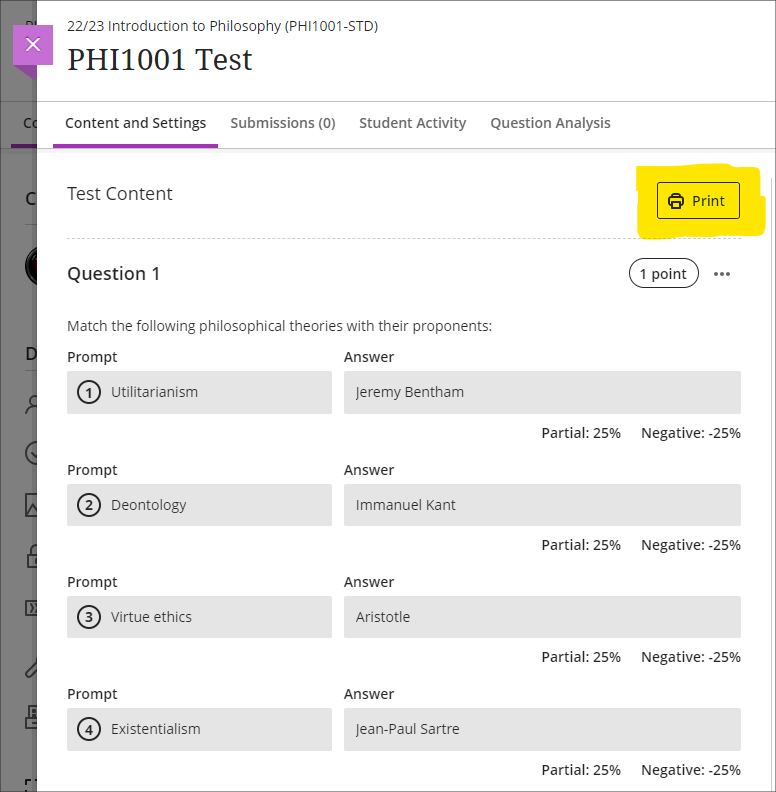

Improvements to printing tests and assessments

Following on from last month’s upgrade, which introduced the option for staff to print Ultra tests and assignments, this month’s upgrade adds the option to select whether to include both the questions and correct answers (where specified), or just the questions.

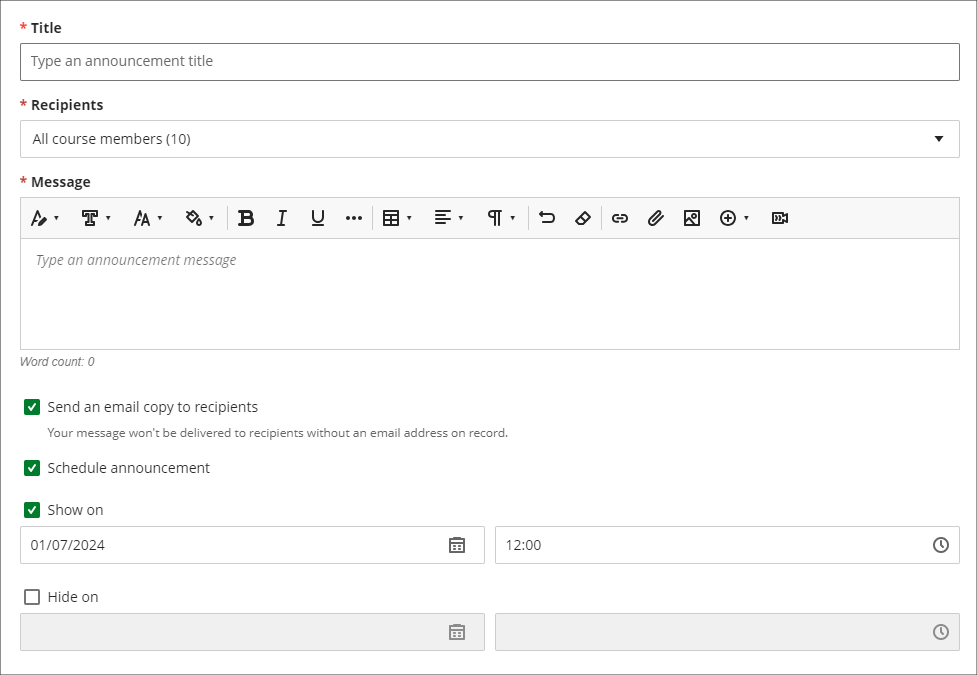

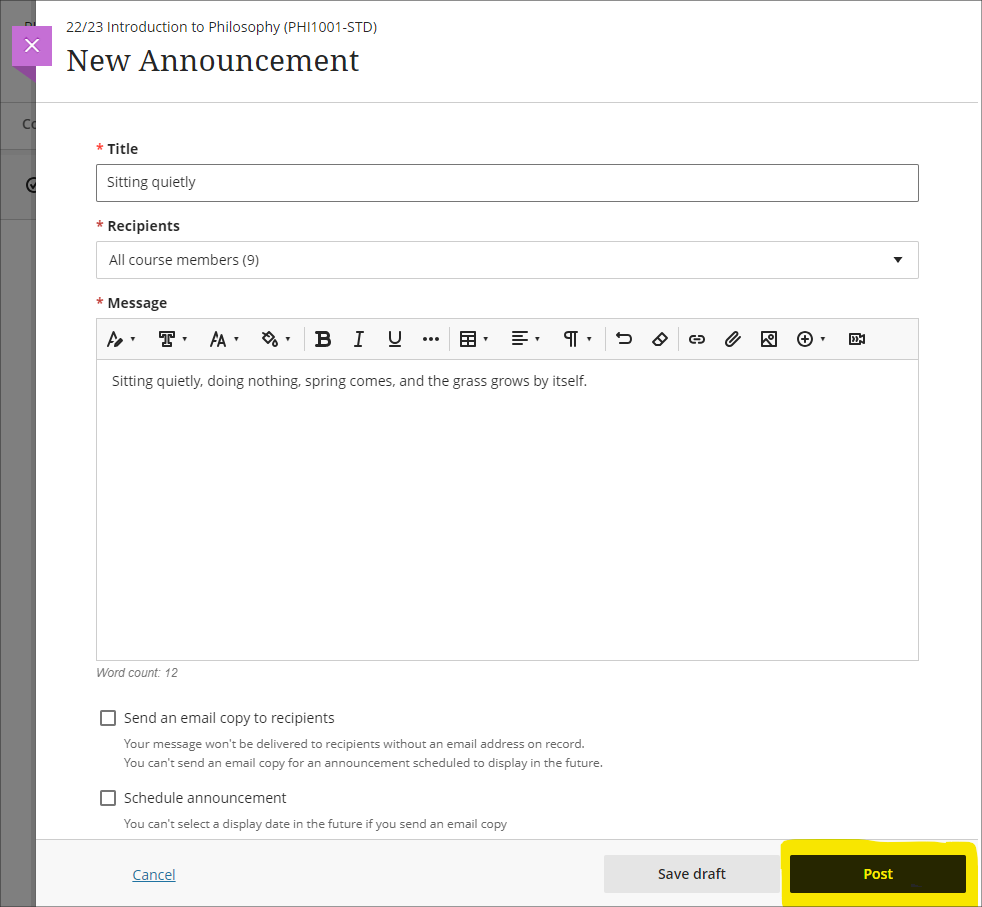

Improvements to announcements

Prior to the July upgrade, staff were not able to select both the ‘Schedule announcement’ and the ‘Send an email copy to recipients’ options when creating an announcement. Following the upgrade, it will be possible for staff to both schedule an announcement, and to send a copy via email at the time the announcement is scheduled. Previously, students were notified by email of scheduled announcements in a digest email sent the following morning, but staff now have the option for the email notification of the announcement to be sent as soon as the scheduled announcement is live.

Enrolling students onto NILE courses

Following the introduction of the SITS student records system, all enrolments onto and removals of students from module-level and programme-level NILE courses will be handled by a new SITS/NILE integration, which replaces the previous QL/NILE integration. Because of the need to ensure that the NILE student enrolment record and the SITS student enrolment record remain synchronised at all times, there is no longer any option for staff to manually enrol students onto NILE courses, or to remove students, change their role, or change their availability. Where a student is not enrolled on a NILE course but should be, this can no longer be fixed by manually enrolling the student; instead, the reason for non-enrolment must be identified and remedied in SITS, following which the student will be enrolled on the NILE course.

As a result of these changes, staff will no longer have access to view the ‘Member information’ panel in NILE, meaning that students’ UON email addresses are not viewable to staff in NILE. However, staff can still send emails to students via the announcements and messages tools. Where staff need to know a particular student’s email address, this information is available in the new Student Record View (SRV) tool, which is the SITS replacement for OASIS. For training on SRV please see: Staff Development: SRS -SRV Training

Staff still retain the ability to enrol themselves and other instructors onto their NILE courses, and to enrol their external examiners and other members of UON staff who are supporting their students.

Uploading video and audio files to NILE

Prior to the July upgrade there have been multiple methods of uploading a video or audio file to NILE, and it has not been clear as to the best method to use. Following the upgrade, staff and students will now be advised to use Kaltura, the University’s dedicated media streaming system, when uploading audio and video files to NILE. In order to assist with this process, if a video or audio file is being uploaded to NILE via a different method, staff and students will see a helpful pop-up screen prompting them to use Kaltura instead, and providing links to support materials which explain how to do this.

There are several advantages of uploading media files to NILE using Kaltura. Of particular importance, and unlike other upload methods which are subject to a maximum file size limit of 1024MB (1GB), there are no file size limits for uploads to NILE via Kaltura. This is especially useful for video files which can and often do exceed the 1024MB limit. Additionally, video files uploaded via Kaltura are transcoded to multiple streaming formats, allowing for the best viewing experience across different devices and different Internet connection speeds. This allows the viewing of video files in full HD quality on devices with large screens and good connection speeds, while also allowing videos to be viewed on devices with slower connection speeds without frequent stops to the playback while loading the video. And to aid accessibility, all Kaltura media is also auto-transcribed/captioned, and where auto-transcriptions are manually checked and edited for accuracy, they meet the required standards for accessibility.

More information for staff about Kaltura is available from: Learning Technology Guides – Kaltura

More information about video accessibility and transcription/captioning is available from: Learning Technology Guides – Captioning Collaborate lectures, Kaltura recordings, and other video and audio content

Increased adverts on YouTube videos (from 1st August)

YouTube videos embedded in NILE are currently subject to the same advertising policies as videos viewed directly on YouTube. This includes pre-roll, mid-roll, and post-roll adverts. Google, who own YouTube, have announced that starting August 1st 2024, the number of adverts shown in embedded videos will increase, meaning more interruptions during video playback. As an alternative to YouTube, staff may like to consider making use of Box of Broadcasts (BoB) to source media content.

More information about BoB is available from: Learning Technology Team – Box of Broadcasts (BoB)

End of life for guest access to NILE, including welcome courses and organisations, and removal of old Original courses and organisations (from 31st December)

In order to implement necessary security measures, from the 1st of January 2025 guest access to NILE will no longer be possible. This means that only logged in users will be able to access NILE. Guest access to Ultra courses has never been possible; however, some old Original courses, including welcome courses, may still be available via guest access and the information they contain may need to be relocated.

Additionally, please note that while most NILE courses are regularly archived and removed from NILE in accordance with the NILE Archiving and Retention Policy, some old Original courses and organisations remain on the system and will continue to be removed from NILE on a rolling ten-year basis. Currently, all Original courses and organisations created before 01/01/2014 are no longer available on NILE, and courses created before 01/01/2015 will no longer be available from 1st January 2025.
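To make the rolling ten-year arithmetic concrete, here is a minimal Python sketch. It is an illustration only and not a statement of the NILE Archiving and Retention Policy: the function names are hypothetical, and it assumes that the cut-off date moves forward by one year every 1st January, which is consistent with the two dates given above but is not confirmed beyond them.

```python
from datetime import date

RETENTION_YEARS = 10  # the rolling ten-year period mentioned above


def removal_cutoff(today: date) -> date:
    """Return the date before which old Original courses are no longer available.

    Assumption: the cut-off is 1st January of the year ten years before
    the current calendar year.
    """
    return date(today.year - RETENTION_YEARS, 1, 1)


def is_still_available(created: date, today: date) -> bool:
    """True if a course created on `created` would still be on the system."""
    return created >= removal_cutoff(today)


# During 2024 the cut-off is 01/01/2014; from 1st January 2025 it is 01/01/2015.
print(removal_cutoff(date(2024, 7, 1)))
print(removal_cutoff(date(2025, 1, 1)))
print(is_still_available(date(2014, 9, 1), date(2025, 1, 1)))
```

Under these assumptions, an Original course created in September 2014 is available throughout 2024 but not from 1st January 2025.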

Staff who are concerned that they may be affected by either of these matters are encouraged to contact Robert Farmer, the Learning Technology Manager, to discuss their requirements. Where information needs to be available to people who do not have a NILE login, it will be necessary to use another platform to provide this. However, where using NILE is still the best option, we will be happy to provide a new Ultra course or organisation to replace the old Original one.

More information

As ever, please get in touch with your learning technologist if you would like any more information about the new features available in this month’s upgrade: Who is my learning technologist?

The new features in Blackboard’s June upgrade will be available on Friday 7th June. This month’s upgrade includes the following new/improved features to Ultra courses:

Post announcements immediately

Following feedback from staff, June’s upgrade will make posting announcements in Ultra courses easier and more intuitive. Currently, an announcement has to be saved and closed before it can be posted from the announcements panel. However, this sometimes causes confusion, as the save button does not actually post the announcement. June’s upgrade will add the ‘Post’ button directly into the panel in which the announcement is created, allowing staff to compose and post the announcement in the same panel. The option to compose the announcement, save it, and post it later will still be available though.

Print Ultra tests and assessments – staff only

The June upgrade will introduce a print button which will allow staff to generate a PDF copy of an Ultra test or assignment, or to send a printable copy to a printer. The print button is available to staff only, and there are no plans to make it available to students.

Please note that there are certain limitations with the initial release of the print functionality; specifically, where a question pool is used as a question type, it is not included in the print.

More information

As ever, please get in touch with your learning technologist if you would like any more information about the new features available in this month’s upgrade: Who is my learning technologist?

The new features in Blackboard’s May upgrade will be available on Friday 2nd May. This month’s upgrade includes the following new/improved features to Ultra courses:

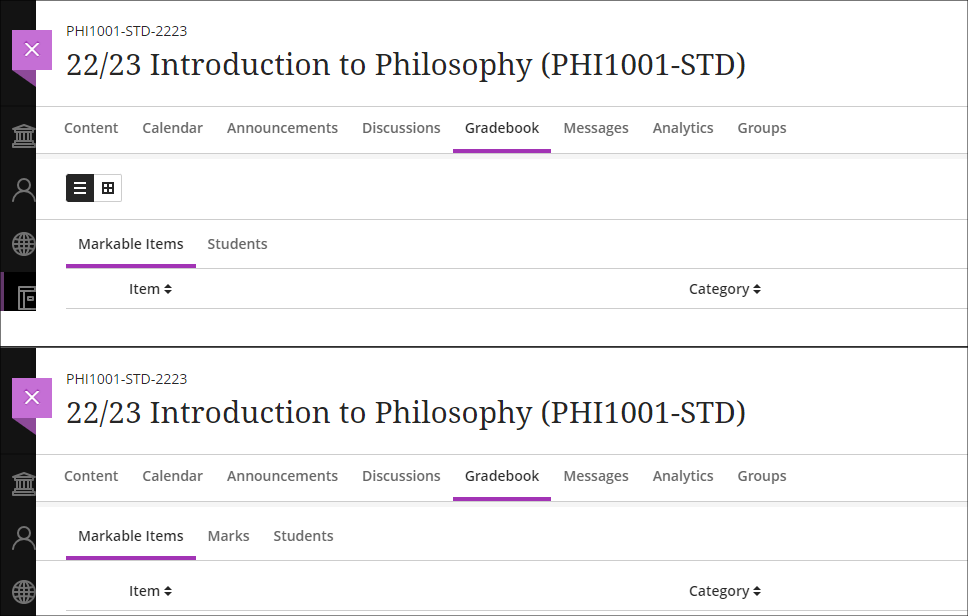

Improved gradebook navigation

There are currently three different views of the Ultra gradebook which are available under two gradebook sub-tabs, one of which has a grid/list selector. These views are:

- Markable items (list view)

- Markable items (grid view)

- Students

As this can make navigating the gradebook rather confusing, May’s upgrade will provide each gradebook view with its own sub-tab as follows:

- Markable items (list view) will become Markable items

- Markable items (grid view) will become Marks

- Students will remain as it is

Supporting multiple performance criteria in release conditions

Staff can currently set performance-based release conditions for items in Ultra courses against only a single performance criterion (i.e., a gradable item for which students have a grade in the Ultra gradebook). For example, if there are three tests in a course and staff want to make an item available only to students who have scored above a certain score in each test, this is not possible. As a workaround, staff can use cascading release criteria, e.g., releasing the second test when the first is passed, the third when the second is passed, and the content item when the third test is passed. However, using cascading release criteria is not always what is desired, and they can be complicated to set up.

Following the May release, staff will be able to set release conditions against multiple performance criteria, allowing staff to selectively release content in Ultra courses to students who have fulfilled multiple conditions. Where staff want to continue using a series of cascading release conditions they can continue to do so, but will no longer have to use them as a workaround. When setting up performance-based release conditions, staff can set content to be released to students who have scored n points/% or higher, or can specify custom ranges.

Release conditions can be especially useful for staff who want to automatically release content to students based upon their assessment scores. For example, releasing one set of content designed to support students who have failed an assessment, another set to students who have passed the assessment, and more challenging content to students who have performed exceptionally well.
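For staff who like to think through the logic before setting it up, the idea of releasing content against multiple performance criteria can be sketched in Python as follows. This is not Blackboard’s code or API, and release conditions are set up in the Ultra course interface rather than in code; the `ScoreRule` class, the `is_released` function, the item names, and the 60% threshold are all hypothetical and are used purely to illustrate that every rule must be satisfied before the content is released.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScoreRule:
    """One performance criterion: a gradable item and an allowed score range (%)."""
    item: str
    minimum: float
    maximum: float = 100.0


def is_released(rules: list[ScoreRule], scores: dict[str, float]) -> bool:
    """Content is released only if the student satisfies every rule."""
    for rule in rules:
        score = scores.get(rule.item)
        # No grade yet, or a grade outside the range, means the rule is not met.
        if score is None or not (rule.minimum <= score <= rule.maximum):
            return False
    return True


# Hypothetical example: release an item to students scoring 60% or more in all three tests.
rules = [ScoreRule("Test 1", 60), ScoreRule("Test 2", 60), ScoreRule("Test 3", 60)]

print(is_released(rules, {"Test 1": 70, "Test 2": 65, "Test 3": 90}))  # all three rules met
print(is_released(rules, {"Test 1": 70, "Test 2": 40, "Test 3": 90}))  # Test 2 is below 60
```

The same structure covers custom ranges: a rule such as `ScoreRule("Test 1", 0, 39)` could describe content aimed at students who did not pass, assuming a pass mark of 40%.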

More information about using release conditions in Ultra courses is available from: Blackboard Help – Content Release Conditions

More information

As ever, please get in touch with your learning technologist if you would like any more information about the new features available in this month’s upgrade: Who is my learning technologist?

With an overload of information and such dichotomous opinions about Artificial Intelligence (AI), it is difficult to know where to begin; especially if you are yet to experience using AI at all. One starting point is to find out where you are with your own knowledge and the Jisc discovery tool can assist with this.

The discovery tool, which was introduced to UON in 2020, is a developmental tool that students and staff can use to self-assess their digital capabilities, identify their strengths, and highlight opportunities to develop skills. The tool has been recently updated to include a question set for both staff and students on their capability and proficiency with AI and generative AI tools.

The question sets for students and staff have been developed with assistance from Jisc and align with the latest AI advice and AI guiding principles developed by Jisc and the Russell Group on the responsible and equitable use of AI to enhance learning and teaching.

How the discovery tool helps students and teachers

The new question sets provide users with a basis to self-assess their skills and knowledge of what AI is and how it could, or should, be used in the context of their studies or role.

Once users complete the question set, they can then access a personalised report with a confidence rating which will vary from ‘developing’ through ‘capable’ to ‘proficient’ depending on their experience. The report also provides recommendations and courses on how to advance knowledge around AI.

Users can repeat any of the discovery tool’s question sets at any point and therefore keep a dynamic view of their confidence levels.

Where can I access the tool?

Click here to log straight into the discovery tool and the AI question set, or copy and paste the link below into your address bar.

How can I support students?

To assist students in enhancing their digital skills and their knowledge and understanding of AI, we have put together a student guide which can be found here. It may also be helpful to add a link to this guide, or to the discovery tool itself, within NILE courses.

What if I would like to know more?

For more information about how to use the discovery tool, see: https://digitalcapability.jisc.ac.uk/resources-and-community/discovery-tool-guidance/staff/

For further information about the AI design assistant in NILE or Padlet’s new AI features, please get in touch with your Learning Technologist.


The new features in Blackboard’s April upgrade will be available on Friday 5th April. This month’s upgrade includes the following new/improved features to Ultra courses:

- Anonymous discussions

- AI Design Assistant: Select course items/context picker enhancements

- Duplicate test/form question option, plus changes to default test question value

- Likert form questions include options for 4 and 6, as well as 3, 5, and 7

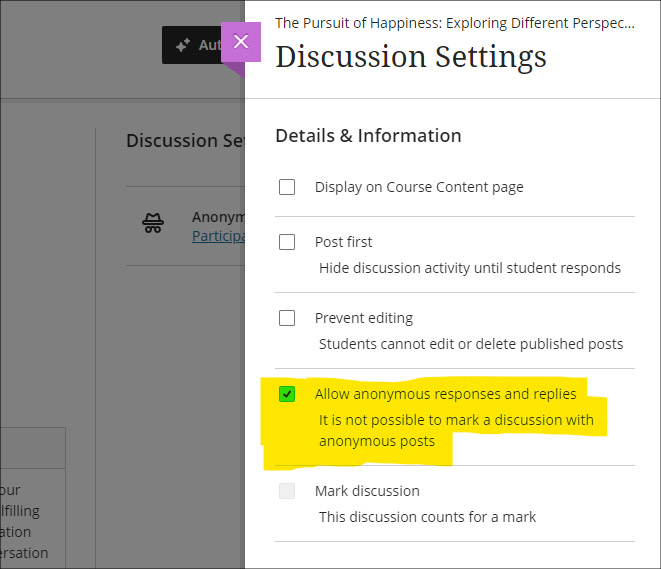

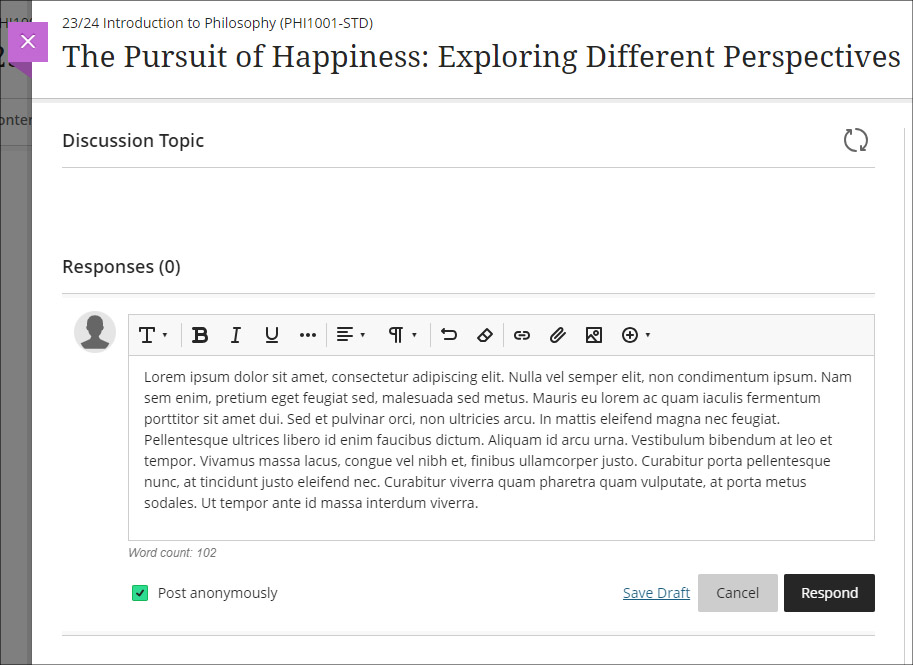

Anonymous discussions

Following feedback from staff, April’s upgrade will let staff set up Ultra discussions in which students can post and reply to posts anonymously. After the upgrade, the option to allow anonymous responses and replies will be available in the ‘Discussion Settings’ panel.

Please note that selecting ‘Allow anonymous responses and replies’ does not mean that all responses and replies will be anonymous; rather, it means that students and staff can choose to post anonymously if they want to. To post anonymously, the ‘Post anonymously’ checkbox will need to be selected. Once posted, the anonymity of a post cannot be changed – i.e., an anonymous post cannot be de-anonymised by the person who posted it, and a non-anonymous post cannot be changed to anonymous.

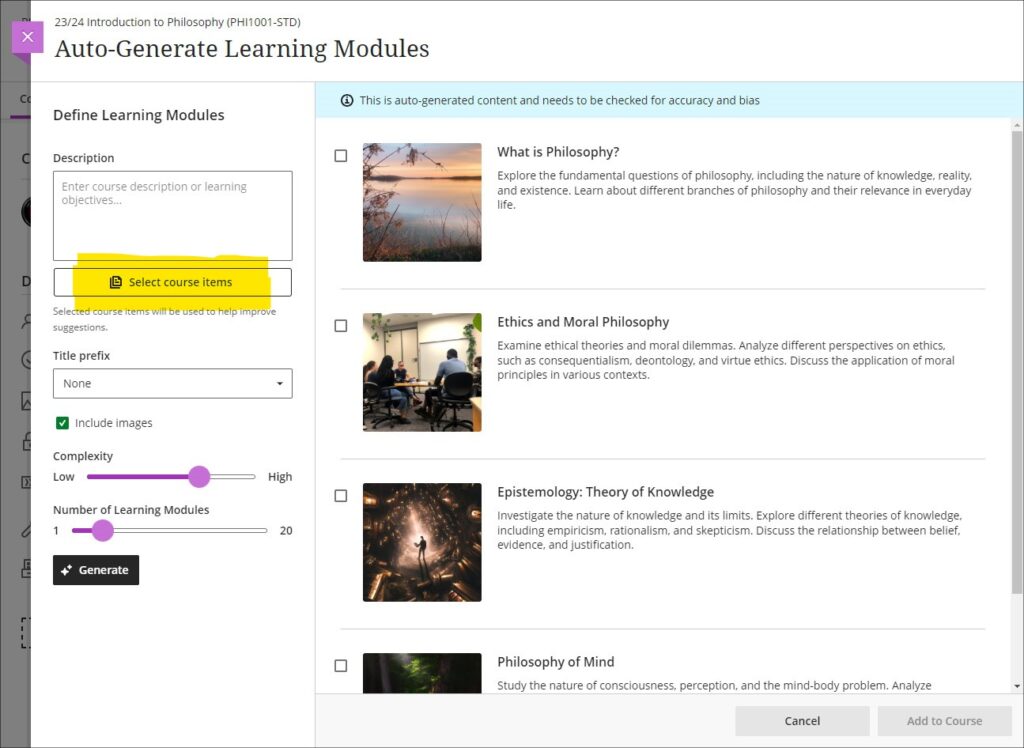

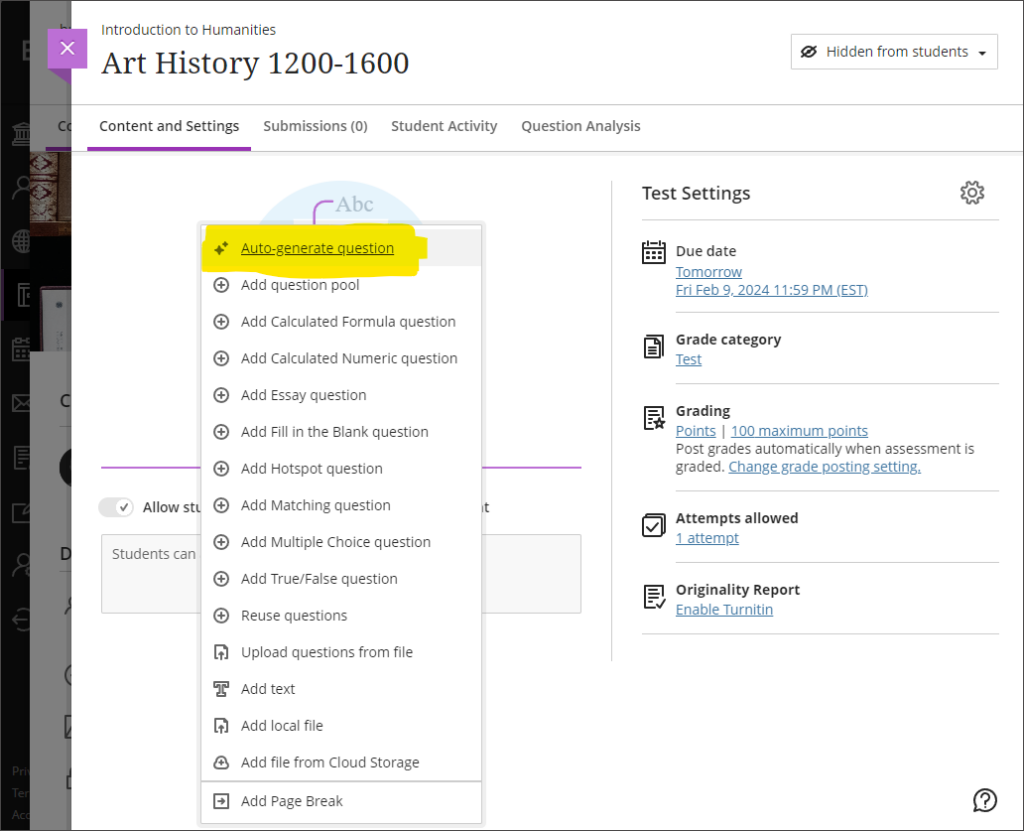

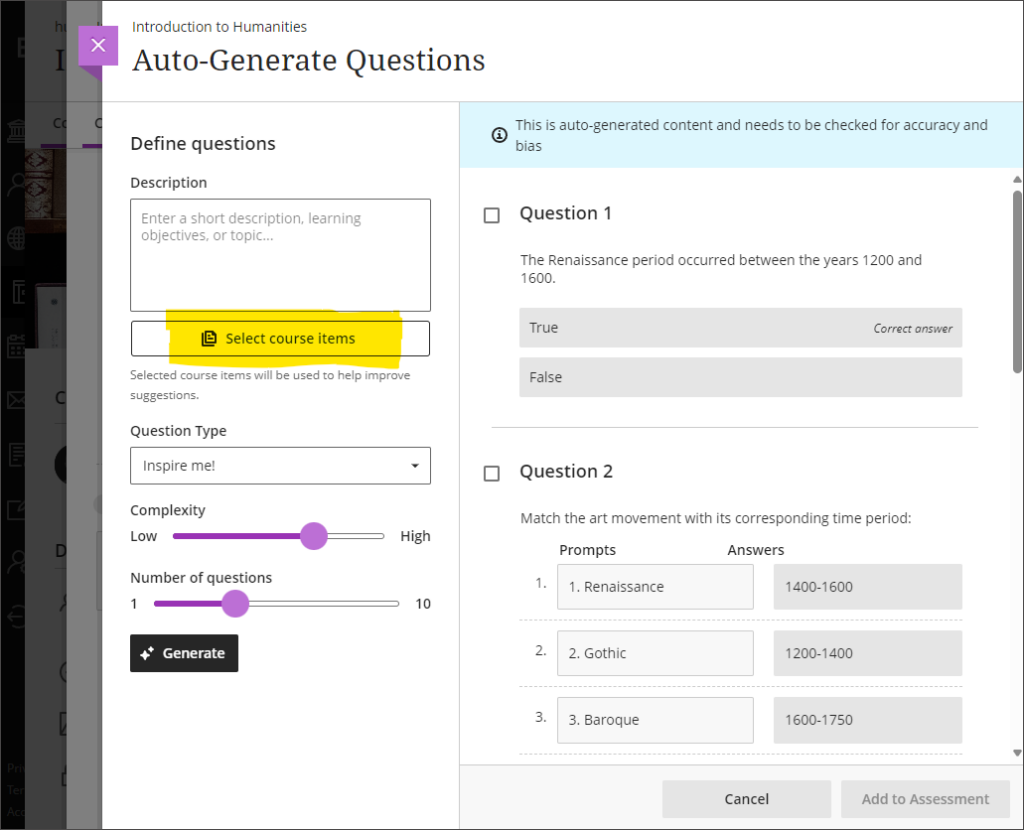

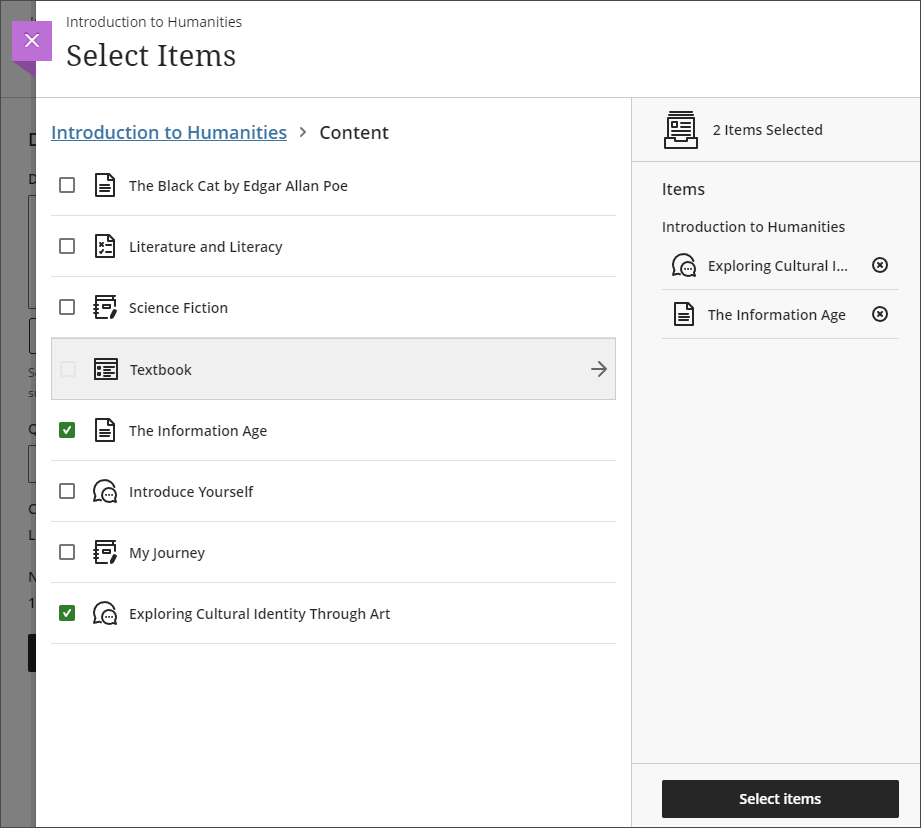

AI Design Assistant: Select course items/context picker enhancements

Following last month’s upgrade which introduced the context picker (the ‘Select course items’ tool) for auto-generated test questions, April’s upgrade introduces the option to select course items when auto-generating learning modules, assignments, and discussion and journal prompts.

The purpose of the ‘Select course items’ tool is to allow staff to specify exactly which resources should be used when auto-generating content. If ‘Select course items’ is used, the auto-generated content will be based only upon the items selected. Where no course items are selected, auto-generated content will be based upon the course title.

You can find out more about the AI Design Assistant and how to use it at: Learning Technology Team: AI Design Assistant

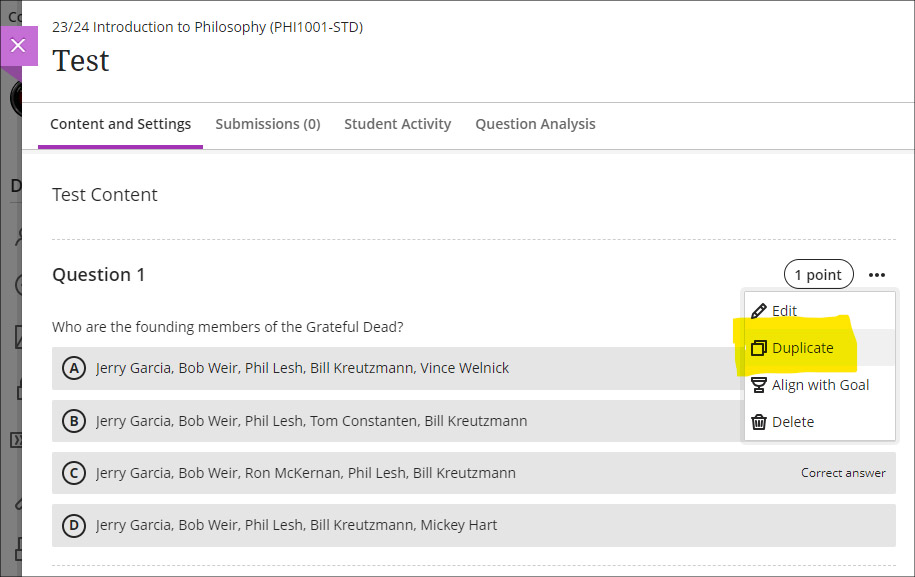

Duplicate test/form question option, plus change to default test question value

The April upgrade introduces the ability for staff to duplicate test and form questions. Additionally, following the upgrade the default point value for newly created test questions will be changed from 10 points to 1 point.

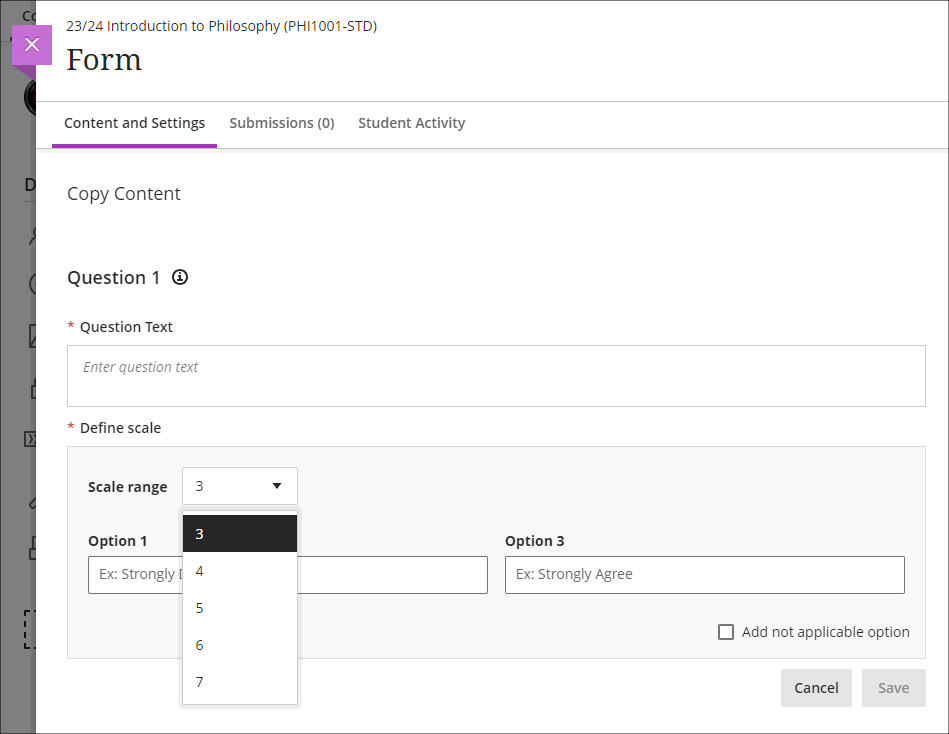

Likert form questions include options for 4 and 6, as well as 3, 5, and 7

The February 2024 upgrade introduced the ‘Forms’ tool to Ultra courses. One of the question types available in forms is a Likert question; however, the original release only included options for staff to select Likert scales with 3, 5, or 7 points. April’s upgrade will add options to choose scales with 4 or 6 points.

More information

As ever, please get in touch with your learning technologist if you would like any more information about the new features available in this month’s upgrade: Who is my learning technologist?

In 2023 the University appointed its first student Digital Skills Ambassador (DSA), the purpose of the role being to allow students to get digital skills support from other students. While it’s often assumed that most people are now confident and competent users of digital systems, especially young people (the so-called ‘digital natives’), the reality is that some students come to university without the basic digital skills they need to flourish on their courses. The University of Northampton is rightly proud of the excellent digital facilities that support teaching and learning here but, mindful of the pernicious effects that the digital divide can have in education, it chose to create the student DSA role so that no student is left in the digital darkness. To understand a little more about what it means to be a DSA, we interviewed the current incumbent, Faith Kiragu, and asked them to explain in their own words how the role works.

1. Can you tell me a little about you and your role? How does the support work?

“As the Digital Skills Ambassador, my role primarily revolves around providing support and guidance to fellow students on various aspects of digital skills, with a focus on Microsoft Office Packages, NILE (Northampton Integrated Learning Environment), the student Hub, LinkedIn Learning, and related queries.

Students can seek my help by booking appointments through the Learning Technology platform. Upon visiting the platform, they fill out a form detailing their query briefly. After submission, they receive a confirmation email containing the details of their appointment. Additionally, to ensure they do not miss their session, students receive reminders a day before their scheduled appointment time. During the session, I address their queries, provide guidance, and offer practical assistance to help them navigate through any challenges they may encounter with digital tools and platforms. My aim is to empower students with the necessary digital skills to enhance their academic journey and future career prospects.”

2. What are the common support requests and how do you support these?

“The most common support requests I receive are related to navigating NILE, submitting assignments, accessing online classes on Collaborate, and Microsoft PowerPoint tasks like adding images and textboxes.

To support these requests, I provide personalized guidance during the one-on-one appointments. I offer step-by-step demonstrations, share relevant resources such as LinkedIn Learning, and address specific queries to ensure students feel confident in handling these on their own. Additionally, I offer troubleshooting assistance and encourage students to practice these skills independently to enhance their proficiency over time.”

3. Is the support used by students across all courses, or some areas more than others?

“Yes, I have noticed that more students from health-related courses seek digital skills support compared to other courses, with Public Health being the course from which I have encountered the most students. Students from the Business and Law Faculty come a close second.”

4. Do you have any (anonymous) examples of how you have helped students with their problems?

“A student asked for help with accessing their online classes on Collaborate via NILE. During our appointment, I guided them through the process of navigating to the correct module on NILE, locating the scheduled Collaborate session, and joining the virtual classroom. By the end of the session, the student could successfully participate in their online class without further difficulties.

Another student sought help creating a presentation on Microsoft PowerPoint, specifically needing guidance on how to add images and textboxes effectively. I provided a step-by-step demonstration of inserting images into slides, resizing and positioning them, and formatting textboxes for adding content and captions. Additionally, I shared tips on utilising PowerPoint’s features for enhancing visual appeal and maintaining a cohesive layout throughout the presentation. I also supported the student in accessing LinkedIn Learning, and the student left the session equipped with the skills and confidence to complete their assignment using PowerPoint effectively.”

5. What do you think are the main benefits to students who have received support?

“The support I offer to students entails providing guidance and assistance with various digital tools and platforms, including NILE, Microsoft PowerPoint, and Collaborate. Through personalized appointments, students receive practical help in navigating these systems. This support not only enhances their digital skills but also boosts their confidence in engaging with coursework effectively. As a result, students experience improved academic performance and save valuable time by overcoming challenges efficiently. Furthermore, the support empowers students to take ownership of their learning journey, fostering independence and lifelong learning skills. Overall, the support provided equips students with the necessary resources and confidence to succeed academically in today’s digital-centric educational landscape.”

6. What have you learnt from your time in the role?

“In my role as the Student Digital Skills Ambassador, I have learned invaluable lessons that have enriched both my technical and interpersonal skills. Effective communication has been paramount as I translate complex technical information into accessible guidance for students with varying levels of digital literacy. Adaptability has been key as I tailor support to accommodate diverse learning styles and preferences. Through addressing queries, I have honed my problem-solving abilities while cultivating patience and empathy for students’ individual challenges. Additionally, this role has emphasized the importance of continuous learning, prompting me to stay updated on emerging technologies and digital trends. Overall, my experience has deepened my understanding of digital tools and platforms while enhancing my ability to support others in their learning journey, fostering a collaborative and empowering environment for student success.”

The new features in Blackboard’s March upgrade will be available on Friday 8th March. This month’s upgrade includes the following new/improved features to Ultra courses:

- AI Design Assistant – Context picker for test question auto-generation

- ‘No due date’ option for Blackboard assignments, tests, and forms

- Gradebook item statistics

AI Design Assistant – Context picker for test question auto-generation

Following the March upgrade, when using the auto-generate question tool in Blackboard tests, staff will be able to use the new ‘Select course items’ option to specify exactly which resources the AI Design Assistant auto-generate tool should use when generating questions. Prior to this, any auto-generated questions would be based on the course title (i.e., the module name).

The ‘Select course items’ option may be especially useful for staff wanting to create multiple tests, each based on one or more specific content items in the course, or for staff wanting to create a longer test built up from multiple auto-generated questions from different sections of the course, thus ensuring that the test represents questions testing students’ knowledge from across the entire course.

More information about the AI Design Assistant is available from: Learning Technology Team – AI Design Assistant

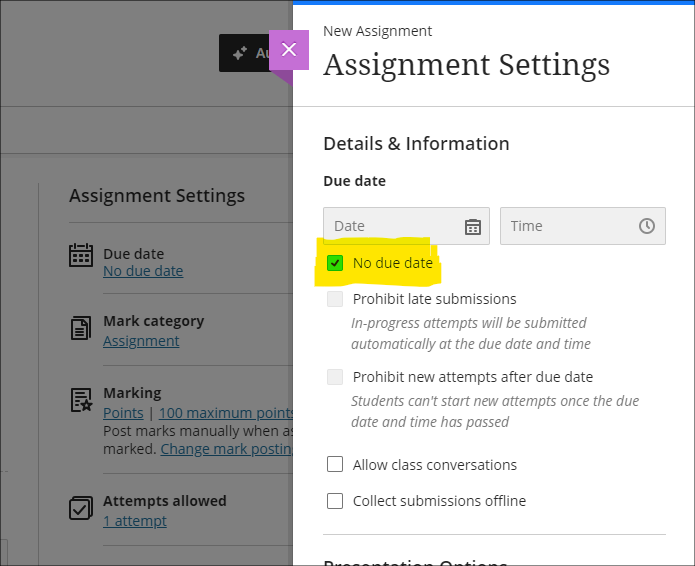

‘No due date’ option for Blackboard assignments, tests, and forms

After the March upgrade, staff will no longer have to specify a due date when setting up a Blackboard assignment, test, or form. Please note that this change does not affect Turnitin assignments, which will continue to require a due date to be specified.

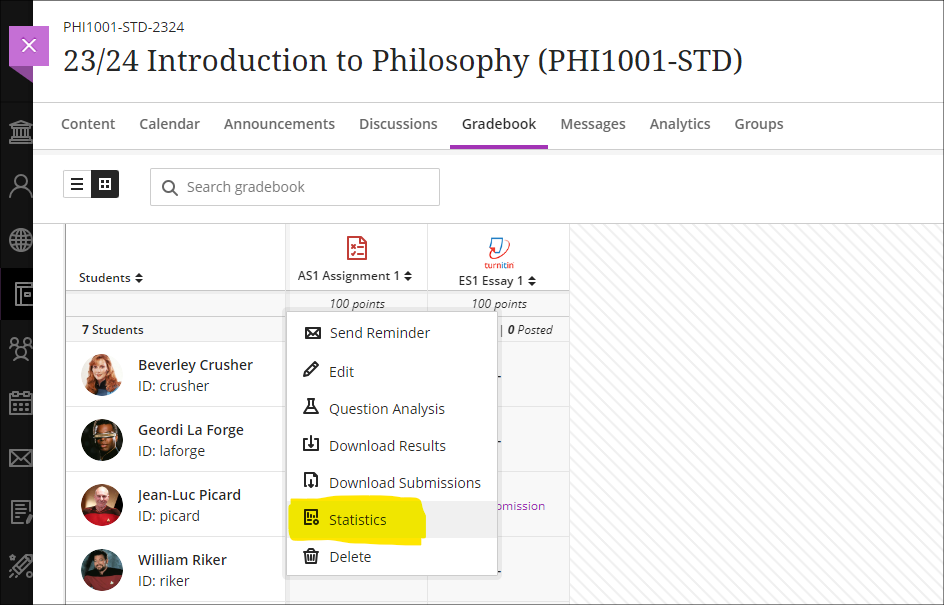

Gradebook item statistics

The March upgrade provides staff with the option to select a column in the Ultra gradebook and access summary statistics for any graded item. The statistics page displays key metrics including:

- Minimum and maximum value;

- Range;

- Average;

- Median;

- Standard deviation;

- Variance.

The number of submissions requiring grading and the distribution of grades are also displayed.
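
For anyone who would like to see how the metrics listed above relate to one another, here is a minimal Python sketch that calculates them for a small set of marks. It is an illustration only and not how Blackboard itself works them out: the function name `summarise_grades` and the sample marks are made up for this example, and because this post does not say whether Blackboard uses the population or the sample formula for standard deviation and variance, the sketch assumes the population versions.

```python
import statistics


def summarise_grades(grades):
    """Return the summary metrics listed above for a list of numeric grades."""
    lowest = min(grades)
    highest = max(grades)
    return {
        "minimum": lowest,
        "maximum": highest,
        "range": highest - lowest,
        "average": statistics.mean(grades),
        "median": statistics.median(grades),
        # Assumption: population formulas (pstdev/pvariance). Swap in
        # statistics.stdev and statistics.variance for the sample versions.
        "standard_deviation": statistics.pstdev(grades),
        "variance": statistics.pvariance(grades),
    }


# Hypothetical marks for one graded item (not real student data).
sample_grades = [40, 50, 60, 70, 80]
summary = summarise_grades(sample_grades)

for metric, value in summary.items():
    print(f"{metric}: {value}")
```

Note that the standard deviation is simply the square root of the variance, so the two figures always move together; the range, by contrast, depends only on the highest and lowest marks.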

More information

As ever, please get in touch with your learning technologist if you would like any more information about the new features available in this month’s upgrade: Who is my learning technologist?
