Breaking Boundaries: Engaging Students with Clear and Structured Course Design

This summer, Kiran Kaur, a lecturer in the Faculty of Business and Law, was recognised with a NILE Ultra Course Award for her exceptional work on BUS2900: Research, Trends, and Professional Directions, which she developed during the 2023-24 academic year. The NILE Ultra Awards celebrate excellence in course design, recognising modules that demonstrate high standards of structure, accessibility, and student engagement.

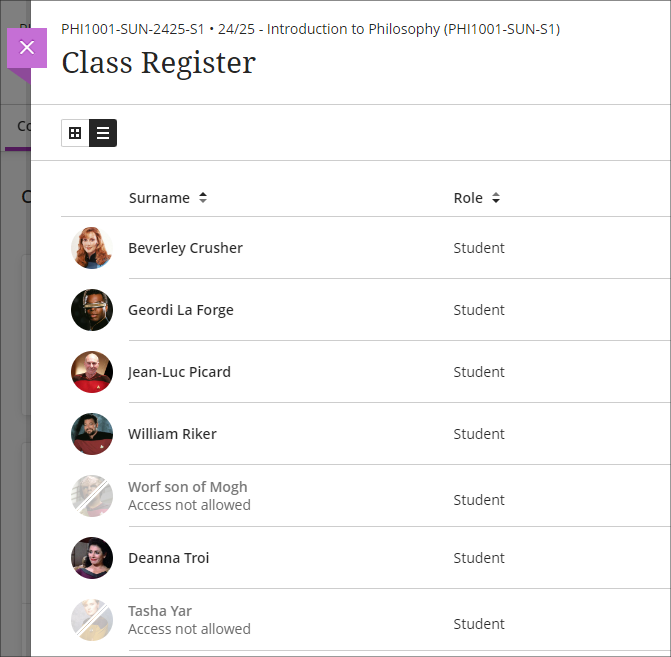

Kiran’s success with the 2023-24 module laid the foundation for her work this year when she took on a new module at short notice. She applied the same principles she used in her award-winning course—prioritising structure, clarity, and accessibility—and incorporated innovative tools like Padlet and emojis. These changes have had a powerful impact on student engagement, with the students in this new 2024-25 module providing glowing feedback. One student even described it as “the best module ever on NILE,” noting how easy it was to navigate and access resources.

Innovative Use of Emojis and Padlet for Engagement

A standout feature of Kiran’s teaching approach is her creative use of emojis to enhance course content. “I used emojis to help break up the text and make the material feel a little more fun and approachable for students. It’s a simple touch, but it got great feedback,” Kiran explained. This added a layer of visual clarity that students enjoyed.

In addition, Kiran made extensive use of Padlet, a collaborative tool that students used throughout the module to share ideas and engage with each other. “Padlet really worked to get students engaging with each other. It’s not just a tool for posting comments; it’s a space where students can collaborate in real-time, share their thoughts, and build a sense of belonging,” Kiran noted. Padlet’s role in the module went beyond traditional discussion boards, encouraging real-time collaboration and making students feel more connected despite being in an online environment. This interactivity was a key factor in building student engagement.

Sharing Best Practice and Building Consistency

Kiran plans to share her approach at an upcoming subject staff development day. She is eager to promote consistency across her programme, ensuring that students enjoy a seamless and cohesive learning experience throughout their three years of study. “It’s about fostering a culture where we share what’s working well, so that students benefit across the board,” Kiran explained.

Reflecting on her own experience, she encourages her colleagues to consider nominating themselves or each other for future NILE Ultra Course Awards. She credits her own nomination to a colleague’s encouragement and is now keen to inspire others to recognise the impact of their own teaching practices. “I wouldn’t have even thought to put myself forward if a colleague hadn’t mentioned the award. Now, I want to help others see the value in recognising their own achievements,” Kiran added.

Looking Forward

Kiran continues to apply her proven strategies to her new modules, maintaining her commitment to clear, structured design and student engagement. Her success demonstrates how thoughtful course development and the use of innovative digital tools can greatly enhance the student experience. “For me, it’s about making sure that the students have the best possible experience. If they’re engaged and able to access the materials easily, they’ll get more out of the course,” she said.

Kiran’s story serves as an inspiration to her colleagues at the University of Northampton, illustrating how collaboration, innovation, and sharing best practices can lead to great results. Congratulations again to Kiran Kaur for her NILE Ultra Course Award, and we look forward to seeing her continued success!

NILE Ultra Course Awards 2025

Keep an eye out in the new year for the 2025 NILE Ultra Course Awards.

Last week, we highlighted Fix Your Content Day, where staff were encouraged to take small steps towards improving the accessibility of their module sites. Today, we’re excited to share a success story from Deborah Gardner, a Lecturer in Business Management, who took part in the initiative and saw significant improvements in her module’s accessibility.

When Deborah checked her module site’s accessibility report, she found it at 88%. While this was a decent score, she knew there was room for improvement. “I went through the steps the report suggested,” she explained, starting with adding alternative text to diagrams in her PowerPoint slides and correcting low-visibility text. These changes quickly raised her score to 99%, and with a bit more effort, she soon reached the perfect 100%. “Some of the corrections were a little time-consuming, but the effort paid off when my overall score hit 100%,” she shared.

Key Learnings

Deborah’s experience highlighted a few valuable takeaways:

Custom ALT Text: One of Deborah’s recommendations is to write your own ALT text descriptions for images, rather than relying on automatic suggestions. “When I asked to use the suggested [ALT text], it didn’t really describe the image that well,” she noted. Crafting accurate and meaningful descriptions ensures that students using screen readers fully understand visual content.

Tackling PDFs: For Deborah, the most time-consuming task was correcting PDFs, but the improvements were well worth the time. Ensuring all content is accessible, even documents, can have a huge impact on student inclusion.

A Habit of Accessibility

Deborah encourages her colleagues to get into the habit of reviewing their accessibility reports regularly: “The first step is to check your report. Quite often, it will just involve a few tweaks, so it won’t be too demanding on your time. Get into the habit of checking it once a week to ensure your site remains accessible.”

She also suggests incorporating accessible practices from the very beginning: “It helps to get into the habit of providing descriptions for any images in your content right from the start—they’re less likely to flag up in the report that way.”

Next steps

While Deborah has seen firsthand the benefits of improving her module’s accessibility, she hopes to work with colleagues across the programme to adopt similar practices. By sharing her experience and success, she would like to help others to do the same, creating a more inclusive learning environment for all students.

Accessibility doesn’t have to be overwhelming, and as Deborah’s story shows, even a few small steps can make a big difference. For more tips and support, check out our original blog post here and don’t hesitate to reach out to your Learning Technologist for guidance.

The new features in Blackboard’s October upgrade are available from Friday 4th October. This month’s upgrade includes the following new/improved features to Ultra courses:

- Students who have completed their studies no longer hidden in NILE courses

- Email notifications for followed discussions

- Auto-generate test questions in question banks

- End of life for guest access to NILE, including welcome courses and organisations, and removal of old Original courses and organisations (from 31st December)

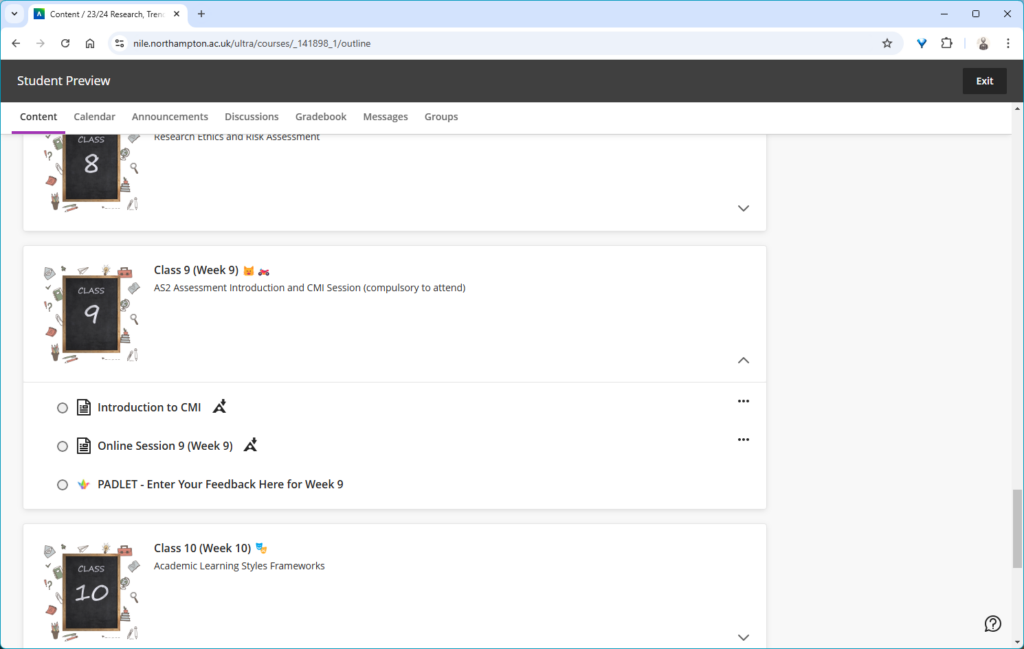

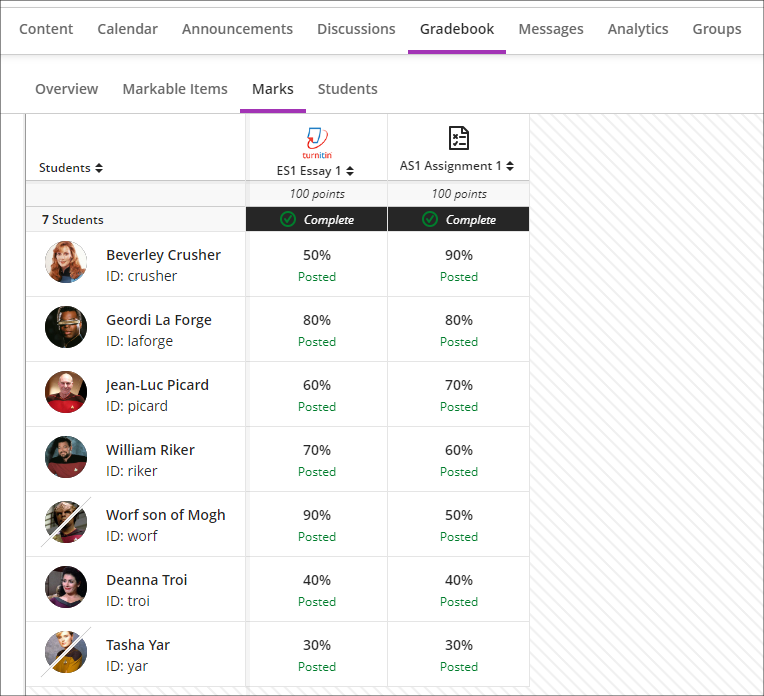

Students who have completed their studies no longer hidden in NILE courses

Students’ NILE accounts are automatically made unavailable approximately one month after their status in the SRS (Student Records System) is updated from ‘Enrolled’ to ‘Award’. Until now, this has hidden these students in their NILE courses, making it difficult for staff to view the assignments, grades and feedback of students who have completed their studies. Following the October upgrade this will no longer happen. Instead of disappearing from the gradebook and the class register, students whose NILE accounts have been made unavailable will remain in their NILE courses, with their accounts marked with a strikethrough. Due to the way that Turnitin functions, unavailable students and their submissions will not be visible in the Turnitin submission inbox; however, the grades of unavailable students’ Turnitin assignments will be visible in the gradebook. Unavailable students’ Blackboard assignments, tests, journals and discussions will be fully visible in NILE.

Please note that the above change to NILE courses only applies to students who have completed their studies and whose NILE accounts have been made unavailable. It will continue to be the case that students who have been withdrawn from a module or who have transferred off a module will not appear in those particular NILE courses.

Email notifications for followed discussions

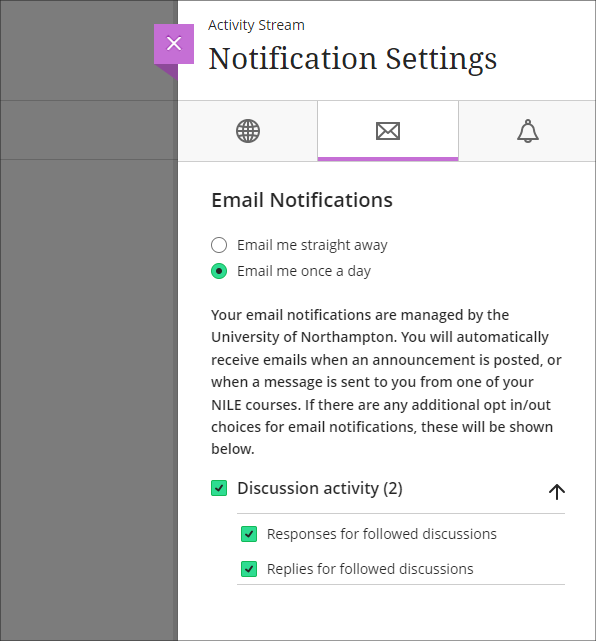

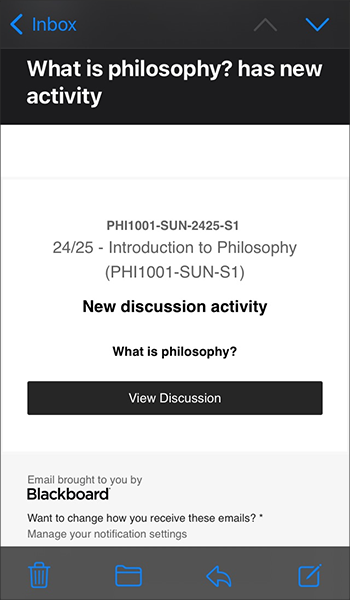

Following on from the August 2024 release, in which a ‘Follow discussion’ option was introduced to Ultra discussions, the October 2024 release will allow staff and students to optionally receive email notifications for followed discussions.

Staff and students who opt to follow discussions will continue to receive notifications in the activity stream when there are responses and replies to the discussions they are following. They will also be able to receive email notifications for these responses and replies, which can be configured in the email notifications section of the activity stream’s notification settings. Emails for followed discussions will be on by default, and the default frequency is ‘Email me once a day’.

Please note that the emails received for followed discussions will not contain the actual content of the discussion response or reply, but will only state that a response or reply has been made to a followed discussion, and will include a link to the discussion.

More information about discussions in Ultra courses is available from: Blackboard Help – Discussions

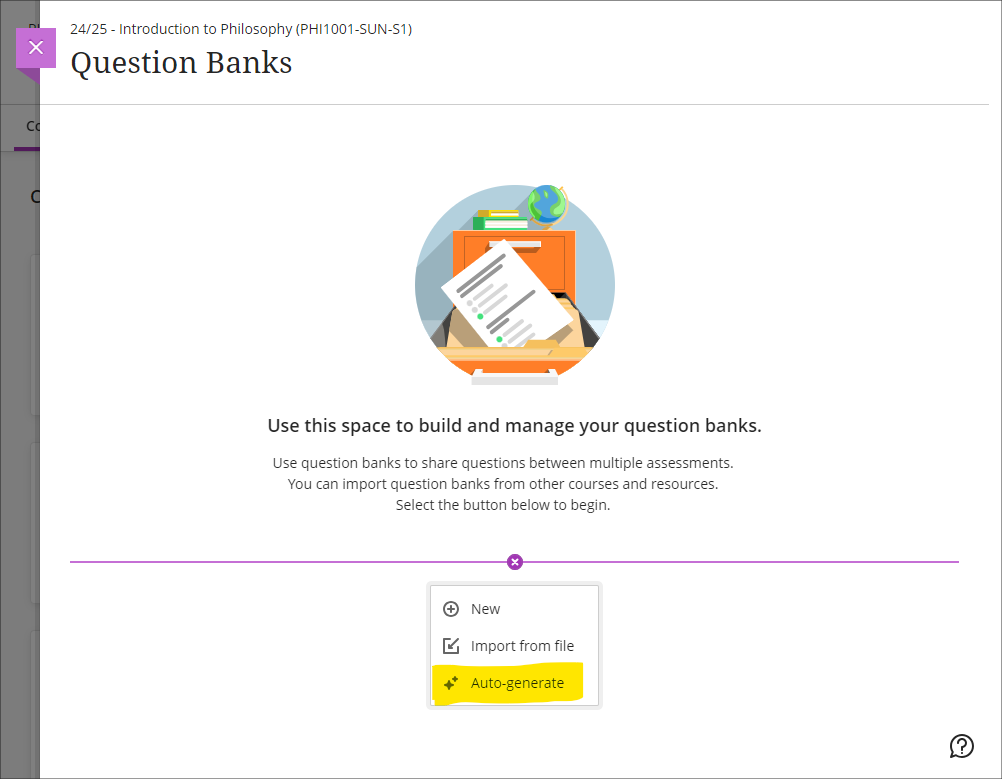

Auto-generate test questions in question banks

Following October’s upgrade, staff will be able to use the AI Design Assistant to auto-generate test questions in question banks. The question banks tool is available from the ‘Details & Actions’ menu in the content area of Ultra courses. Using question banks makes it much easier to reuse test questions in different tests, or to create a large pool of test questions which can be reused in tests which pick a random sample of test questions.

More information about using AI Design Assistant is available from: Learning Technology Team – AI Design Assist

More information about using question banks in Blackboard tests is available from: Blackboard Help – Question Banks

End of life for guest access to NILE, including welcome courses and organisations, and removal of old Original courses and organisations (from 31st December)

As previously announced in our Blackboard Upgrade – July 2024 post, in order to implement necessary security measures, from the 1st of January 2025 guest access to NILE will no longer be possible. This means that only logged-in users will be able to access NILE. Guest access to Ultra courses has never been possible. However, some old Original courses, including welcome courses, may still be available via guest access, and the information they contain may need to be relocated.

Additionally, please note that while most NILE courses are regularly archived and removed from NILE in accordance with the NILE Archiving and Retention Policy, some old Original courses and organisations remain on the system and will continue to be removed from NILE on a rolling ten-year basis. Currently, all Original courses and organisations created before 01/01/2014 are no longer available on NILE, and courses created before 01/01/2015 will no longer be available from 1st January 2025.

Staff who are concerned that they may be affected by either of these matters are encouraged to contact Robert Farmer, the Learning Technology Manager, to discuss their requirements. Where information needs to be available to people who do not have a NILE login, it will be necessary to use another platform to provide this. However, where using NILE is still the best option, we will be happy to provide a new Ultra course or organisation to replace the old Original one.
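
As a rough illustration of the rolling ten-year rule described above, the Python sketch below works out the cutoff date for any given day. It assumes the cutoff moves forward on 1st January each year, which is inferred from the two dates quoted in this post rather than taken from the NILE Archiving and Retention Policy itself. The function names are hypothetical.

```python
from datetime import date


def removal_cutoff(today: date) -> date:
    """Return the assumed cutoff: Original courses created before this date are removed.

    Assumption: the cutoff advances by one year every 1st January.
    """
    return date(today.year - 10, 1, 1)


def is_removed(created: date, today: date) -> bool:
    """True if a course created on `created` would no longer be available on `today`."""
    return created < removal_cutoff(today)
```

For example, `removal_cutoff(date(2024, 10, 4))` gives 1st January 2014, and `removal_cutoff(date(2025, 1, 1))` gives 1st January 2015, matching the dates above.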

More information

As ever, please get in touch with your learning technologist if you would like any more information about the new features available in this month’s upgrade: Who is my learning technologist?

At the University of Northampton, we’re committed to creating an inclusive learning environment for all students. As part of this commitment, we’re happy to celebrate the Anthology Fix Your Content Day on Thursday, October 3rd, 2024. This 24-hour event is designed to create more accessible and inclusive digital learning content using Blackboard Ally. So, why not join us in making NILE more accessible?

How do I get involved?

If you’d like to take part, simply email your Learning Technologist with the code of your NILE module. On the morning of October 3rd, email them a screenshot of your current Ally Accessibility score, then spend the day making improvements to your module content. By 8 PM, submit another screenshot of your final Ally score and you might just get a special mention in Unify, along with the satisfaction of knowing you’ve created a more accessible learning environment for your students.

In previous years, tutors like Jean Edwards and Charlotte Dann embraced this challenge and made fantastic progress using the Ally module accessibility reports to guide their changes. Their work helped create more inclusive NILE modules, making a real difference for their students.

What do I need to do on October 3rd?

Here’s a guide to getting involved in the Anthology Fix Your Content Day 2024:

- Use this guide to find your Module-Level Ally score and identify areas for improvement.

- Use Blackboard Ally to assess the accessibility of your materials. Ally provides feedback and suggests improvements, helping you prioritise changes that will have the greatest impact.

- Review Your Documents: Ensure that your PDFs, Word documents, and presentations use clear headings and tags that can be easily navigated by screen readers.

- Add Alt Text to Images: Include descriptive alt text for any images in your materials. This ensures that students using screen readers can engage with the visual content.

- Check your Captions: Accurate captions support not just students with hearing impairments, but also those studying in noisy environments or those who are non-native English speakers.

We hope you’ll join us on October 3rd and make NILE more accessible. However, even if you can’t engage on the day, consider trying something new this semester – whether that’s adding clearer captions, using shorter filenames, or creating an Ultra document. Even small improvements can significantly transform the learning experience for all students.

Contact your Learning Technologist if you have any questions.

Claire Massie, a Careers and Employability Officer at the University of Northampton, creates online video tutorial guides for students. She was trained to produce video demonstrations by Anne Misselbrook, the E-Learning/Multimedia Resources Developer.

What seemed unachievable for Claire became achievable.

Anne received complimentary feedback from Claire, who sent an email to Rob Howe, Head of Learning Technology at the University. Here’s an extract of that feedback.

“I just wanted to say a massive thank you to Anne Misselbrook for what she has done for me over the past couple of months. She has leapt in quickly to provide one-to-one (bespoke) training for both H5P and video editing. She has been instrumental in getting me up to speed with tech that I previously knew nothing about, which in turn has enabled me to participate in two very interesting projects going on in Student Futures to put things in place for students at the start of the new academic year.

I have to say how much I enjoyed her delivery, how kind she was whilst I was practising, and how fantastic her post-delivery resources were.” Claire Massie

Claire was introduced to Anne by Lisa Anderson, the Library Services Manager.

Lisa had previously attended virtual training sessions with Anne on how to record and edit video using the tools available, and on H5P (HTML5 Package), a University-supported software application used to create interactive content.

Lisa says:

“The tools we have at UON are great for the job of interactive content and video creation, and it’s helpful to have someone like Anne to get you started and encourage you along the way. We’ve created many instructional videos now thanks to these sessions, and have now started creating interactive content too.”

Online Library Quest is available now for students.

Welcome new students – a new student page with video content

Training

Many staff have attended virtual training with Anne to upskill themselves on how to produce video content using tools available and create interactive online content using the software applications H5P and Xerte.

Students can benefit from active online resources as they interact with the content.

Staff can book a virtual training session on these tools through the LibCal online staff development booking system.

In today’s digital age, many students face the challenge of mastering unfamiliar digital skills, especially when it comes to submitting assessments online. Providing students with opportunities to practice these tasks not only helps them build confidence but also reduces the stress associated with last-minute technical issues. Hands-on demonstrations and early exposure to digital platforms are essential to ensuring that students can navigate these tools with ease, ultimately enhancing their academic performance.

During a recent committee meeting, Hafiz, the Students’ Union VP Education, shared how his tutor, Alfred Akakpo, a Senior Lecturer in Business Analytics, had excelled in supporting students through the digital assessment process. His feedback illustrates how effective tutor engagement and practical demonstrations can positively impact students’ experiences with online submissions. As a representative of the student body, Hafiz provided valuable insight into how this approach significantly boosted his peers’ confidence in using digital tools for their assessments.

Hafiz explained, “Alfred taught us how to use Kaltura Capture to record and upload videos. If I’m not mistaken, he also provided a demo link that allowed us to practice uploading. This was incredibly helpful for us to understand how the app works beforehand.”

From Hafiz’s perspective, here are a few key strategies that tutors can adopt to better support students:

- Tutors should demonstrate how to submit an assessment.

- Clearly communicate the importance of deadlines to students.

- Provide students with opportunities to practice the submission process during class.

- Share a link to guidance that students can follow at their own pace.

- Ensure that this guidance is directly associated with the assessment brief.

Early Communication and Demonstration

Early communication plays a crucial role in reducing stress and anxiety among students. As Hafiz highlighted, “Early communications help a lot. If tutors demonstrate how to submit assessments and communicate the importance of early submission, it helps students reduce their mental pressure.” By proactively showing students how to use these platforms, educators can give them time to familiarise themselves with the tools, which in turn builds their confidence.

Timing of Support Sessions

Hafiz also suggested moving support sessions to around three weeks before the deadline. “Personally, I think if we can move that to around three weeks, it might help and motivate students to start the assessment early,” he proposed. This shift could encourage students to organise their time better and reduce the common last-minute rush, providing more space to focus on the quality of their work.

Creating Demo Videos

In addition to live demonstrations, Alfred provided students with video tutorials that guided them through the submission process. This approach allowed students to practice at their own pace. “The demo link left by Alfred gave us the chance to try beforehand and understand how the app works,” Hafiz remarked. Such resources can significantly enhance students’ comfort with digital tools, especially when included with assessment briefs or accessible via the course platform.

Encouraging Staff to Follow Suit

Alfred’s success in supporting students serves as a model for other staff looking to improve their students’ digital skills. By offering practical, hands-on guidance, tutors can reduce student anxiety and ensure that technical issues do not become barriers to academic success. Hafiz’s feedback highlights how impactful these interventions can be.

Supporting Data and Institutional Strategies

This approach aligns with broader institutional strategies, such as those outlined in the University of Northampton’s Access and Participation Plan. The plan underscores the importance of tailored support to enhance student engagement and success, particularly for those from underrepresented backgrounds. This strategic focus highlights the need for proactive and practical training to address digital skills gaps and ensure equitable access to educational opportunities.

Conclusion

By incorporating strategies like early communication, timely support sessions, and demo videos, tutors can significantly improve students’ ability to handle digital assessments. Hafiz’s experience as a student leader demonstrates the benefits of proactive support from staff like Alfred. This approach not only eases student stress but also equips them with valuable skills for the future. Educators are encouraged to follow Alfred’s example and explore similar methods to ensure their students feel supported and empowered in the digital landscape.

If you are a member of teaching staff and you would like training and support in offering this level of support to your students, get in touch with your Learning Technologist to arrange a 1:1. In my experience, the better you know how to use these digital tools, the more you can reduce student anxiety and ensure students achieve their best outcomes.

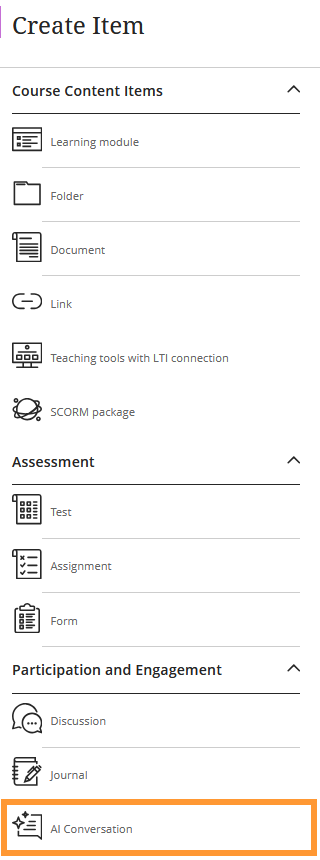

In addition to the updates included in the Blackboard Upgrade on 7th September 2024, the latest feature to reach Blackboard Ultra courses is the new AI Conversation activity.

This activity allows students to interact with an AI service to explore their own thoughts and ideas on a topic.

In Anthology’s words:

It’s tough to have 1:1 conversations with every student, especially in large courses. Some instructors are asking students to use AI services for topic-related activities to help. But, with many services and limited instructor visibility, results can vary.

To better serve instructors who want to use AI with students, we’re launching a new activity called AI Conversation. This is a Socratic questioning exercise guided by AI. AI Conversation lets students explore their thoughts on a topic.

https://help.blackboard.com/node/48711

The activity can be added to a course from the Create Item menu. Scroll to the bottom of the list to find it.

Full details on how to use the feature can be found on the Blackboard Help support site and an overview of the feature has been added to Learntech’s main AI Design Assistant page, which also includes links to the University’s position on Artificial Intelligence and an explanation of the benefits and limitations of the AI Design Assistant features in general.

As always, please contact your Learning Technologist if you would like further support and advice using this new feature: Who is my learning technologist?

At the University of Northampton, we aim to provide our academic staff and students with the best tools for teaching and learning. One such tool is Kaltura, our video and audio media platform which enables tutors and professional services staff to create engaging content as well as allowing students to create and submit video for assessment. But did you know that Kaltura now provides you with detailed insights into your content’s performance? Enter Kaltura My Content Analytics – a feature designed to help you understand how your videos are engaging your students.

What Can You Track?

Kaltura’s My Content Analytics provides you with a wealth of data at your fingertips. For example, you can track:

- Views and Engagement: See how many times your content has been viewed and by whom. This can give you a snapshot of how many students are engaging with the materials.

- Viewer Drop-off: Understand where viewers stop watching, helping you fine-tune your video length or key points.
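
To show the idea behind viewer drop-off, here is a minimal Python sketch that works out what percentage of viewers reached each quarter of a video. The function name and the list of stop points are made up for illustration. This is not Kaltura's API, and the analytics dashboard does this kind of calculation for you.

```python
def retention_by_quartile(stop_points, duration):
    """Percentage of viewers who reached 25%, 50%, 75% and 100% of a video.

    stop_points: seconds at which each viewer stopped watching (hypothetical data).
    duration: length of the video in seconds.
    """
    total = len(stop_points)
    result = {}
    for pct in (25, 50, 75, 100):
        threshold = duration * pct / 100
        # Count viewers whose stop point is at or beyond this quartile.
        reached = sum(1 for s in stop_points if s >= threshold)
        result[pct] = 100 * reached / total if total else 0.0
    return result
```

A sharp fall between two quartiles suggests where students are losing interest, which is a good place to look when deciding whether to shorten a video or move a key point earlier.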

Focus on Key Data Points

There’s a lot of information available in Kaltura’s analytics dashboard, which can feel overwhelming at first. If you’re a beginner, we’d recommend you focus on Top Videos – this section will show you which of your videos are getting the most views and how they’re performing. Once you’re comfortable with that, you can dive into deeper analytics.

Why Is This Important for Educators?

For educators at the University of Northampton, this data can be very useful. If you’re using video to deliver taught content or supplementary materials, knowing how students interact with that content can inform future teaching strategies. Are students dropping off before key explanations? Do certain areas require more emphasis? This feedback loop can directly impact student outcomes. Combine this insight with the new student engagement analytics in NILE and you have a lot of helpful data to better support your students.

How to Access My Content Analytics

- Go to mymedia.northampton.ac.uk.

- Log in with your university credentials.

- Select My Analytics from the drop-down menu.

From there, you can explore a range of metrics to optimise your teaching content. And if you want to learn more, schedule some training with your Learning Technologist, who can guide you through more advanced features.

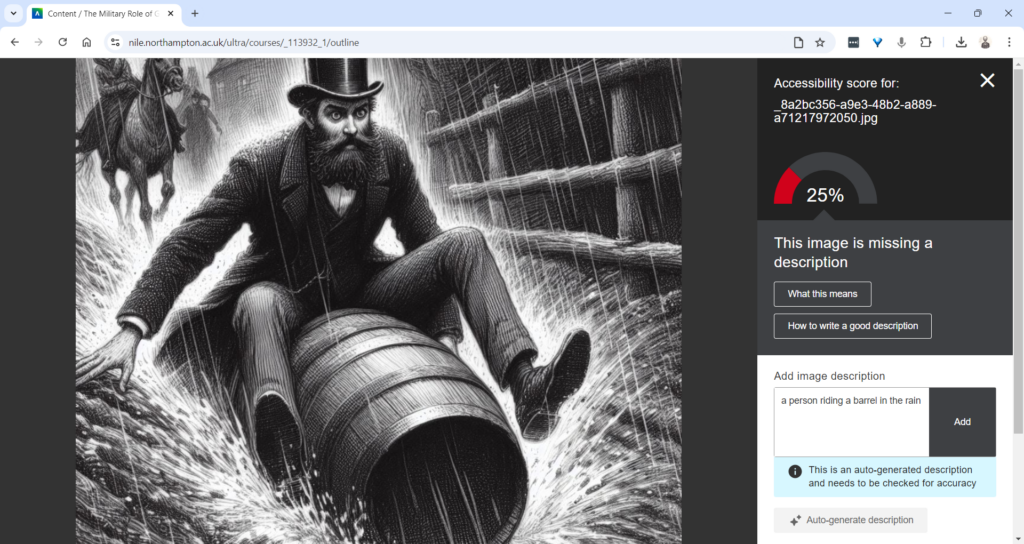

At the University of Northampton, we are committed to fostering an inclusive learning environment. That’s why we’ve introduced an AI-powered tool in Blackboard Ultra that automatically generates suggested alternative text (alt text) for images, giving instructors the support they need to write meaningful descriptions quickly and efficiently.

The Challenge of Creating Alt Text

Writing descriptive and meaningful alt text isn’t always straightforward. Many times, instructors need a bit of inspiration to find the right words. Enter Ally’s AI Alt Text Assistant—a tool designed to take the guesswork out of writing alt text by automatically generating suggestions. This not only saves time but also enhances the accessibility of learning materials for visually impaired students.

How Does the AI Alt Text Assistant Work?

Integrated directly into the Ally Instructor Feedback interface, the AI tool empowers instructors to address images without alt text more efficiently:

- Auto-Generate Description Button: When an image lacks a description, instructors can simply click the “Auto-Generate Description” button. The AI Alt Text Assistant will provide a concise and accurate suggestion based on the image’s content.

- Instructor Review and Control: Importantly, the AI does not automatically apply these suggestions. Each description requires instructor review, ensuring that the final text aligns with the course content and the image’s educational purpose. You can easily edit, refine, or remove the suggestions to suit your needs.

This combination of automation and instructor control helps ensure that the alt text meets both accessibility standards and the specific context of your course materials.

Key Features and Benefits

- Time-Saving Automation: With just one click, you can generate accurate alt text suggestions, saving time while ensuring your materials are accessible.

- Instructor-Centred: The AI Alt Text Assistant empowers instructors by providing helpful suggestions, but leaves the final decision in your hands, giving you full control over the descriptions.

- Seamless Integration: This feature works within the existing Ally Instructor Feedback workflow, making it easy to fix accessibility issues as you work through your content.

Why Is This Important?

Creating accessible content isn’t just about meeting legal standards—it’s about ensuring that every student can engage with your materials. By incorporating alt text for images, you’re helping students who use screen readers to fully participate in the learning experience. Ally’s AI tool simplifies this process, helping you make your course content more inclusive with minimal effort.

Start Using the AI Alt Text Assistant Today

The new AI Alt Text Assistant is available now in Blackboard Ultra. Whether you’re updating old materials or creating new ones, this tool will help you maintain accessibility standards while saving valuable time.

For more information or guidance on using this new feature, check out our Ally guidance and get in touch with your Learning Technologist if you have any questions.

By leveraging this tool, you contribute to making learning at the University of Northampton a more inclusive experience for all.

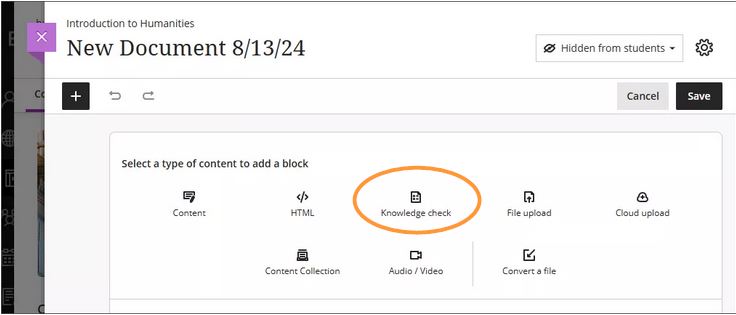

The new/improved features outlined below are available from Saturday 7th September 2024.

- Inline Knowledge Check questions within documents

- Course content page enhancements

- Gradebook overview improvements

- Enhanced Student Activity Log

- Wiris and math editor update

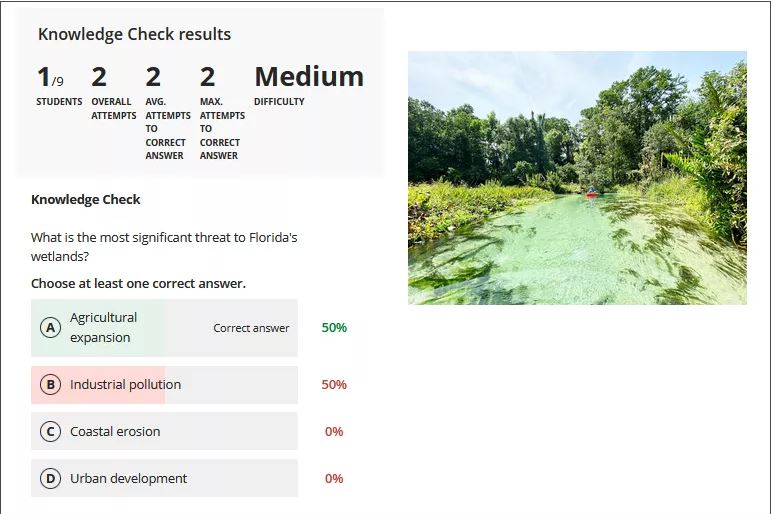

Inline Knowledge Check questions within documents

Building on last month’s new layout options within an Ultra document, instructors will now be able to add multiple choice and multiple answer questions directly to a document and include automated feedback for students.

Students receive immediate feedback on whether their answer is correct and can submit an unlimited number of attempts.

Instructors can keep an eye on student participation via detailed metrics, including:

- Number of students participating

- Total number of attempts

- Average number of attempts to reach the correct answer

- Maximum number of attempts to reach the correct answer

- Level of difficulty metric

- Percentage of students selecting each answer option
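
To make these metrics more concrete, the Python sketch below shows how some of them might be computed from raw attempt data. The data format and function name are hypothetical, and Blackboard's own calculations may differ. The level of difficulty metric is left out because its definition is not given here, and the percentage for each option is assumed to mean the share of participating students who chose that option at least once.

```python
from collections import defaultdict


def knowledge_check_summary(attempts, correct_option):
    """Summarise attempts on one question.

    attempts: list of (student_id, chosen_option) tuples in chronological order
              (a hypothetical format, not Blackboard's export).
    """
    tries_by_student = defaultdict(list)
    for student, option in attempts:
        tries_by_student[student].append(option)

    # Attempts each student needed to reach their first correct answer.
    attempts_to_correct = [
        options.index(correct_option) + 1
        for options in tries_by_student.values()
        if correct_option in options
    ]

    participants = len(tries_by_student)

    # Number of students who chose each option at least once.
    students_per_option = defaultdict(int)
    for options in tries_by_student.values():
        for option in set(options):
            students_per_option[option] += 1

    return {
        "participants": participants,
        "total_attempts": len(attempts),
        "avg_attempts_to_correct": (
            sum(attempts_to_correct) / len(attempts_to_correct)
            if attempts_to_correct
            else None
        ),
        "max_attempts_to_correct": max(attempts_to_correct, default=None),
        "percent_selecting": {
            option: 100 * count / participants
            for option, count in students_per_option.items()
        },
    }
```

If three students need two, one and three attempts respectively to reach the correct answer, the summary reports three participants, six attempts in total, an average of 2.0 attempts and a maximum of 3.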

More information about Knowledge Checks in Blackboard Learn can be found here: https://www.youtube.com/watch?v=LtuFUPaKLSw

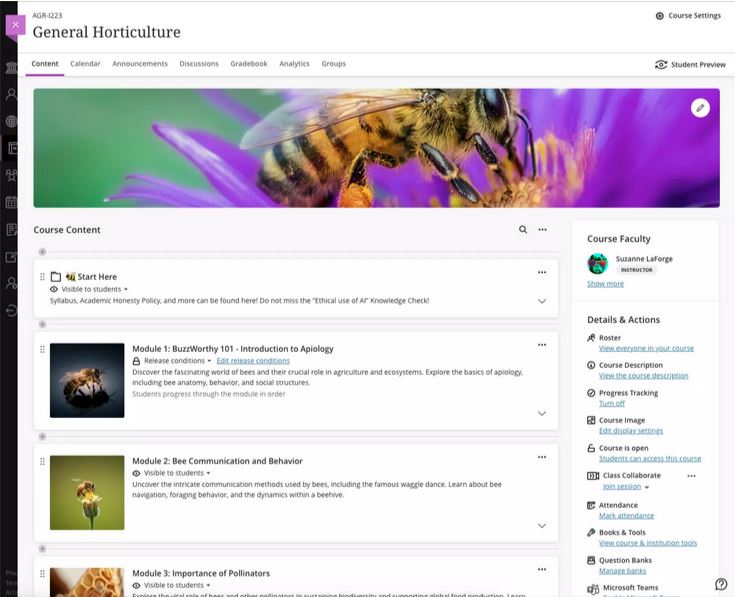

Course content page enhancements

There have been design changes to the elements, colours, and layout of the course content page. Most notably, the details and actions menu can now be found on the right-hand side when viewing on a large screen.

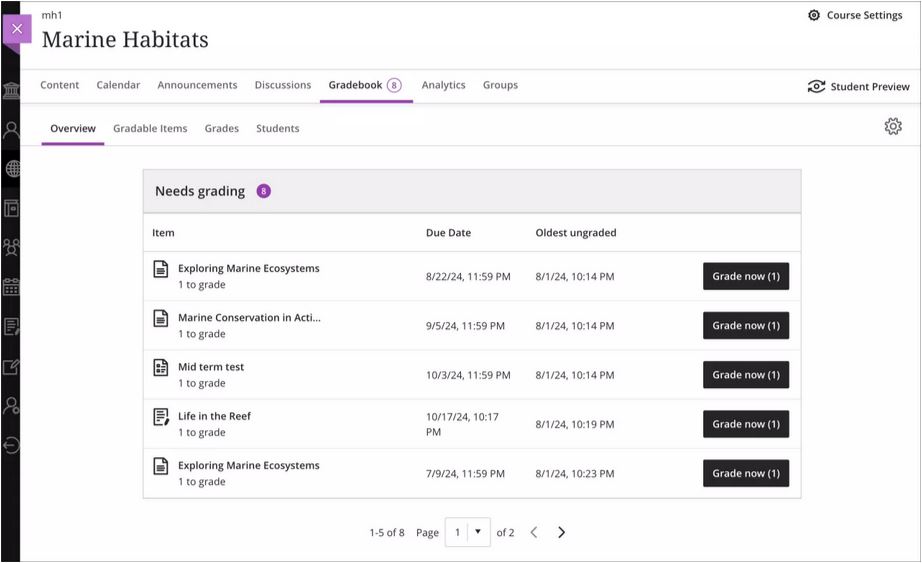

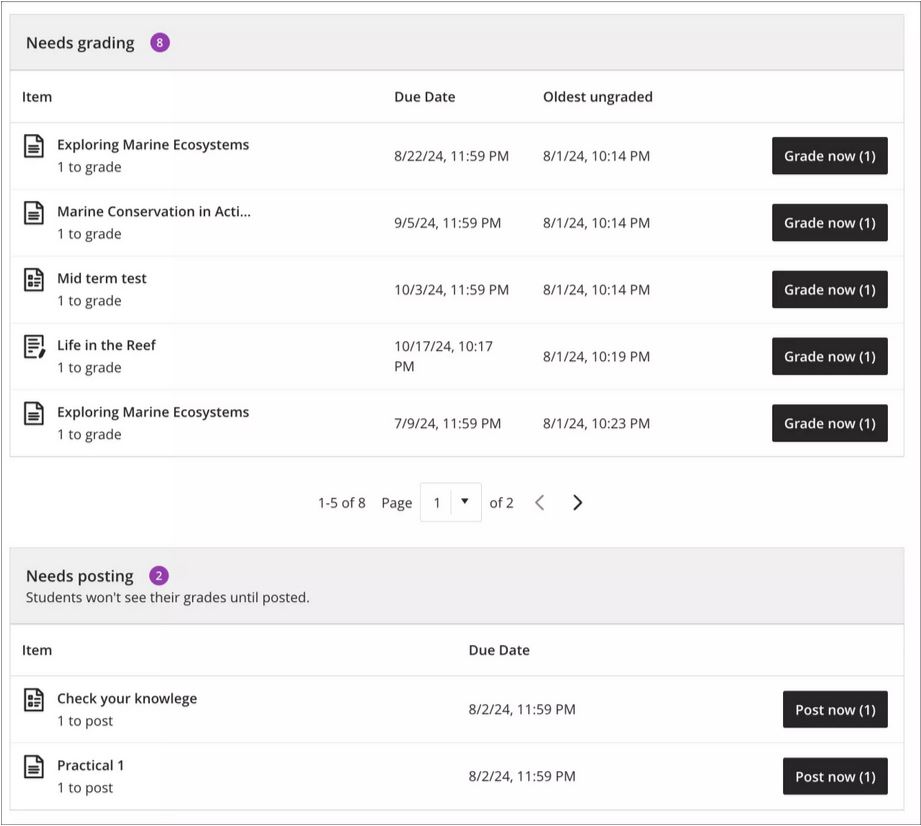

Gradebook overview improvements

A new indicator has been added that appears next to the gradebook heading in the course menu when there are new submissions available to grade. An overview page will now show a summary of those items which need grading or are yet to be posted.

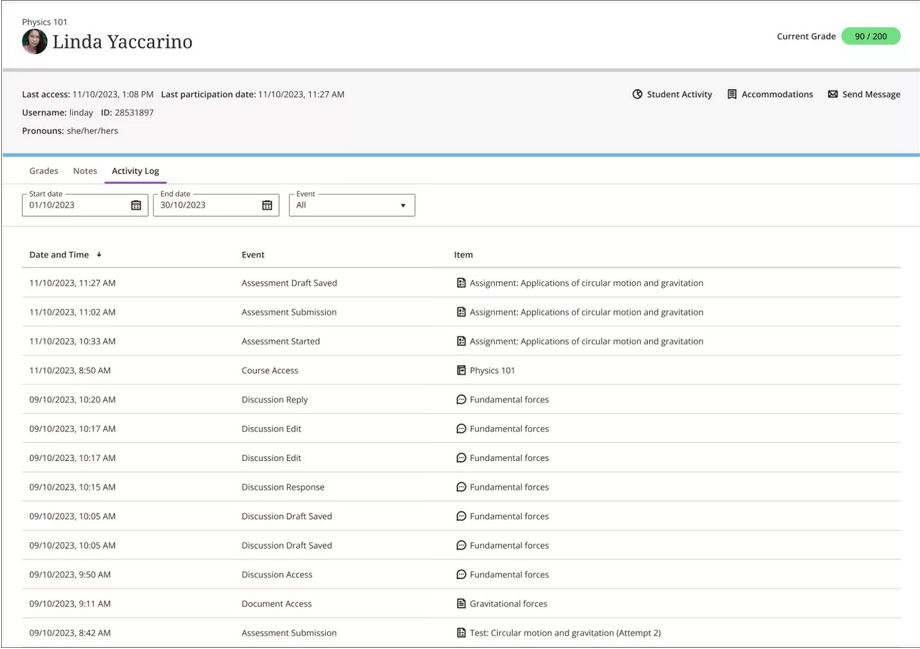

Enhanced Student Activity Log

Student activity has been upgraded to report on various interactions in great detail. Instructors will be able to view student actions within the course over the past 140 days. Any information older than that won’t be stored. The log can take up to 20 minutes to update from the last time a student performs an action.

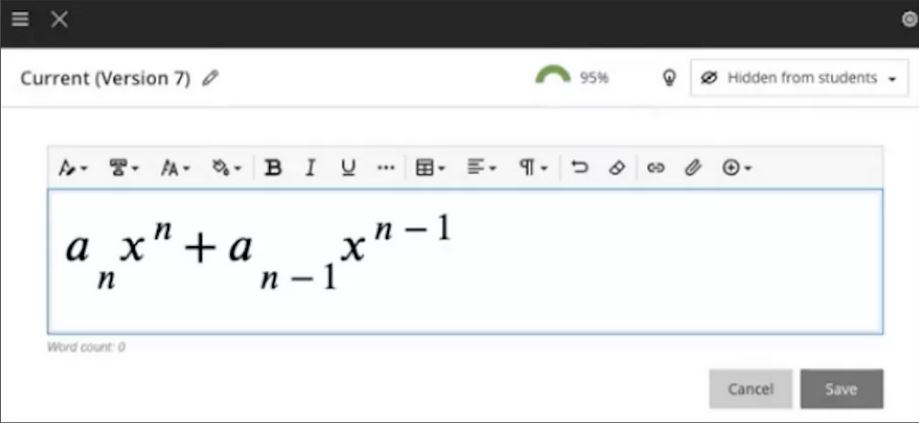

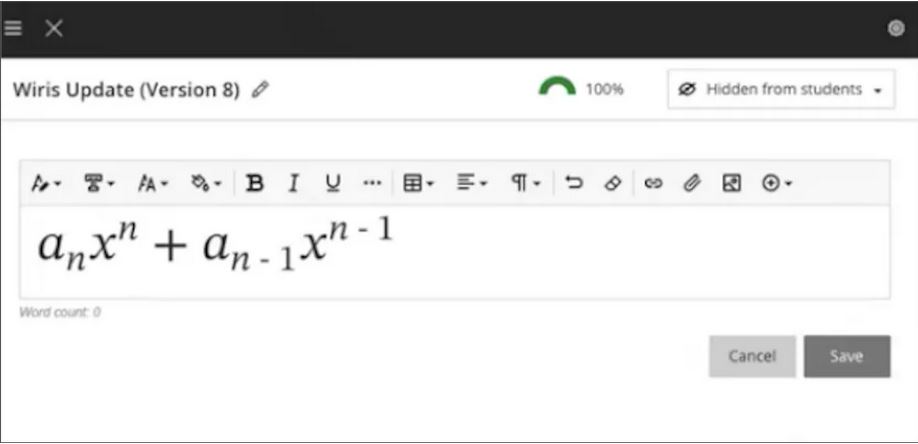
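
The 140-day window and the 20-minute update delay described above can be pictured with a short Python sketch. The function name and status labels are hypothetical and are not part of Blackboard. The sketch only encodes the two figures quoted in this post.

```python
from datetime import datetime, timedelta

RETENTION = timedelta(days=140)       # actions older than this are not stored
UPDATE_DELAY = timedelta(minutes=20)  # the log can take this long to update


def log_status(action_time: datetime, now: datetime) -> str:
    """Describe whether a student action should appear in the activity log."""
    age = now - action_time
    if age > RETENTION:
        return "expired"
    if age < UPDATE_DELAY:
        return "may not be visible yet"
    return "visible"
```

In practice this means that an action from the last few minutes may not have appeared yet, and anything from more than 140 days ago will no longer be available.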

Wiris and math editor update

The Wiris engine and equation editor have been updated to improve performance, in particular the rendering of subscript and superscript formulas.

More information

Please get in touch with your learning technologist if you would like any more information or support using the new features available in this month’s upgrade: Who is my learning technologist?
